by Joche Ojeda | Jan 12, 2026 | A.I, Copilot

I recently listened to an episode of the Merge Conflict podcast by James Montemagno and Frank Krueger where a topic came up that I had never explicitly framed before: greenfield vs. brownfield projects.

That surprised me—not because the ideas were new, but because I’ve spent years deep in software architecture and AI, and yet I had never put a name to something I deal with almost daily.

Once I did a bit of research (and yes, asked ChatGPT too), everything clicked.

Greenfield and Brownfield, in Simple Terms

- Greenfield projects are built from scratch. No legacy code, no historical baggage, no technical debt.

- Brownfield projects already exist. They carry history: multiple teams, different styles, shortcuts, and decisions made under pressure.

If that sounds abstract, here’s the practical version:

Greenfield is what we want.

Brownfield is what we usually get.

Greenfield Projects: Architecture Paradise

In a greenfield project, everything feels right.

You can choose your architecture and actually stick to it. If you’re building a .NET MAUI application, you can start with proper MVVM, SOLID principles, clean boundaries, and consistent conventions from day one.

As developers, we know how things should be done. Greenfield projects give us permission to do exactly that.

They’re also extremely friendly to AI tools.

When the rules are clear and consistent, Copilot and AI agents perform beautifully. You can define specs, outline patterns, and let the tooling do a lot of the repetitive work for you.

That’s why I often use AI for greenfield work such as internal tools or side projects: things I’ve always known how to build but never had the time to prioritize. Today, time is no longer the constraint. Tokens are.

Brownfield Projects: Welcome to Reality

Then there’s the real world.

At the office, we work with applications that have been touched by many hands over many years—sometimes 10 different teams, sometimes freelancers, sometimes “someone’s cousin who fixed it once.”

Each left behind a different style, different patterns, and different assumptions.

Customers often describe their systems like this:

“One team built it, another modified it, then my cousin fixed a bug, then my cousin got married and stopped helping, and then someone else took over.”

And yet—the system works.

That’s an important reminder.

The main job of software is not to be beautiful. It’s to do the job.

A lot of brownfield systems are ugly, fragile, and terrifying to touch—but they deliver real business value every single day.

Why AI Is Even More Powerful in Brownfield Projects

Here’s my honest opinion, based on experience:

AI is even more valuable in brownfield projects than in greenfield ones.

I’ve modernized six or seven legacy applications so far—codebases that everyone was afraid to touch. AI made that possible.

Legacy systems are mentally expensive. Reading spaghetti code drains energy. Understanding implicit behavior takes time. Humans get tired.

AI doesn’t.

It will patiently analyze a 2,000-line class without complaining.

Take Windows Forms as an example. It’s old technology, easy to forget, and full of quirks. Copilot can generate code that I know how to write, only much faster than I could after years away from WinForms.

Even more importantly, AI makes it far easier to introduce tests into systems that never had them:

- Add tests class by class

- Mock dependencies safely

- Lock in existing behavior before refactoring

Historically, this was painful enough that many teams preferred a full rewrite.

But rewrites have a hidden cost: every rewritten line is a chance to introduce new bugs.

AI allows us to modernize in place—incrementally and safely.
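As a rough illustration of what "locking in existing behavior" can look like, here is a minimal characterization-test sketch. It is written in Python only to keep it short; the same idea carries over to C# with any test framework. The `legacy_price` function and its recorded values are hypothetical stand-ins, not code from a real project.

```python
def legacy_price(quantity, unit_price, customer_type):
    """Hypothetical stand-in for a legacy routine full of unexplained rules."""
    total = quantity * unit_price
    if customer_type == "VIP" and quantity > 10:
        total = total * 0.9
    if total > 1000:
        total = total - 5  # undocumented adjustment: preserved, not judged
    return round(total, 2)


# Inputs paired with the outputs observed by running the code as it is today.
RECORDED_BEHAVIOR = [
    ((1, 10.0, "REGULAR"), 10.0),
    ((20, 10.0, "VIP"), 180.0),
    ((200, 10.0, "REGULAR"), 1995.0),
]


def find_behavior_changes(func, recorded):
    """Return (args, expected, actual) for every recorded case that changed."""
    changes = []
    for args, expected in recorded:
        actual = func(*args)
        if actual != expected:
            changes.append((args, expected, actual))
    return changes
```

The recorded values are not claims about what the code should do. They only capture what it does now, so a refactoring that changes any of them gets flagged before it ships.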

Clean Code and Business Value

This is the real win.

With AI, we no longer have to choose between:

- “The code works, but don’t touch it”

- “The code is beautiful, but nothing works yet”

We can improve structure, readability, and testability without breaking what already delivers value.

Greenfield projects are still fun. They’re great for experimentation and clean design.

But brownfield projects? That’s where AI feels like a superpower.

Final Thoughts

Today, I happily use AI in both worlds:

- Greenfield projects for fast experimentation and internal tooling

- Brownfield projects for rescuing legacy systems, adding tests, and reducing technical debt

AI doesn’t replace experience—it amplifies it.

Especially when dealing with systems held together by history, habits, and just enough hope to keep running.

And honestly?

Those are the projects where the impact feels the most real.

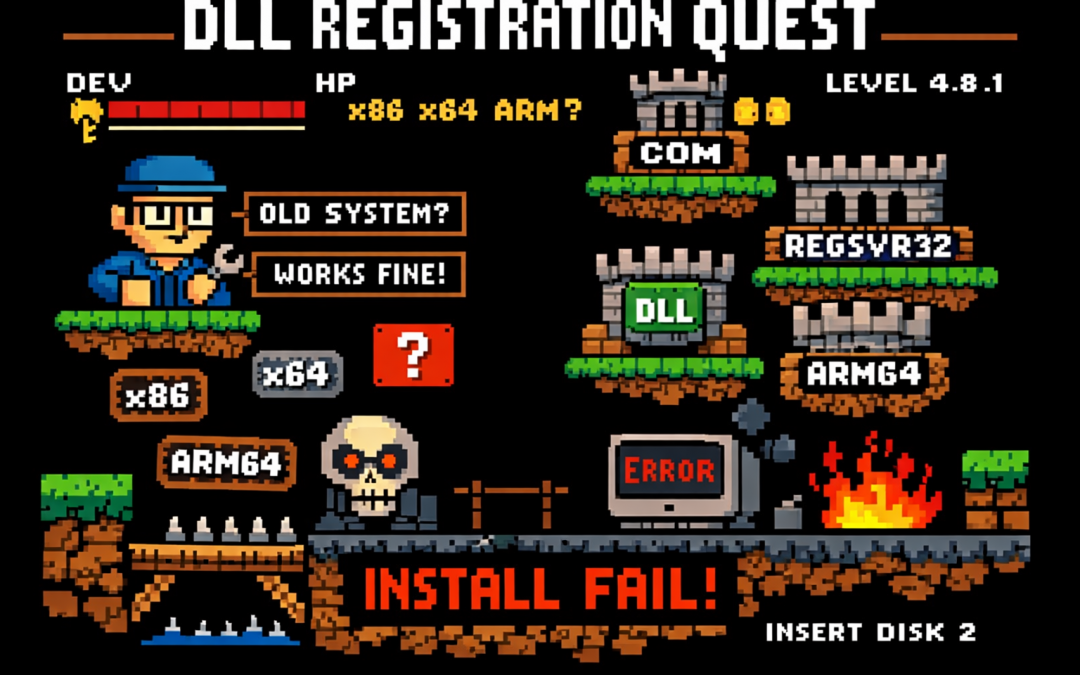

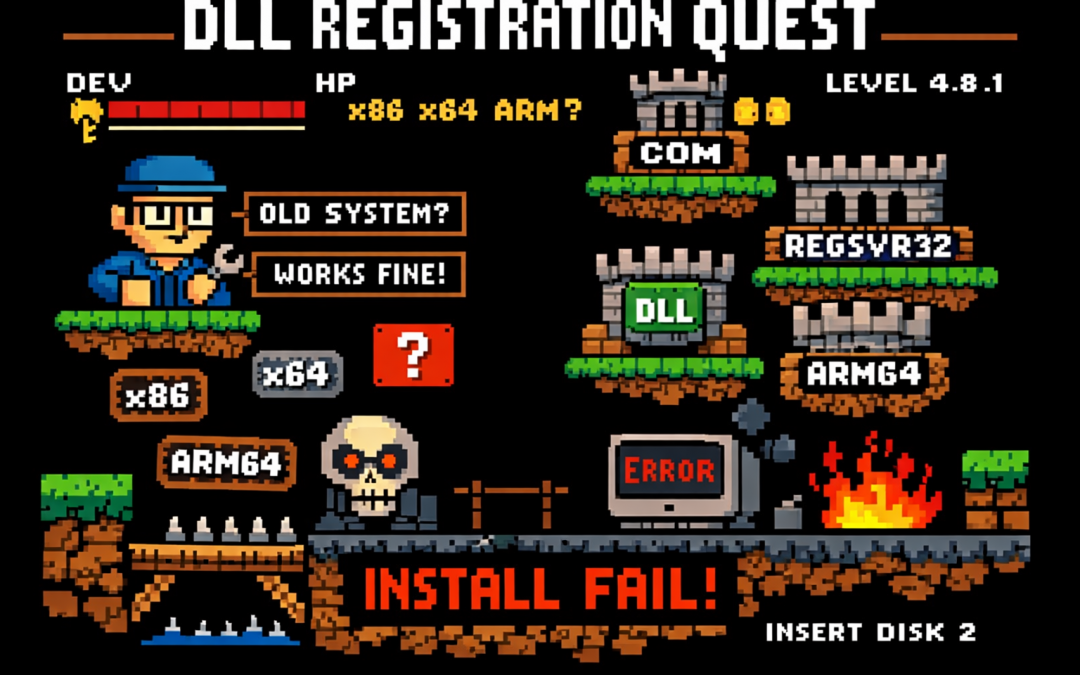

by Joche Ojeda | Jan 8, 2026 | netframework

If you’ve ever worked on a traditional .NET Framework application — the kind that predates .NET Core and .NET 5+ — this story may feel painfully familiar.

I’m talking about classic .NET Framework 4.x applications (4.0, 4.5, 4.5.1, 4.5.2, 4.6, 4.6.1, 4.6.2, 4.7, 4.7.1, 4.7.2, 4.8, and the final release 4.8.1). These systems often live long, productive lives… and accumulate interesting technical debt along the way.

This particular system is written in C# and relies heavily on COM components to render video, audio, and PDF content. Under the hood, many of these components are based on technologies like DirectShow filters, ActiveX controls, or other native COM DLLs.

And that’s where the story begins.

The Setup: COM, DirectShow, and Registration

Unlike managed .NET assemblies, COM components don’t just live quietly next to your executable. They need to be registered in the system registry so Windows knows:

- What CLSID they expose

- Which DLL implements that CLSID

- Whether it’s 32-bit or 64-bit

- How it should be activated
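To make that registry bookkeeping a bit more concrete, the sketch below builds the key path where an in-process COM server is normally recorded under HKEY_CLASSES_ROOT. It only computes strings and does not touch the registry. The function name and the sample CLSID are made up for illustration, and the layout shown assumes 64-bit Windows, where 32-bit registrations sit under the WOW6432Node branch.

```python
def inproc_server_key(clsid, bitness):
    """Return the HKEY_CLASSES_ROOT subkey for an in-process COM server.

    The key's default value holds the DLL path, and its ThreadingModel
    value describes how the component expects to be activated.
    """
    if bitness not in (32, 64):
        raise ValueError("bitness must be 32 or 64")
    # Normalize the CLSID to the braced, upper-case form.
    clsid = "{" + clsid.strip("{}").upper() + "}"
    # Assumption: 64-bit Windows, where 32-bit COM classes are redirected.
    prefix = "WOW6432Node\\" if bitness == 32 else ""
    return f"{prefix}CLSID\\{clsid}\\InprocServer32"


SAMPLE_CLSID = "11111111-2222-3333-4444-555555555555"  # made-up identifier
key_for_64_bit = inproc_server_key(SAMPLE_CLSID, 64)
key_for_32_bit = inproc_server_key(SAMPLE_CLSID, 32)
```

Comparing the two results shows why a component can be "registered" and still invisible to the process that needs it: the 32-bit and 64-bit entries are separate keys.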

For DirectShow-based components (very common for video/audio playback in legacy apps), registration is usually done manually during development using regsvr32.

Example:

regsvr32 MyVideoFilter.dll

To unregister:

regsvr32 /u MyVideoFilter.dll

Important detail that bites a lot of people on 64-bit Windows:

- 32-bit DLLs must be registered using:

C:\Windows\SysWOW64\regsvr32.exe My32BitFilter.dll

- 64-bit DLLs must be registered using:

C:\Windows\System32\regsvr32.exe My64BitFilter.dll

Yes — the folder names are historically confusing.
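One way to take the guesswork out of this is to read the architecture straight from the DLL's PE header and pick the matching regsvr32 from that. The sketch below is a minimal Python illustration under that assumption. The helper names are hypothetical, and the path table only covers the x86 and x64 cases described above on 64-bit Intel/AMD Windows.

```python
import struct

# Machine field values from the PE/COFF header.
MACHINE_NAMES = {0x014C: "x86", 0x8664: "x64", 0xAA64: "ARM64"}

# Assumption: 64-bit Intel/AMD Windows, as described in the text above.
REGSVR32_BY_ARCH = {
    "x86": r"C:\Windows\SysWOW64\regsvr32.exe",
    "x64": r"C:\Windows\System32\regsvr32.exe",
}


def pe_machine(data):
    """Return the architecture name stored in a PE file's COFF header."""
    if len(data) < 0x40 or data[:2] != b"MZ":
        raise ValueError("not a PE file: missing MZ header")
    # Offset 0x3C of the DOS header points at the "PE\0\0" signature.
    (pe_offset,) = struct.unpack_from("<I", data, 0x3C)
    if data[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("not a PE file: missing PE signature")
    # The two-byte Machine field follows the signature directly.
    (machine,) = struct.unpack_from("<H", data, pe_offset + 4)
    return MACHINE_NAMES.get(machine, f"unknown(0x{machine:04X})")


def regsvr32_for_arch(arch):
    """Pick the regsvr32 path for a DLL architecture, or fail loudly."""
    try:
        return REGSVR32_BY_ARCH[arch]
    except KeyError:
        raise ValueError(f"no regsvr32 mapping for architecture {arch!r}")


def regsvr32_for_dll(dll_path):
    """Read the header of a DLL on disk and choose the matching regsvr32."""
    with open(dll_path, "rb") as handle:
        return regsvr32_for_arch(pe_machine(handle.read(4096)))
```

Calling `regsvr32_for_dll` on a real DLL returns the path to run. An unknown or unsupported architecture raises an error instead of silently registering with the wrong tool.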

Development Works… Until It Doesn’t

So here’s the usual development flow:

- You register all required COM DLLs on your development machine

- Visual Studio runs the app

- Video plays, audio works, PDFs render

- Everyone is happy

Then comes the next step.

“Let’s build an installer.”

The Installer Paradox

This is where the real battle story begins.

Your application installer (MSI, InstallShield, WiX, Inno Setup — pick your poison) now needs to:

- Copy the COM DLLs

- Register them during installation

- Unregister them during uninstall

This seems reasonable… until you test it.

The Loop From Hell

Here’s what happens in practice:

- You install your app for testing

- The installer registers its own copies of the COM DLLs

- Your development environment was using different copies (maybe newer, maybe local builds)

- Suddenly:

  - Your source build stops working

  - Visual Studio debugging breaks

  - Another app on your machine mysteriously fails

Then you:

- Uninstall the app

- The installer unregisters the DLLs

- Now nothing works anymore

So you re-register the DLLs manually for development…

…and the cycle repeats.

The Battle Story: It Only Worked… Until It Didn’t

For a long time, this system appeared to work just fine.

Video played. Audio rendered. PDFs opened. No obvious errors.

What we didn’t realize at first was a dangerous hidden assumption:

The system only worked on machines where a previous version had already been installed.

Those older installations had left COM DLLs registered in the system — quietly, globally, and invisibly.

So when we deployed a new version without removing the old one:

- Everything looked fine

- No one suspected missing registrations

- The system passed casual testing

The illusion broke the moment we tried a clean installation.

On a fresh machine — no previous version, no leftover registry entries — the application suddenly failed:

- Components didn’t initialize

- Media rendering silently broke

- COM activation errors appeared only in Event Viewer

The installer claimed it was registering the DLLs.

In reality, it wasn’t doing it correctly — or at least not in the way the application actually needed.

That’s when we realized we were standing on years of accidental state.
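A small preflight check can surface this kind of accidental state early instead of leaving it to Event Viewer. The sketch below is one possible shape for it, not what the real system does. `check_registrations` and its parameters are hypothetical, and the registry lookup is passed in as a function so the logic can be tried without touching a real registry.

```python
import ntpath  # Windows path rules, usable on any platform


def check_registrations(required, lookup, install_dir):
    """Return a list of problems; an empty list means everything lines up.

    required:    dict of component name -> CLSID string
    lookup:      callable returning the registered DLL path for a CLSID,
                 or None when the CLSID is not registered
    install_dir: folder this installation is expected to load DLLs from
    """
    problems = []
    wanted = ntpath.normcase(ntpath.normpath(install_dir))
    for name, clsid in sorted(required.items()):
        path = lookup(clsid)
        if path is None:
            problems.append(f"{name}: {clsid} is not registered")
            continue
        actual = ntpath.normcase(ntpath.dirname(ntpath.normpath(path)))
        if actual != wanted:
            # Registered, but by something else: likely an older install.
            problems.append(f"{name}: registered from {path}, outside {install_dir}")
    return problems
```

The second check is the one that would have caught the situation described above: a registration that exists, but belongs to a previous installation rather than the one being tested.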

Why This Happens

The core problem is simple but brutal:

COM registration is global and mutable.

There is:

- One registry

- One CLSID mapping

- One “active” DLL per COM component in each registry view (32-bit or 64-bit)

Your development environment, your installed application, and your installer are all fighting over the same global state.
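The fight over global state can be shown with a toy model. The class below is not how Windows stores registrations; it is a deliberately simplified Python sketch with made-up paths and a made-up CLSID, meant only to show why "last writer wins" plus "uninstall removes the entry" leaves the developer with nothing.

```python
class GlobalComRegistry:
    """Toy model: one machine-wide mapping from CLSID to DLL path."""

    def __init__(self):
        self._servers = {}

    def register(self, clsid, dll_path):
        # Last writer wins, silently replacing whoever registered before.
        self._servers[clsid] = dll_path

    def unregister(self, clsid):
        # Removes the entry regardless of who originally created it.
        self._servers.pop(clsid, None)

    def resolve(self, clsid):
        return self._servers.get(clsid)


CLSID = "{11111111-2222-3333-4444-555555555555}"  # made-up identifier
DEV_COPY = r"C:\src\MyApp\bin\Debug\MyVideoFilter.dll"
INSTALLED_COPY = r"C:\Program Files\MyApp\MyVideoFilter.dll"

registry = GlobalComRegistry()
registry.register(CLSID, DEV_COPY)         # developer runs regsvr32
registry.register(CLSID, INSTALLED_COPY)   # installer registers its copy
after_install = registry.resolve(CLSID)    # now the installed copy
registry.unregister(CLSID)                 # uninstaller cleans up
after_uninstall = registry.resolve(CLSID)  # None: the dev build is broken too
```

Nothing in the model remembers that the developer's registration ever existed, which is exactly the loop described earlier.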

.NET Framework itself isn’t the villain here — it’s just sitting on top of an old Windows integration model that predates modern isolation concepts.

A New Player Enters: ARM64

Just when we thought the problem space was limited to x86 vs x64, another variable entered the scene.

One of the development machines was ARM64.

Modern Windows on ARM adds a new layer of complexity:

- ARM64 native processes

- x64 emulation

- x86 emulation on top of ARM64

From the outside, everything looks like it’s running on x64.

Under the hood, it’s not that simple.

Why This Makes COM Registration Worse

COM registration is architecture-specific:

- x86 DLLs register under one view of the registry

- x64 DLLs register under another

- ARM64 introduces yet another execution context

On Windows ARM:

- System32 contains ARM64 binaries

- SysWOW64 contains x86 binaries

- x64 binaries often run through emulation layers

So now the questions multiply:

- Which regsvr32 did the installer call?

- Was it ARM64, x64, or x86?

- Did the app run natively, or under emulation?

- Did the COM DLL match the process architecture?

The result is a system where:

- Some things work on Intel machines

- Some things work on ARM machines

- Some things only work if another version was installed first

At this point, debugging stops being logical and starts being archaeological.
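One piece of that archaeology can at least be made mechanical: an in-process COM DLL is loaded into the calling process, so its architecture has to match the process. The sketch below assumes you already know each DLL's architecture and flags the ones that cannot load. It is a simplification that treats architectures as plain labels and ignores hybrid binary formats; the function and file names are hypothetical.

```python
def find_arch_mismatches(process_arch, components):
    """Return the names of in-process components that cannot load.

    process_arch: architecture the application process runs as,
                  for example "x86", "x64" or "ARM64"
    components:   dict of DLL name -> architecture of that DLL
    """
    return sorted(
        name for name, arch in components.items() if arch != process_arch
    )


# Made-up inventory of the COM DLLs an application depends on.
COMPONENTS = {
    "MyVideoFilter.dll": "x86",
    "MyAudioFilter.dll": "x86",
    "MyPdfViewer.dll": "x64",
}

broken_in_x86_process = find_arch_mismatches("x86", COMPONENTS)
broken_in_arm64_process = find_arch_mismatches("ARM64", COMPONENTS)
```

Running the same inventory against each process architecture you ship makes it obvious which combinations were only ever working by accident.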

Why This Is So Common in .NET Framework 4.x Apps

Many enterprise and media-heavy applications built on:

- .NET Framework 4.0–4.8.1

- WinForms or WPF

- DirectShow or ActiveX components

were designed in an era where:

- Global COM registration was normal

- Side-by-side isolation was rare

- “Just register the DLL” was accepted practice

These systems work, but they’re fragile — especially on developer machines.

Where the Article Is Going Next

In the rest of this article series, we’ll look at:

- Why install-time registration is often a mistake

- How to isolate development vs runtime environments

- Techniques like:

  - Dedicated dev VMs

  - Registration-free COM (where possible)

  - App-local COM deployment

  - Clear ownership rules for installers

- How to survive (and maintain) legacy .NET Framework systems without losing your sanity

If you’ve ever broken your own development environment just by testing your installer — you’re not alone.

This is the cost of living at the intersection of managed code and unmanaged history.

by Joche Ojeda | Jan 21, 2025 | Uncategorized

During my recent AI research break, I found myself taking a walk down memory lane, reflecting on my early career in data analysis and ETL operations. This journey brought me back to an interesting aspect of software development that has evolved significantly over the years: the management of shared libraries.

The VB6 Era: COM Components and DLL Hell

My journey began with Visual Basic 6, where shared libraries were managed through COM components. The concept seemed straightforward: store shared DLLs in the Windows System directory (typically C:\Windows\System32) and register them using regsvr32.exe. The Windows Registry kept track of these components under HKEY_CLASSES_ROOT.

However, this system had a significant flaw that we now know as “DLL Hell.” Let me share a practical example: imagine you have two systems, A and B, both using Crystal Reports 7. If you uninstalled either system, the other could break because the uninstaller removed the shared DLL. Versioning was managed mostly by location: whichever copy sat in the shared directory was the version every application got, which made the whole arrangement precarious at best.

Enter .NET Framework: The GAC Revolution

When Microsoft introduced the .NET Framework, it brought a sophisticated solution to these problems: the Global Assembly Cache (GAC). Located at C:\Windows\Microsoft.NET\assembly\ (for .NET 4.0 and later), the GAC represented a significant improvement in shared library management.

The most revolutionary aspect was the introduction of assembly identity. Instead of relying solely on filenames and locations, each assembly now had a unique identity consisting of:

- Simple name (e.g., “MyCompany.MyLibrary”)

- Version number (e.g., “1.0.0.0”)

- Culture information

- Public key token

A typical assembly full name would look like this:

MyCompany.MyLibrary, Version=1.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089

This robust identification system meant that multiple versions of the same assembly could coexist peacefully, solving many of the versioning nightmares that plagued the VB6 era.
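To show how the four parts of the identity work together, here is a small Python sketch that splits a full name into its components and uses them as a lookup key. It is an illustration of the idea, not the GAC's actual implementation. The function names are made up, and the second version string is invented for the example.

```python
def parse_assembly_name(full_name):
    """Split an assembly full name into a dict of its identity parts."""
    parts = [part.strip() for part in full_name.split(",")]
    identity = {"Name": parts[0]}
    for part in parts[1:]:
        key, _, value = part.partition("=")
        identity[key.strip()] = value.strip()
    return identity


def identity_key(full_name):
    """Build a key from all four identity parts, not just the file name."""
    parsed = parse_assembly_name(full_name)
    return (
        parsed["Name"],
        parsed.get("Version"),
        parsed.get("Culture"),
        parsed.get("PublicKeyToken"),
    )


V1 = "MyCompany.MyLibrary, Version=1.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089"
V2 = "MyCompany.MyLibrary, Version=2.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089"

# Toy cache keyed by full identity: both versions fit side by side.
cache = {identity_key(name): name for name in (V1, V2)}
```

Because the version is part of the key, adding version 2.0.0.0 does not overwrite 1.0.0.0, which is the property the VB6-era system directory never had.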

The Modern Approach: Private Dependencies

Fast forward to 2025, and we’re living in what I call the “brave new world” of cross-platform .NET, which runs on multiple operating systems. The landscape has changed dramatically. Storage is no longer the premium resource it once was, and the trend has shifted away from shared libraries toward application-local deployment.

Modern applications often ship with their own private version of the .NET runtime and dependencies. This approach eliminates the risks associated with shared components and gives applications complete control over their runtime environment.

Reflection on Technology Evolution

While researching Blazor’s future and seeing discussions about Microsoft’s technology choices, I’m reminded that technology evolution is a constant journey. Organizations move slowly in production environments, and that’s often for good reason. The shift from COM components to GAC to private dependencies wasn’t just a technical evolution – it was a response to real-world problems and changing resources.

This journey from VB6 to modern .NET reveals an interesting pattern: sometimes the best solution isn’t sharing resources but giving each application its own isolated environment. It’s fascinating how the decreasing cost of storage and the increasing need for reliability have transformed our approach to dependency management.

As I return to my AI research, this trip down memory lane serves as a reminder that while technology constantly evolves, understanding its history helps us appreciate the solutions we have today and better prepare for the challenges of tomorrow.