by Joche Ojeda | Apr 29, 2026 | A.I, Local Model Adventures

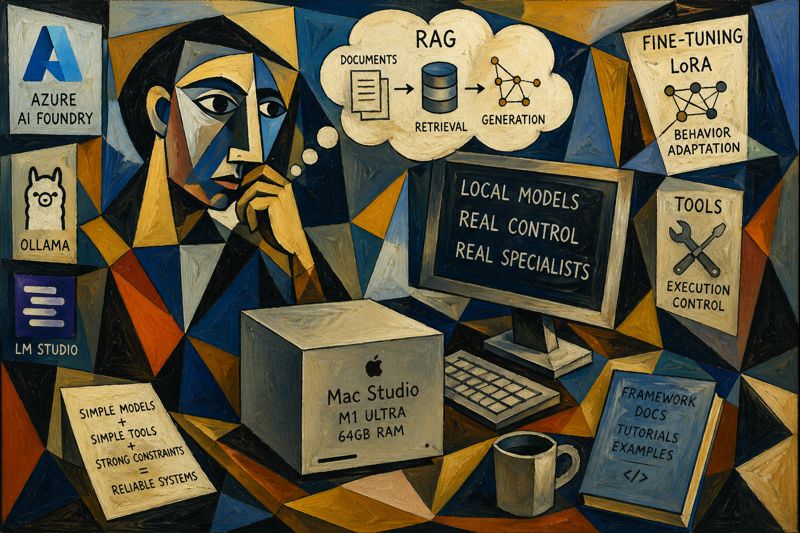

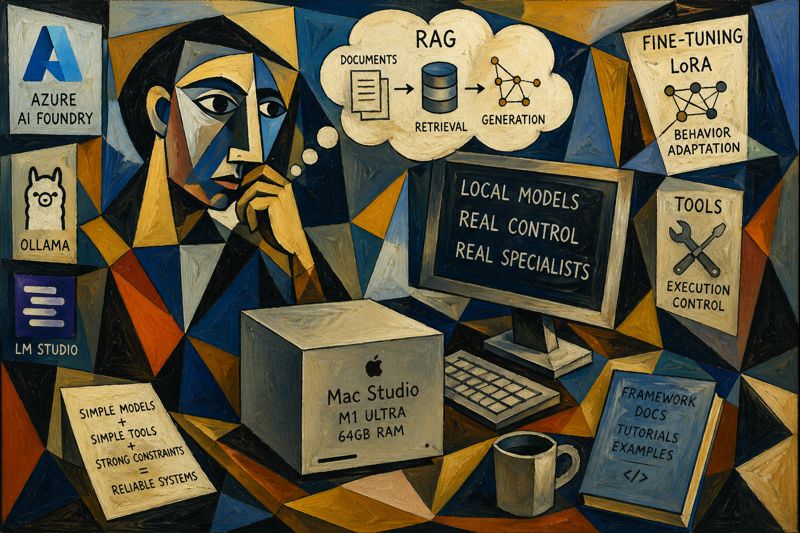

I was recently in Arizona and got my hands on a Mac Studio (M1 Ultra, 64GB RAM).

Naturally, I didn’t use it for video editing or music production. I used it for what actually matters:

Running local AI models and trying to bend them to my will.

The Setup

I went all-in and installed three main tools:

The goal was simple:

Try every possible way to make open-source models behave like something useful.

Not just chatting. Not just “vibe coding.” Actual controlled behavior.

The Reality Check

After working with frontier models like those from OpenAI and Anthropic, one thing becomes obvious:

They’re just better.

Not because of magic.

Because of:

- more training data

- better curated data

- more compute

- more iterations

- better eval loops

It’s not one thing. It’s everything combined.

You feel it immediately:

- better reasoning

- better language coverage

- better consistency

- fewer weird edge cases

So What’s the Point of Local Models?

This is where things get interesting.

Instead of trying to compete with frontier models, I flipped the approach:

Don’t build a general intelligence. Build a specialist.

The Core Idea: Single-Task Specialists

Instead of asking:

“How do I make this model as good as GPT or Claude?”

Ask:

“How do I make this model extremely good at ONE thing?”

That changes everything.

The Stack of Techniques

1. Base Model Selection

Small to mid-size models:

- 7B–8B range works best locally

2. RAG (Retrieval-Augmented Generation)

Inject knowledge at runtime:

- docs

- codebases

- tutorials

- architecture notes
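Here is a minimal sketch of what "inject knowledge at runtime" can look like. It assumes a tiny in-memory document set and naive word-overlap scoring instead of a real embedding index. The snippets, `retrieve`, and `build_prompt` are illustrative names, not part of any library.

```python
import re

# Hypothetical knowledge snippets; in practice these would come from docs,
# codebases, tutorials, or architecture notes.
DOCS = {
    "controllers.md": "A controller adds actions to a view and reacts to UI events.",
    "security.md": "Roles and permissions restrict which users can read or write objects.",
    "reports.md": "Reports are designed once and rendered from persisted business objects.",
}

def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the names of the k documents sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(DOCS, key=lambda name: len(q & tokenize(DOCS[name])), reverse=True)
    return ranked[:k]

def build_prompt(question: str, k: int = 1) -> str:
    """Prepend the retrieved snippets to the question before sending it to the model."""
    context = "\n".join(f"[{name}] {DOCS[name]}" for name in retrieve(question, k))
    return f"Use only this context:\n{context}\n\nQuestion: {question}"

print(build_prompt("How do I restrict permissions for users?"))
```

The model never has to memorize the docs. It only sees the few lines that match the question.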

3. Behavior Tuning (LoRA / fine-tuning)

Instead of teaching knowledge, teach patterns:

- how to respond

- how to structure outputs

- how to write code

- how to fix bugs

4. Tooling / Skills

Give the model bounded capabilities:

- limited toolset

- deterministic flows

- controlled execution

The Big Insight

The breakthrough for me was this:

Knowledge ≠ Behavior

You don’t train the model to know everything. You train it to behave correctly within a narrow domain.

Example: Framework Specialist

Let’s say you take a framework like DevExpress XAF.

You can build a local specialist by combining:

Knowledge Layer (RAG)

- official docs

- tutorials

- your internal patterns

- real-world examples

Behavior Layer (fine-tuning)

- “user asks → correct implementation”

- “buggy code → fixed code”

- “requirement → test → solution”
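Pairs like these are usually stored one example per line. The sketch below assumes a JSON Lines file with `prompt` and `completion` fields. That layout is an assumption, since the expected format depends on the fine-tuning tool you use, and the example contents are made up.

```python
import json

# Hypothetical training pairs; the "prompt"/"completion" field names are an
# assumption, since the expected layout depends on the fine-tuning tool.
EXAMPLES = [
    {"kind": "implementation",
     "prompt": "Add a button that marks the selected task as done.",
     "completion": "// controller code implementing the action goes here"},
    {"kind": "bugfix",
     "prompt": "Fix: total = price + quantity",
     "completion": "total = price * quantity"},
]

def to_jsonl(examples: list[dict]) -> str:
    """Serialize each example as one JSON object per line."""
    return "\n".join(json.dumps(e, ensure_ascii=False) for e in examples)

def from_jsonl(text: str) -> list[dict]:
    """Parse JSON Lines text back into a list of examples."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]

jsonl = to_jsonl(EXAMPLES)
print(jsonl)
```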

Tool Layer

- code generation

- validation scripts

- test runners

Now instead of a generic model, you get:

An XAF expert agent that runs locally.

Why This Works

Because you’re not fighting the limitations anymore.

You’re embracing them.

Small models:

- are faster

- are cheaper

- are controllable

- are predictable

And when you narrow the scope:

They become surprisingly good.

The Direction I’m Exploring

What really got my attention is this idea:

Simple models + simple tools + strong constraints = reliable systems

Not:

- giant models

- huge toolchains

- uncontrolled agents

But:

- small models

- few tools

- explicit rules

- tight loops

What’s Next

I’m going to keep experimenting and document everything:

- different models (Qwen, Llama, etc.)

- different training approaches (LoRA, no training, RAG-only)

- different tool strategies

- evaluation setups

- failure cases (this is the important part)

Final Thought

Frontier models are incredible.

But local models are hackable.

And that’s the real advantage.

You can shape them.

You can constrain them.

You can turn them into specialists.

And for real-world systems, that might actually be more valuable than raw intelligence.

by Joche Ojeda | Mar 26, 2026 | A.I

After discovering that tools were silently burning my context window, I had a new problem:

How do I keep powerful agents without paying the cost of massive schemas?

The answer did not come from AI.

It came from something much older.

👉 The command line.

🧠 The Problem with Traditional Tools

Most agent tools today are defined with JSON schemas, nested parameters, verbose descriptions, and strict typing.

They look clean to us as developers.

But to an LLM?

👉 They are heavy prompt payloads.

Every request includes:

- full schema

- all parameters

- all descriptions

Even if the user only says:

“order coffee”

💥 Why This Does Not Scale

Let’s say you have:

- 30 tools

- each with 800 tokens

That means:

👉 24,000 tokens before the user even speaks

And most of those tokens are:

- never used

- rarely relevant

- repeated every time
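The arithmetic is simple enough to check in a few lines. The 30 tools and 800 tokens come from the example above; the 5-token user message is an assumed size for something like "order coffee".

```python
def tool_overhead(tool_count: int, tokens_per_tool: int) -> int:
    """Tokens spent on tool definitions before any user text is added."""
    return tool_count * tokens_per_tool

overhead = tool_overhead(30, 800)
print(overhead)  # 24000

# Share of the prompt taken by tool definitions when the user message is tiny.
user_tokens = 5  # assumed size of a short message
share = overhead / (overhead + user_tokens)
print(f"{share:.2%} of the prompt is tool definitions")
```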

🔍 Rethinking Tools

So I asked myself:

Do tools really need to be JSON schemas?

Or…

👉 Do they just need to be understandable commands?

⚡ Enter CLI-Style Tools

Instead of this:

    {
      "name": "create_order",
      "parameters": {
        "userId": "...",
        "productId": "...",
        "quantity": "..."
      }
    }

You define tools like this:

create_order uid pid qty

Or even:

order_coffee size=large sugar=0

🧬 Why This Works

Because LLMs are incredibly good at:

- parsing text

- understanding intent

- filling structured patterns

You do not need:

- deep JSON

- verbose schemas

- long descriptions

👉 You just need clear syntax

📉 Token Cost Comparison

JSON Tool

~800–1500 tokens

CLI Tool

~10–30 tokens

👉 That is roughly a 50x–100x reduction in typical cases
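The exact factor depends on which ends of the two ranges you compare. A quick check, using only the figures quoted above:

```python
json_tokens = (800, 1500)  # per-tool range for JSON definitions, from the text
cli_tokens = (10, 30)      # per-tool range for CLI-style definitions

def midpoint(rng: tuple[int, int]) -> float:
    """Middle of a (low, high) range."""
    return (rng[0] + rng[1]) / 2

lowest = json_tokens[0] / cli_tokens[1]    # lightest JSON tool vs. longest CLI tool
highest = json_tokens[1] / cli_tokens[0]   # heaviest JSON tool vs. shortest CLI tool
typical = midpoint(json_tokens) / midpoint(cli_tokens)

print(f"lowest {lowest:.1f}x, typical {typical:.1f}x, highest {highest:.1f}x")
```

Even the least favorable pairing is more than an order of magnitude cheaper.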

🧠 Less Structure, Better Reasoning

With CLI tools:

- fewer tokens means more room for conversation

- simpler format means easier decisions

- less noise means better accuracy

The model does not need to:

- parse nested JSON

- match schemas

- validate deep structures

It just:

👉 generates a command

⚙️ How It Looks in Practice

User says:

“I want a large coffee with no sugar”

Agent outputs:

order_coffee size=large sugar=0

Your backend:

- parses the string

- validates parameters

- executes the action
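The parsing step can be very small. This is a sketch, assuming commands always follow the `name key=value ...` shape shown above. `parse_command` is a hypothetical helper, and `shlex` is used so quoted values with spaces survive.

```python
import shlex

def parse_command(line: str) -> tuple[str, dict[str, str]]:
    """Split 'name key=value ...' into the command name and an argument dict."""
    tokens = shlex.split(line)
    if not tokens:
        raise ValueError("empty command")
    name, args = tokens[0], {}
    for token in tokens[1:]:
        key, sep, value = token.partition("=")
        if not sep or not key:
            raise ValueError(f"expected key=value, got {token!r}")
        args[key] = value
    return name, args

print(parse_command("order_coffee size=large sugar=0"))
# ('order_coffee', {'size': 'large', 'sugar': '0'})
```

Everything arrives as a string. Type conversion and validation belong to the next step, not to the model.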

🧩 You Move Complexity Out of the Model

This is the key shift:

Before:

- the model handles structure

- the model handles validation

- the model handles formatting

After:

- the model generates intent

- your code handles everything else

👉 Which is exactly what we want as engineers

🚀 Benefits for Real Systems

If you are building something like:

- WhatsApp or Telegram agents

- multi-domain assistants

CLI tools give you:

- massive token savings

- faster responses

- lower cost per user

- better scalability

- simpler tool definitions

⚠️ Trade-offs

CLI tools are not magic.

You lose:

- strict schema validation in the prompt

- automatic argument formatting

- some guardrails

So you must:

- validate inputs in your backend

- handle errors gracefully

- design clean command syntax
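Here is a rough sketch of backend validation that fails gracefully. It assumes a hand-written registry of commands and allowed values. The registry contents and the sugar limit are made up for illustration. The returned messages can be sent back to the model so it can retry.

```python
# Hypothetical command registry: allowed parameters and a check for each value.
COMMANDS = {
    "order_coffee": {
        "size": lambda v: v in {"small", "medium", "large"},
        "sugar": lambda v: v.isdigit() and int(v) <= 5,
    },
}

def validate(name: str, args: dict[str, str]) -> list[str]:
    """Return a list of readable problems; an empty list means the call is valid."""
    spec = COMMANDS.get(name)
    if spec is None:
        return [f"unknown command: {name}"]
    errors = [f"unknown parameter: {k}" for k in args if k not in spec]
    errors += [f"missing parameter: {k}" for k in spec if k not in args]
    errors += [f"invalid value for {k}: {args[k]}"
               for k, check in spec.items() if k in args and not check(args[k])]
    return errors

print(validate("order_coffee", {"size": "large", "sugar": "0"}))  # []
print(validate("order_coffee", {"size": "huge"}))
# ['missing parameter: sugar', 'invalid value for size: huge']
```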

🧠 Best Practices

1. Keep commands short

order_coffee

book_yoga

pay_invoice

2. Use key=value pairs

book_yoga date=2026-03-28 time=10:00

3. Avoid ambiguity

Bad:

order stuff

Good:

order_food item=pizza size=large

4. Build a parser layer in C#

This fits perfectly with a .NET stack:

- Regex or tokenizer

- map to DTO

- validate

- execute

5. Combine with routing

The best setup looks like this:

Router → Domain → CLI tools

Now you get:

- small toolsets

- tiny prompts

- efficient agents

💡 The Big Insight

After all this, I realized:

JSON schemas are for machines.

CLI commands are for language models.

🏁 Conclusion

The goal is not to remove tools.

The goal is to:

👉 make tools cheaper to think about

CLI-style tools:

- reduce tokens

- simplify reasoning

- scale better

And most importantly:

They let your agent focus on what actually matters — understanding the user.

by Joche Ojeda | Mar 26, 2026 | A.I

After testing OpenClaw, something clicked.

The future is not chat.

👉 The future is agents.

🛠️ Building the “Perfect” Agent

I started designing what I thought would be the ultimate assistant:

- General purpose

- Connected to everything

- Capable of doing real tasks

And to make that happen…

I built tools.

A lot of tools.

Not generic ones — very specific tools:

- booking flows

- ordering systems

- logistics

- payments

- daily life actions

Before I knew it…

👉 My agent had around 50 custom tools

And honestly, it felt powerful.

💡 The Business Idea

The plan was simple:

- Give users a few free tokens per day

- Let them try the agent

- Hook them with real utility

A classic freemium model.

💥 Reality Hit Immediately

What actually happened?

Users would send:

“Hello”

…and then…

👉 Quota exceeded

Not after a conversation.

Not after a task.

After the second request.

🤨 That Made No Sense

At first, I thought:

- Maybe there’s a bug

- Maybe token counting is wrong

- Maybe pricing is off

But everything checked out.

Still:

- almost no conversation

- almost no output

- quota gone

🧠 That’s When I Started Digging

So I did what we always do:

👉 I looked under the hood

And what I found changed how I think about agents completely.

🔍 The Hidden Cost of Tools

I realized something critical:

My agent wasn’t just sending messages.

It was sending all 50 tools on every request.

Every. Single. Time.

📦 What That Actually Means

Each tool had:

- name

- description

- parameters

- JSON schema

- nested objects

Individually? Fine.

Together?

👉 Massive.

So even a simple request like:

“Hello”

Was actually being processed like:

[system prompt]

[conversation]

[50 tool definitions]

[user: Hello]

🔥 I Was Burning Tokens Without Knowing

That’s when it clicked.

The user wasn’t paying for their “Hello”.

They were paying for:

👉 the entire toolset injected into the prompt

📉 Why My Quota Disappeared Instantly

Let’s do the math.

- each tool ≈ 600–1000 tokens

- I had ~50 tools

👉 I was sending 30,000–50,000 tokens per request

For a “Hello”.

No wonder the quota was gone after two messages.
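To make that concrete, here is the same math as code. The tool counts and sizes are the ones above. The 2-token message and the 60,000-token daily quota are assumptions picked for illustration, not my real numbers.

```python
def prompt_tokens(tools: int, tokens_per_tool: int, message_tokens: int) -> int:
    """Total prompt size: every tool definition plus the user's message."""
    return tools * tokens_per_tool + message_tokens

def requests_within(quota: int, per_request: int) -> int:
    """How many full requests fit inside the quota."""
    return quota // per_request

low = prompt_tokens(50, 600, 2)     # lighter tools
high = prompt_tokens(50, 1000, 2)   # heavier tools
DAILY_QUOTA = 60_000                # hypothetical free-tier quota

print(low, high)                            # 30002 50002
print(requests_within(DAILY_QUOTA, low))    # 1
print(requests_within(DAILY_QUOTA, high))   # 1
```

With numbers in that range, a single request fits and the second one is over the limit.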

😳 The Illusion of “Light Usage”

From the user’s perspective:

- they typed almost nothing

- they got almost nothing

From the system’s perspective:

👉 It processed a massive prompt

🧬 The Realization

That’s when I understood:

Tools are not just capabilities.

Tools are context weight.

Every tool:

- consumes tokens

- competes for attention

- increases cost

⚠️ The Bigger Problem

It wasn’t just cost.

The agent was also:

- slower

- less accurate

- sometimes picking the wrong tool

Because it had to:

👉 reason over 50 options every time

🧠 The Shift in Thinking

Before:

“More tools = smarter agent”

After:

“More tools = heavier prompt = worse performance”

🚀 What This Changed for Me

I stopped trying to build:

❌ One agent that does everything

And started designing:

✅ Systems that load only what’s needed

🧩 The New Approach

Instead of:

Agent → 50 tools

I moved to:

User → Router → Domain Agent → 5 tools

Now:

- smaller prompts

- lower cost

- better decisions
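Here is a sketch of the routing idea, assuming plain keyword matching. A real router could be a small classifier or a cheap model call. The domains, keywords, and tool signatures below are hypothetical.

```python
from typing import Optional

# Hypothetical domains, each exposing only a handful of tools.
DOMAIN_TOOLS = {
    "food": ["order_coffee size= sugar=", "order_food item= size="],
    "wellness": ["book_yoga date= time="],
    "billing": ["pay_invoice id="],
}

# Naive keyword routing table.
KEYWORDS = {
    "food": {"coffee", "pizza", "order", "eat"},
    "wellness": {"yoga", "class", "book"},
    "billing": {"invoice", "pay", "bill"},
}

def route(message: str) -> Optional[str]:
    """Pick the domain with the most keyword hits, or None if nothing matches."""
    words = set(message.lower().split())
    best = max(KEYWORDS, key=lambda d: len(words & KEYWORDS[d]))
    return best if words & KEYWORDS[best] else None

def tools_for(message: str) -> list[str]:
    """Only the matched domain's tools are added to the prompt."""
    domain = route(message)
    return DOMAIN_TOOLS[domain] if domain else []

print(tools_for("Hello"))                  # []
print(tools_for("I want a large coffee"))  # food tools only
```

A plain "Hello" now carries zero tool definitions instead of all of them.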

💡 Final Insight

That experience taught me something simple but powerful:

If your agent feels expensive, slow, or dumb…

check how many tools you’re injecting into the prompt.

Because sometimes:

👉 You’re not scaling intelligence

👉 You’re scaling tokens

🏁 Closing

That “Hello → quota exceeded” moment was frustrating.

But it revealed a fundamental truth about agents:

The problem is not how many tools you have.

The problem is how many you send every time.

And once you see that…

You start building agents very differently.

by Joche Ojeda | Feb 16, 2026 | A.I, Apps, CLI, Github Copilot, SDK

A strange week

This week I was going to the university every day to study Russian.

Learning a new language as an adult is a very humbling experience. One moment you are designing enterprise architectures, and the next moment you are struggling to say:

me siento bien (“I feel fine”)

which in Russian is: я чувствую себя хорошо

So like any developer, I started cheating immediately.

I began using AI for everything:

- ChatGPT to review my exercises

- GitHub Copilot inside VS Code correcting my grammar

- Sometimes both at the same time

It worked surprisingly well. Almost too well.

At some point during the week, while going back and forth between my Russian homework and my development work, I noticed something interesting.

I was using several AI tools, but the one I kept returning to the most — without even thinking about it — was GitHub Copilot inside Visual Studio Code.

Not in the browser. Not in a separate chat window. Right there in my editor.

That’s when something clicked.

Two favorite tools

XAF is my favorite application framework. I’ve built countless systems with it — ERPs, internal tools, experiments, prototypes.

GitHub Copilot has become my favorite AI agent.

I use it constantly:

- writing code

- reviewing ideas

- fixing small mistakes

- even correcting my Russian exercises

And while using Copilot so much inside Visual Studio Code, I started thinking:

What would it feel like to have Copilot inside my own applications?

Not next to them. Inside them.

That idea stayed in my head for a few days until curiosity won.

The innocent experiment

I discovered the GitHub Copilot SDK.

At first glance it looked simple: a .NET library that allows you to embed Copilot into your own applications.

My first thought:

“Nice. This should take 30 minutes.”

Developers should always be suspicious of that sentence.

Because it never takes 30 minutes.

First success (false confidence)

The initial integration was surprisingly easy.

I managed to get a basic response from Copilot inside a test environment. Seeing AI respond from inside my own application felt a bit surreal.

For a moment I thought:

Done. Easy win.

Then I tried to make it actually useful.

That’s when the adventure began.

The rabbit hole

I didn’t want just a chatbot.

I wanted an agent that could actually interact with the application.

Ask questions. Query data. Help create things.

That meant enabling tool calling and proper session handling.

And suddenly everything started failing.

Timeouts. Half responses. Random behavior depending on the model. Sessions hanging for no clear reason.

At first I blamed myself.

Then my integration. Then threading. Then configuration.

Three or four hours later, after trying everything I could think of, I finally discovered the real issue:

It wasn’t my code.

It was the model.

Some models were timing out during tool calls. Others worked perfectly.

The moment I switched models and everything suddenly worked was one of those small but deeply satisfying developer victories.

You know the moment.

You sit back. Look at the screen. And just smile.

The moment it worked

Once everything was connected properly, something changed.

Copilot stopped feeling like a coding assistant and started feeling like an agent living inside the application.

Not in the IDE. Not in a browser tab. Inside the system itself.

That changes the perspective completely.

Instead of building forms and navigation flows, you start thinking:

What if the user could just ask?

Instead of:

- open this screen

- filter this grid

- generate this report

You imagine:

- “Show me what matters.”

- “Create what I need.”

- “Explain this data.”

The interface becomes conversational.

And once you see that working inside your own application, it’s very hard to unsee it.

Why this experiment mattered to me

This wasn’t about building a feature for a client. It wasn’t even about shipping production code.

Most of my work is research and development. Prototypes. Ideas. Experiments.

And this experiment changed the way I see enterprise applications.

For decades we optimized screens, menus, and workflows.

But AI introduces a completely different interaction model.

One where the application is no longer just something you navigate.

It’s something you talk to.

Also… Russian homework

Ironically, this whole experiment started because I was trying to survive my Russian classes.

Using Copilot to correct grammar. Using AI to review exercises. Switching constantly between tools.

Eventually that daily workflow made me curious:

What happens if Copilot is not next to my application, but inside it?

Sometimes innovation doesn’t start with a big strategy.

Sometimes it starts with curiosity and a small personal frustration.

What comes next

This is just the beginning.

Now that AI can live inside applications:

- conversations can become interfaces

- tools can be invoked by language

- workflows can become more flexible

We are moving from:

software you operate

to:

software you collaborate with

And honestly, that’s a very exciting direction.

Final thought

This entire journey started with a simple curiosity while studying Russian and writing code in the same week.

A few hours of experimentation later, Copilot was living inside my favorite framework.

And now I can’t imagine going back.

Note: The next article will go deep into the technical implementation — the architecture, the service layer, tool calling, and how I wired everything into XAF for both Blazor and WinForms.

by Joche Ojeda | Feb 11, 2026 | A.I

My last two articles have been about one idea: closing the loop with AI.

Not “AI-assisted coding.” Not “AI that helps you write functions.”

I’m talking about something else entirely.

I’m talking about building systems where the agent writes the code, tests the code, evaluates the result, fixes the code, and repeats, without me sitting in the middle acting like a tired QA engineer.

Because honestly, that middle position is the worst place to be.

You get exhausted. You lose objectivity. And eventually you look at the project and think:

everything here is garbage.

So the goal is simple:

Remove the human from the middle of the loop.

Place the human at the end of the loop.

The human should only confirm: “Is this what I asked for?”

Not manually test every button.

The Real Question: How Do You Close the Loop?

There isn’t a single answer. It depends on the technology stack and the type of application you’re building.

So far, I’ve been experimenting with three environments:

- Console applications

- Web applications

- Windows Forms applications (still a work in progress)

Each one requires a slightly different strategy.

But the core principle is always the same:

The agent must be able to observe what it did.

If the agent cannot see logs, outputs, state, or results — the loop stays open.

Console Applications: The Easiest Loop to Close

Console apps are the simplest place to start.

My setup is minimal and extremely effective:

- Serilog writing structured logs

- Logs written to the file system

- Output written to the console

Why both?

Because the agent (GitHub Copilot in VS Code) can run the app, read console output, inspect log files, decide what to fix, and repeat.

No UI. No browser. No complex state.

Just input → execution → output → evaluation.

If you want to experiment with autonomous loops, start here. Console apps are the cleanest lab environment you’ll ever get.
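This is a rough sketch of one pass through that loop: run the app, then gather what the agent can look at. The "app" here is a one-line Python stand-in, not a real console project, and `run_and_observe` is a hypothetical helper.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

def run_and_observe(cmd: list[str], log_path: Path) -> dict:
    """Run the app once and collect everything the agent can inspect afterwards."""
    result = subprocess.run(cmd, capture_output=True, text=True, timeout=60)
    log_text = log_path.read_text(encoding="utf-8") if log_path.exists() else ""
    return {
        "exit_code": result.returncode,
        "stdout": result.stdout,
        "stderr": result.stderr,
        "log": log_text,
    }

# Stand-in for the real console app: appends one line to a log file and prints "done".
log_file = Path(tempfile.mkdtemp()) / "app.log"
app = [sys.executable, "-c",
       "import sys; open(sys.argv[1], 'a').write('ERR division by zero\\n'); print('done')",
       str(log_file)]

observation = run_and_observe(app, log_file)
print(observation["exit_code"], observation["stdout"].strip())
print(observation["log"].strip())
```

Console output and the log file end up in one structure, which is all the evaluation step needs.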

Web Applications: Where Things Get Interesting

Web apps are more complex, but also more powerful.

My current toolset:

- Serilog for structured logging

- Logs written to filesystem

- SQLite for loop-friendly database inspection

- Playwright for automated UI testing

Even if production uses PostgreSQL or SQL Server, I use SQLite during loop testing.

Not for production. For iteration.

The SQLite CLI makes inspection trivial.

The agent can call the API, trigger workflows, query SQLite directly, verify results, and continue fixing.

That’s a full feedback loop. No human required.
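Here is a minimal sketch of the "query SQLite directly, verify results" step. It uses Python's built-in `sqlite3` module instead of the CLI. The `orders` table and its columns are a made-up schema for illustration.

```python
import sqlite3

def verify_order_created(conn: sqlite3.Connection, user_id: int) -> bool:
    """Check the database state the way an agent would after calling the API."""
    row = conn.execute(
        "SELECT COUNT(*) FROM orders WHERE user_id = ? AND status = 'created'",
        (user_id,),
    ).fetchone()
    return row[0] > 0

# In-memory database standing in for the app's SQLite file.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, status TEXT)")
conn.execute("INSERT INTO orders (user_id, status) VALUES (?, ?)", (42, "created"))
conn.commit()

print(verify_order_created(conn, 42))  # True
print(verify_order_created(conn, 7))   # False
```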

Playwright: Giving the Agent Eyes

For UI testing, Playwright is the key.

You can run it headless (fully autonomous) or with UI visible (my preferred mode).

Yes, I could remove myself completely. But I don’t.

Right now I sit outside the loop as an observer.

Not a tester. Not a debugger. Just watching.

If something goes completely off the rails, I interrupt.

Otherwise, I let the loop run.

This is an important transition:

From participant → to observer.

The Windows Forms Problem

Now comes the tricky part: Windows Forms.

Console apps are easy. Web apps have Playwright.

But desktop UI automation is messy.

Possible directions I’m exploring:

- UI Automation APIs

- WinAppDriver

- Logging + state inspection hybrid approach

- Screenshot-based verification

- Accessibility tree inspection

The goal remains the same: the agent must be able to verify what happened without me.

Once that happens, the loop closes.

What I’ve Learned So Far

1) Logs Are Everything

If the agent cannot read what happened, it cannot improve. Structured logs > pretty logs. Always.
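Structured logs win because they are trivial to filter. A sketch, assuming one JSON object per line; the field names (`level`, `message`, `orderId`) are hypothetical and depend on how the logger's formatter is configured.

```python
import json

# Hypothetical log lines, one JSON object per line, plus one malformed line.
LOG = """\
{"time": "2026-02-11T10:00:00Z", "level": "Information", "message": "Order created", "orderId": 17}
{"time": "2026-02-11T10:00:01Z", "level": "Error", "message": "Payment failed", "orderId": 17}
not json at all
"""

def errors_in(log_text: str) -> list[dict]:
    """Return parsed events whose level is Error or Fatal, skipping malformed lines."""
    events = []
    for line in log_text.splitlines():
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue
        if isinstance(event, dict) and event.get("level") in {"Error", "Fatal"}:
            events.append(event)
    return events

for event in errors_in(LOG):
    print(event["message"], event["orderId"])  # Payment failed 17
```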

2) SQLite Is the Perfect Loop Database

Not for production. For iteration. The ability to query state instantly from CLI makes autonomous debugging possible.

3) Agents Need Observability, Not Prompts

Most people focus on prompt engineering. I focus on observability engineering.

Give the agent visibility into logs, state, outputs, errors, and the database. Then iteration becomes natural.

4) Humans Should Validate Outcomes — Not Steps

The human should only answer: “Is this what I asked for?” Checking every intermediate step is what the agent is for.

My Current Loop Architecture (Simplified)

Specification → Agent writes code → Agent runs app → Agent tests → Agent reads logs/db → Agent fixes → Repeat → Human validates outcome

If the loop works, progress becomes exponential.

If the loop is broken, everything slows down.
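Reduced to its skeleton, the loop is a small driver function. This is only a sketch: `run_tests` and `apply_fix` are stand-ins for whatever the agent actually does, and the iteration cap is my own assumption to keep a stuck loop from running forever.

```python
from typing import Callable

def closed_loop(run_tests: Callable[[], list[str]],
                apply_fix: Callable[[list[str]], None],
                max_iterations: int = 5) -> bool:
    """Repeat test -> observe -> fix until the tests pass or the budget runs out."""
    for _ in range(max_iterations):
        failures = run_tests()
        if not failures:
            return True          # ready for the human to validate the outcome
        apply_fix(failures)
    return not run_tests()       # final check after the last fix

# Stand-ins for the agent: each "fix" removes one known bug.
bugs = ["null reference", "wrong total"]
passed = closed_loop(run_tests=lambda: list(bugs),
                     apply_fix=lambda failures: bugs.remove(failures[0]))
print(passed, bugs)  # True []
```

The human only shows up after the function returns.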

My Question to You

This is still evolving. I’m refining the process daily, and I’m convinced this is how development will work from now on:

agents running closed feedback loops with humans validating outcomes at the end.

So I’m curious:

- What tooling are you using?

- How are you creating feedback loops?

- Are you still inside the loop — or already outside watching it run?

Because once you close the loop…

you don’t want to go back.

by Joche Ojeda | Feb 9, 2026 | A.I

I wrote my previous article about closing the loop for agentic development earlier this week, although the ideas themselves have been evolving for several days. This new piece is simply a progress report: how the approach is working in practice, what I’ve built so far, and what I’m learning as I push deeper into this workflow.

Short version: it’s working.

Long version: it’s working really well — but it’s also incredibly token-hungry.

Let’s talk about it.

A Familiar Benchmark: The Activity Stream Problem

Whenever I want to test a new development approach, I go back to a problem I know extremely well: building an activity stream.

An activity stream is basically the engine of a social network — posts, reactions, notifications, timelines, relationships. It touches everything:

- Backend logic

- UI behavior

- Realtime updates

- State management

- Edge cases everywhere

I’ve implemented this many times before, so I know exactly how it should behave. That makes it the perfect benchmark for agentic development. If the AI handles this correctly, I know the workflow is solid.

This time, I used it to test the closing-the-loop concept.

The Current Setup

So far, I’ve built two main pieces:

- An MCP-based project

- A Blazor application implementing the activity stream

But the real experiment isn’t the app itself — it’s the workflow.

Instead of manually testing and debugging, I fully committed to this idea:

The AI writes, tests, observes, corrects, and repeats — without me acting as the middleman.

So I told Copilot very clearly:

- Don’t ask me to test anything

- You run the tests

- You fix the issues

- You verify the results

To make that possible, I wired everything together:

- Playwright MCP for automated UI testing

- Serilog logging to the file system

- Screenshot capture of the UI during tests

- Instructions to analyze logs and fix issues automatically

So the loop becomes:

write → test → observe → fix → retest

And honestly, I love it.

My Surface Is Working. I’m Not Touching It.

Here’s the funny part.

I’m writing this article on my MacBook Air.

Why?

Because my main development machine — a Microsoft Surface laptop — is currently busy running the entire loop by itself.

I told Copilot to open the browser and actually execute the tests visually. So it’s navigating the UI, filling forms, clicking buttons, taking screenshots… all by itself.

And I don’t want to touch that machine while it’s working.

It feels like watching a robot doing your job. You don’t interrupt it mid-task. You just observe.

So I switched computers and thought: “Okay, this is a perfect moment to write about what’s happening.”

That alone says a lot about where this workflow is heading.

Watching the Loop Close

Once everything was wired together, I let it run.

The agent:

- Writes code

- Runs Playwright tests

- Reads logs

- Reviews screenshots

- Detects issues

- Fixes them

- Runs again

Seeing the system self-correct without constant intervention is incredibly satisfying.

In traditional AI-assisted development, you often end up exhausted:

- The AI gets stuck

- You explain the issue

- It half-fixes it

- You explain again

- Something else breaks

You become the translator and debugger for the model.

With a self-correcting loop, that burden drops dramatically. The system can fail, observe, and recover on its own.

That changes everything.

The Token Problem (Yes, It’s Real)

There is one downside: this workflow is extremely token hungry.

Last month I used roughly 700% more tokens than usual. This month, and we’re only around February 8–9, I’ve already used about 200% of my normal limits.

Why so expensive?

Because the loop never sleeps:

- Test execution

- Log analysis

- Screenshot interpretation

- Code rewriting

- Retesting

- Iteration

Every cycle consumes tokens. And when the system is autonomous, those cycles happen constantly.

Model Choice Matters More Than You Think

Another important detail: not all models count equally against your limits inside Copilot.

Some models count as:

- 3× usage

- 1× usage

- 0.33× usage

- 0× usage

For example:

- Some Anthropic models are extremely good for testing and reasoning

- But they can count as 3× token usage

- Others are cheaper but weaker

- Some models (like GPT-4 Mini or GPT-4o in certain Copilot tiers) count as 0× toward limits
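Here is a quick sketch of how those multipliers add up. It assumes usage is simply requests times multiplier, and the model names are placeholders, not real identifiers.

```python
# Multipliers as listed above; the model names are placeholders.
MULTIPLIERS = {
    "heavy-model": 3.0,
    "standard-model": 1.0,
    "light-model": 0.33,
    "included-model": 0.0,
}

def weighted_usage(requests: dict[str, int]) -> float:
    """Sum requests per model, each scaled by that model's multiplier."""
    total = sum(MULTIPLIERS[model] * count for model, count in requests.items())
    return round(total, 2)

print(weighted_usage({"heavy-model": 100}))                         # 300.0
print(weighted_usage({"light-model": 100, "included-model": 500}))  # 33.0
```

The same 100 requests cost roughly nine times more on the heavy model than on the light one.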

At some point I actually hit my token limits and Copilot basically said: “Come back later.”

It should reset in about 24 hours, but in the meantime I switched to the 0× token models just to keep the loop running.

The difference in quality is noticeable.

The heavier models are much better at:

- Debugging

- Understanding logs

- Self-correcting

- Complex reasoning

The lighter or free models can still work, but they struggle more with autonomous correction.

So model selection isn’t just about intelligence — it’s about token economics.

Why It’s Still Worth It

Yes, this approach consumes more tokens.

But compare that to the alternative:

- Sitting there manually testing

- Explaining the same bug five times

- Watching the AI fail repeatedly

- Losing mental energy on trivial fixes

That’s expensive too — just not measured in tokens.

I would rather spend tokens than spend mental fatigue.

And realistically:

- Models get cheaper every month

- Tooling improves weekly

- Context handling improves

- Local and hybrid options are evolving

What feels expensive today might feel trivial very soon.

MCP + Blazor: A Perfect Testing Ground

So far, this workflow works especially well for:

- MCP-based systems

- Blazor applications

- Known benchmark problems

Using a familiar problem like an activity stream lets me clearly measure progress. If the agent can build and maintain something complex that I already understand deeply, that’s a strong signal.

Right now, the signal is positive.

The loop is closing. The system is self-correcting. And it’s actually usable.

What Comes Next

This article is just a status update.

The next one will go deeper into something very important:

How to design self-correcting mechanisms for agentic development.

Because once you see an agent test, observe, and fix itself, you don’t want to go back to manual babysitting.

For now, though:

The idea is working. The workflow feels right. It’s token hungry. But absolutely worth it.

Closing the loop isn’t theory anymore — it’s becoming a real development style.