by Joche Ojeda | Dec 31, 2023 | A.I

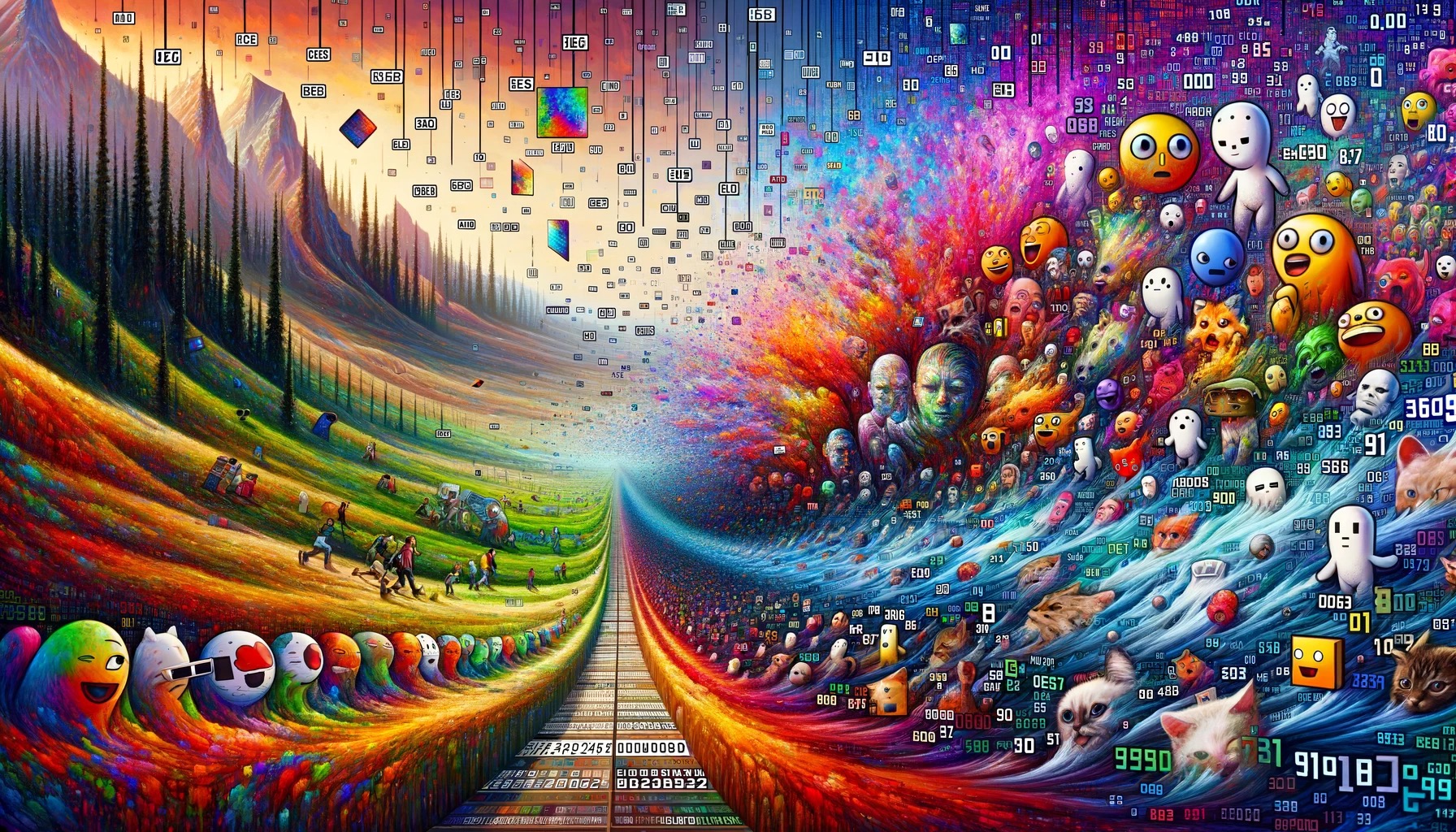

Unpacking Memes and AI Embeddings: An Intriguing Intersection

The Essence of Embeddings in AI

In the realm of artificial intelligence, the concept of an embedding is pivotal. An embedding converts complex, high-dimensional data such as text, images, or sounds into vectors in a lower-dimensional space, and this transformation preserves the data's most relevant features.

Imagine a vast library of books. An embedding is like a skilled librarian who distills each book into a single, insightful summary. These compact summaries let machines process and compare vast swathes of data more efficiently and meaningfully.

The Meme: A Cultural Embedding

A meme is a cultural artifact, often an image with text, that encapsulates a collective experience, emotion, or idea in a highly condensed format. It’s a snippet of culture, distilled down to its most essential and relatable elements.

The Intersection: AI Embeddings and Memes

The connection between AI embeddings and memes lies in their shared reliance on abstraction and distillation. Both serve as compact representations of more complex entities. An AI embedding abstracts media into a form that captures its most relevant features, just as a meme condenses an experience or idea into a simple format.

Implications and Insights

This intersection has fascinating implications. For instance, when AI learns to understand and generate memes, it taps into the cultural and emotional undercurrents that memes represent. This requires a nuanced understanding of human experiences and societal contexts, which remains a significant challenge for AI.

Moreover, the study of memes could inform AI research and may help lead to more adaptable and resilient AI models.

Conclusion

In conclusion, while AI embeddings and memes operate in different domains, they share a fundamental similarity in their approach to abstraction. This intersection opens up possibilities for both AI development and our understanding of cultural phenomena.

by Joche Ojeda | Dec 18, 2023 | A.I

ONNX: Revolutionizing Interoperability in Machine Learning

The field of machine learning (ML) and artificial intelligence (AI) has witnessed a groundbreaking innovation in the form of ONNX (Open Neural Network Exchange). This open-source model format is redefining the norms of model sharing and interoperability across ML frameworks. In this article, we explore ONNX models, the history of the ONNX format, and the role of ONNX Runtime in the ONNX ecosystem.

What is an ONNX Model?

ONNX is an open, universal format for representing machine learning models. It bridges the gap between ML frameworks, allowing a model built in one framework to be exported and used on diverse platforms.

The Genesis and Evolution of ONNX Format

ONNX emerged from a collaboration between Microsoft and Facebook in 2017, with the aim of overcoming fragmentation in the ML world. Support from major frameworks, such as PyTorch's built-in ONNX exporter and converters like tf2onnx for TensorFlow, was a key milestone in its evolution.

ONNX Runtime: The Engine Behind ONNX Models

ONNX Runtime is a performance-focused engine for running ONNX models, optimized for a variety of platforms and hardware configurations, from cloud-based servers to edge devices.

Where Does ONNX Runtime Run?

ONNX Runtime is cross-platform, running on operating systems such as Windows, Linux, and macOS, and is adaptable to mobile platforms and IoT devices.

ONNX Today

ONNX stands as a vital tool for developers and researchers, supported by an active open-source community and embodying the collaborative spirit of the AI and ML community.

ONNX and its runtime have reshaped the ML landscape, promoting an environment of enhanced collaboration and accessibility. As we continue to explore new frontiers in AI, ONNX’s role in simplifying model deployment and ensuring compatibility across platforms will be instrumental in advancing the field.

by Joche Ojeda | Dec 17, 2023 | A.I

In the dynamic world of artificial intelligence (AI) and machine learning (ML), diverse tools and models such as the ML.NET framework, BERT, and GPT each play a pivotal role in shaping technological advancements. This article embarks on an exploratory journey to compare and contrast these three distinct approaches. Our goal is to provide clarity and insight into their unique functionality, technological underpinnings, and practical applications, for AI practitioners, technology enthusiasts, and the curious alike.

1. Models Created Using ML.NET:

- Purpose and Use Case: ML.NET is a versatile framework that lets .NET developers build custom models for a wide array of ML tasks.

- Technology: Supports a range of algorithms, from conventional ML techniques to deep learning models.

- Customization and Flexibility: Offers extensive customization in data processing and algorithm selection.

- Scope: Suited for varied ML tasks within .NET-centric environments.

2. BERT (Bidirectional Encoder Representations from Transformers):

- Purpose and Use Case: Designed for language understanding; it has had a major impact on search and other context-sensitive language processing.

- Technology: Uses the Transformer encoder to interpret each word in the context of the words on both its left and its right, which is what “bidirectional” refers to.

- Pre-trained Model: Pre-trained extensively on large text corpora, then fine-tuned for specialized NLP tasks.

- Scope: Used for tasks requiring deep language comprehension and context analysis.

3. GPT (Generative Pre-trained Transformer) models, such as those behind ChatGPT:

- Purpose and Use Case: Known for advanced text generation, adept at producing coherent and context-aware text.

- Technology: Uses the Transformer architecture, trained to predict the next word (more precisely, the next token) given all of the preceding text.

- Pre-trained Model: Trained on vast text datasets, adaptable for broad and specialized tasks.

- Scope: Ideal for text generation and conversational AI, simulating human-like interactions.
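GPT's next-word prediction drives an autoregressive loop: the model scores candidate next tokens, one is chosen and appended, and the longer sequence is fed back in. The sketch below shows only that loop, with greedy selection. The `TOY_NEXT_TOKEN_SCORES` table and the helper names are hypothetical; a hand-written bigram table that looks only at the last token stands in for the Transformer, which in a real GPT model scores tokens using the entire prefix.

```python
from typing import Dict, List

# Hypothetical toy "model": scores for the next token, keyed by the previous token.
# A real GPT computes these scores with a Transformer over the whole prefix.
TOY_NEXT_TOKEN_SCORES: Dict[str, Dict[str, float]] = {
    "<start>": {"the": 0.9, "a": 0.1},
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "sat": {"down": 0.8, "<end>": 0.2},
    "down": {"<end>": 1.0},
}


def next_token_scores(prefix: List[str]) -> Dict[str, float]:
    """Stand-in for a model call: scores for the next token given the prefix."""
    return TOY_NEXT_TOKEN_SCORES.get(prefix[-1], {"<end>": 1.0})


def generate_greedy(max_new_tokens: int = 10) -> List[str]:
    """Autoregressive loop: predict one token, append it, feed the longer prefix back in."""
    tokens = ["<start>"]
    for _ in range(max_new_tokens):
        scores = next_token_scores(tokens)
        best = max(scores, key=scores.get)  # greedy: take the highest-scoring token
        if best == "<end>":
            break
        tokens.append(best)
    return tokens[1:]  # drop the start marker


print(" ".join(generate_greedy()))  # the cat sat down
```

Production systems often sample from the scores (for example with a temperature or top-k/top-p filtering) instead of always taking the maximum, trading determinism for variety.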

Conclusion:

Each of these technologies (ML.NET, BERT, and GPT) offers unique strengths. ML.NET brings machine learning to .NET applications, BERT transforms natural language processing with a deep understanding of language context, and GPT models lead in text generation, producing human-like text. The choice among them depends on specific project requirements, be it custom ML solutions, advanced language processing, or seamless text generation. Understanding their distinctions and applications is crucial for building innovative solutions in AI and ML.

by Joche Ojeda | Dec 17, 2023 | A.I

Machine Learning Model Formats and File Extensions

The realm of machine learning (ML) and artificial intelligence (AI) is marked by an array of model formats, each serving distinct purposes and ecosystems. The choice of a model format is a pivotal decision that can influence the development, deployment, and sharing of ML models. In this article, we aim to clarify the various model formats prevalent in the industry, highlighting their key characteristics, use cases, and associated file extensions, from ML.NET’s native binary format, known for its seamless integration with .NET applications, to the versatile and framework-agnostic ONNX format.

As we progress, we’ll explore each format in depth, providing you with a clear understanding of when and why to use each one. Whether you’re a seasoned data scientist, a budding ML developer, or an AI enthusiast, this guide will enhance your knowledge and proficiency in handling various ML model formats. Let’s embark on this informative journey together!

Model Formats

- ML.NET’s Native Binary Format:
  - Used By: ML.NET framework.
  - Characteristics: This format encapsulates the machine learning model and its entire data preprocessing pipeline, tailored for .NET applications.
  - File Extension: .zip
  - Example Filename: model.zip

- ONNX (Open Neural Network Exchange):
  - Used By: Various platforms including ML.NET, PyTorch, TensorFlow.
  - Characteristics: ONNX provides a framework-agnostic, cross-platform representation of machine learning models.
  - File Extension: .onnx
  - Example Filename: model.onnx

- HDF5 (Hierarchical Data Format version 5):
  - Used By: Keras, TensorFlow.
  - Characteristics: A general-purpose format designed for storing large amounts of numerical data; Keras uses it to store a model’s architecture, weights, and parameters.
  - File Extension: .h5, .hdf5
  - Example Filename: model.h5

- PMML (Predictive Model Markup Language):
  - Used By: Platforms using R and Python.
  - Characteristics: An XML-based format for representing data mining and statistical models.
  - File Extension: .xml, .pmml
  - Example Filename: model.pmml

- Pickle:
  - Used By: Python, scikit-learn.
  - Characteristics: Python-specific format for serializing and deserializing objects.
  - File Extension: .pkl, .pickle
  - Example Filename: model.pkl

- Protobuf (Protocol Buffers):
  - Used By: TensorFlow and other frameworks.
  - Characteristics: A language-neutral binary serialization format for structured data.
  - File Extension: .pb, .protobuf
  - Example Filename: model.pb

- JSON (JavaScript Object Notation):
  - Used By: Various tools and platforms for storing configurations and parameters.
  - Characteristics: Widely supported and readable format.
  - File Extension: .json
  - Example Filename: config.json
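Several of these formats share generic extensions, so a file name is only a hint about what a file contains. As a sketch, the list above can be turned into a lookup helper; `guess_model_format` and `EXTENSION_TO_FORMAT` are hypothetical names, and a `.zip`, `.xml`, or `.json` file could just as well hold something other than a model.

```python
from pathlib import Path
from typing import Optional

# Extensions from the list above. Generic ones (.zip, .xml, .json) are only hints.
EXTENSION_TO_FORMAT = {
    ".zip": "ML.NET native binary format",
    ".onnx": "ONNX",
    ".h5": "HDF5",
    ".hdf5": "HDF5",
    ".xml": "PMML",
    ".pmml": "PMML",
    ".pkl": "Pickle",
    ".pickle": "Pickle",
    ".pb": "Protobuf",
    ".protobuf": "Protobuf",
    ".json": "JSON",
}


def guess_model_format(filename: str) -> Optional[str]:
    """Return the format suggested by the file extension, or None if it is unknown."""
    return EXTENSION_TO_FORMAT.get(Path(filename).suffix.lower())


for name in ["model.zip", "model.onnx", "MODEL.H5", "model.pkl", "weights.bin"]:
    print(name, "->", guess_model_format(name))
```

Inspecting the file contents (for example, checking that a `.zip` actually opens as an archive) is a more reliable check than the extension alone.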

In conclusion, each model format has its unique strengths and use cases, ranging from ML.NET’s binary format, ideal for .NET applications, to the cross-platform ONNX format and the HDF5 format widely used in deep learning frameworks. The choice of format often hinges on the project’s specific needs, such as performance, interoperability, and the nature of the AI and ML tasks at hand.
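The interoperability trade-off between a Python-specific format and a widely supported one shows up quickly in practice. In the sketch below, the `state` dictionary is a hypothetical stand-in for model parameters (libraries such as scikit-learn commonly pickle entire estimator objects instead). Pickle restores Python types exactly, while JSON keeps only what it can represent portably. Python's documentation also warns that unpickling untrusted data can execute arbitrary code, so only load pickle files from sources you trust.

```python
import json
import pickle

# Hypothetical model state mixing plain numbers with Python-specific types.
state = {
    "weights": (0.25, -1.5, 3.0),  # a tuple
    "bias": 0.5,
    "classes": {"cat", "dog"},      # a set
}

# Pickle: Python-specific, but the tuple and the set survive unchanged.
restored = pickle.loads(pickle.dumps(state))
print(restored == state)  # True

# JSON: portable across languages, but it has no tuple or set types.
try:
    json.dumps(state)
except TypeError as err:
    print("JSON cannot store a set:", err)

# Convert to JSON-friendly types first; the tuple comes back as a list.
portable = dict(state, classes=sorted(state["classes"]))
round_tripped = json.loads(json.dumps(portable))
print(round_tripped["weights"])  # [0.25, -1.5, 3.0]
```
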

by Joche Ojeda | Dec 17, 2023 | A.I

In the world of machine learning (ML) and artificial intelligence (AI), “embeddings” refer to dense, low-dimensional, yet informative representations of high-dimensional data.

These representations are used to capture the essence of the data in a form that is more manageable for various ML tasks. Here’s a more detailed explanation:

What are Embeddings?

Definition: Embeddings are a way to transform high-dimensional data (like text, images, or sound) into a lower-dimensional space. This transformation aims to preserve relevant properties of the original data, such as semantic or contextual relationships.
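One way to see the "lower-dimensional space" idea: a word can be written as a one-hot vector with one slot per vocabulary entry, and multiplying that vector by an embedding matrix selects a single dense row. The sketch below assumes a hypothetical four-word vocabulary and a hand-written 4 × 2 table; in a trained model the values are learned, and vocabularies of tens of thousands of words are often mapped to a few hundred dimensions.

```python
from typing import Dict, List

# Hypothetical 4-word vocabulary and a hand-written 4 x 2 embedding table.
# In a trained model these values are learned rather than chosen by hand.
VOCAB: List[str] = ["cat", "dog", "car", "truck"]
EMBEDDINGS: List[List[float]] = [
    [0.9, 0.1],  # cat
    [0.8, 0.2],  # dog
    [0.1, 0.9],  # car
    [0.2, 0.8],  # truck
]
INDEX: Dict[str, int] = {word: i for i, word in enumerate(VOCAB)}


def one_hot(word: str) -> List[float]:
    """High-dimensional, sparse form: one slot per vocabulary word."""
    vec = [0.0] * len(VOCAB)
    vec[INDEX[word]] = 1.0
    return vec


def embed_via_matmul(word: str) -> List[float]:
    """Compute one_hot(word) times EMBEDDINGS as an explicit vector-matrix product."""
    x = one_hot(word)
    dim = len(EMBEDDINGS[0])
    return [sum(x[i] * EMBEDDINGS[i][j] for i in range(len(VOCAB))) for j in range(dim)]


def embed_via_lookup(word: str) -> List[float]:
    """Same result as embed_via_matmul, obtained by reading one row of the table."""
    return EMBEDDINGS[INDEX[word]]


print(one_hot("dog"))           # [0.0, 1.0, 0.0, 0.0]
print(embed_via_lookup("dog"))  # [0.8, 0.2]
```

Because the one-hot product only ever selects one row, embedding layers typically skip the multiplication and index the table directly, which is what `embed_via_lookup` does.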

Purpose: They are especially useful in natural language processing (NLP), where words, sentences, or even entire documents are converted into vectors in a continuous vector space. This enables ML models to understand and process textual data more effectively, capturing nuances like similarity, context, and even analogies.
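Similarity between embeddings is commonly measured with cosine similarity, the cosine of the angle between two vectors. A minimal sketch, assuming small hand-picked 3-dimensional vectors (real word embeddings are learned and much larger); `cosine_similarity` and `most_similar` are illustrative names, not a specific library's API.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 = same direction, 0.0 = orthogonal."""
    if len(a) != len(b):
        # Embeddings of different sizes (e.g. from different models) are not comparable.
        raise ValueError(f"dimension mismatch: {len(a)} vs {len(b)}")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query: str, vectors: Dict[str, List[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Rank the other words by cosine similarity to the query word."""
    q = vectors[query]
    scored = [(w, cosine_similarity(q, v)) for w, v in vectors.items() if w != query]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical 3-d word vectors, chosen by hand for illustration only.
toy_vectors = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
    "pear": [0.2, 0.1, 0.8],
}

print(most_similar("king", toy_vectors))
```
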

Creating Embeddings

Word Embeddings: For text, embeddings are typically created using models like Word2Vec, GloVe, or FastText. These models are trained on large text corpora and learn to represent words as vectors in a way that captures their semantic meaning.

Image and Audio Embeddings: For images and audio, embeddings are usually generated using deep learning models like convolutional neural networks (CNNs). These networks learn to encode the visual or auditory features of the input into a compact vector.
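A common way to get an image embedding from a CNN is to take the feature maps from its last convolutional layer and apply global average pooling, which averages each channel over all spatial positions and leaves one number per channel. ResNet-50, for example, ends with 2048 channels, a typical source of 2048-dimensional image embeddings. The sketch below shows only the pooling step on hypothetical toy activations; the convolutional layers that produce the feature maps are not shown.

```python
from typing import List

# Feature maps laid out as channels x height x width.
FeatureMaps = List[List[List[float]]]


def global_average_pool(feature_maps: FeatureMaps) -> List[float]:
    """Average each channel over all spatial positions, giving one value per channel."""
    embedding = []
    for channel in feature_maps:
        values = [v for row in channel for v in row]
        embedding.append(sum(values) / len(values))
    return embedding


# Hypothetical activations: 3 channels of 2x2 values -> a 3-dimensional embedding.
toy_maps = [
    [[1.0, 3.0], [5.0, 7.0]],
    [[0.0, 0.0], [0.0, 4.0]],
    [[2.0, 2.0], [2.0, 2.0]],
]
print(global_average_pool(toy_maps))  # [4.0, 1.0, 2.0]
```
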

Training Process: Training an embedding model involves feeding it a large amount of data so that it learns a dense representation of the inputs. In contrastive or metric-learning setups, the model adjusts its parameters to minimize the distance between embeddings of similar items and maximize the distance between embeddings of dissimilar items; other methods, such as Word2Vec, instead learn embeddings by predicting words from their surrounding context.
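The "pull similar items together, push dissimilar items apart" objective can be written as a loss function. The sketch below uses one common formulation, the margin-based contrastive loss for a single pair of embeddings; the function names and the `margin` value are illustrative assumptions, and the gradient-descent step that would update the model's parameters is not shown.

```python
import math
from typing import List


def euclidean_distance(a: List[float], b: List[float]) -> float:
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def contrastive_loss(a: List[float], b: List[float], similar: bool, margin: float = 1.0) -> float:
    """Margin-based contrastive loss for one pair of embeddings.

    similar pair:    d^2                   (penalizes any distance, pulling them together)
    dissimilar pair: max(0, margin - d)^2  (penalizes only pairs closer than `margin`)
    """
    d = euclidean_distance(a, b)
    if similar:
        return d ** 2
    return max(0.0, margin - d) ** 2


anchor = [0.0, 0.0]
other = [3.0, 4.0]  # distance 5.0 from anchor
print(contrastive_loss(anchor, other, similar=True, margin=6.0))   # 25.0
print(contrastive_loss(anchor, other, similar=False, margin=6.0))  # 1.0
print(contrastive_loss(anchor, other, similar=False, margin=2.0))  # 0.0
```

A similar pair five units apart is penalized heavily, while a dissimilar pair only contributes loss until it is pushed beyond the margin.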

Differences in Embeddings Across Models

Dimensionality and Structure: Different models produce embeddings of different sizes and structures. For instance, Word2Vec might produce 300-dimensional vectors, while a CNN for image processing might output a 2048-dimensional vector.

Captured Information: The information captured in embeddings varies based on the model and training data. For example, text embeddings might capture semantic meaning, while image embeddings capture visual features.

Model-Specific Characteristics: Each embedding model has its unique way of understanding and encoding information. For instance, BERT (a language model) generates context-dependent embeddings, meaning the same word can have different embeddings based on its context in a sentence.
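BERT's context dependence comes from self-attention inside its Transformer layers. The sketch below strips that idea to its core and is an illustration of the mechanism, not BERT itself: it has no learned query/key/value projections, a single head and layer, and hand-picked 2-dimensional vectors (the `STATIC` table is hypothetical). Each output vector is a weighted average of all token vectors in the sentence, so the same word ends up with different vectors in different sentences.

```python
import math
from typing import Dict, List

# Hypothetical static (context-free) 2-d vectors, like a Word2Vec-style lookup table.
STATIC: Dict[str, List[float]] = {
    "bank": [1.0, 1.0],
    "river": [0.0, 2.0],
    "money": [2.0, 0.0],
}


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def contextualize(tokens: List[str]) -> List[List[float]]:
    """Simplified self-attention without learned weights: each output is a
    softmax-weighted average of every token's vector, with weights from
    scaled dot-product similarity."""
    vecs = [STATIC[t] for t in tokens]
    dim = len(vecs[0])
    outputs = []
    for query in vecs:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in vecs]
        weights = softmax(scores)
        outputs.append([sum(w * v[d] for w, v in zip(weights, vecs)) for d in range(dim)])
    return outputs


bank_near_river = contextualize(["river", "bank"])[1]
bank_near_money = contextualize(["money", "bank"])[1]
print(STATIC["bank"])   # [1.0, 1.0], the same in every sentence
print(bank_near_river)  # [0.5, 1.5], pulled toward "river"
print(bank_near_money)  # [1.5, 0.5], pulled toward "money"
```
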

Transfer Learning and Fine-tuning: Pre-trained embeddings can be used in various tasks as a starting point (transfer learning). These embeddings can also be fine-tuned on specific tasks to better suit the needs of a particular application.

Conclusion

In summary, embeddings are a fundamental concept in ML and AI, enabling models to work efficiently with complex and high-dimensional data. The specific characteristics of embeddings vary based on the model used, the data it was trained on, and the task at hand. Understanding and creating embeddings is a crucial skill in AI, as it directly impacts the performance and capabilities of the models.