The Mirage of a Memory Leak (or: why “it must be the framework” is usually wrong)

There is a familiar moment in every developer’s life.

Memory usage keeps creeping up.

The process never really goes down.

After hours—or days—the application feels heavier, slower, tired.

And the conclusion arrives almost automatically:

“The framework has a memory leak.”

“That component library is broken.”

“The GC isn’t doing its job.”

It’s a comforting explanation.

It’s also usually wrong.

Memory Leaks vs. Memory Retention

In managed runtimes like .NET, true memory leaks are rare.

The garbage collector is extremely good at reclaiming memory.

If an object is unreachable, it is eligible for collection, and its memory is reclaimed the next time the GC collects its generation.

What most developers call a “memory leak” is actually memory retention:

- Objects are still referenced

- So they stay alive

- Forever

From the GC’s point of view, nothing is wrong.

From your point of view, RAM usage keeps climbing.
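The difference between the two cases can be observed with a WeakReference. The following is a minimal sketch, not a benchmark: RetentionDemo, Allocate, and the static _anchor field are hypothetical names introduced only for illustration.

```csharp
using System;
using System.Runtime.CompilerServices;

public static class RetentionDemo
{
    // Hypothetical long-lived anchor: a static field acts as a GC root.
    private static object _anchor = new object();

    // NoInlining keeps the temporary local in its own stack frame,
    // so nothing on the caller's stack still references the array.
    [MethodImpl(MethodImplOptions.NoInlining)]
    private static WeakReference Allocate(bool retain)
    {
        var payload = new byte[1024];
        if (retain)
        {
            _anchor = payload; // now reachable from a static root
        }
        return new WeakReference(payload);
    }

    public static (bool UnreferencedAlive, bool RetainedAlive) Run()
    {
        WeakReference unreferenced = Allocate(retain: false);
        WeakReference retained = Allocate(retain: true);

        // Force a full, blocking collection for the sake of the demo.
        GC.Collect();
        GC.WaitForPendingFinalizers();
        GC.Collect();

        return (unreferenced.IsAlive, retained.IsAlive);
    }
}
```

On current .NET runtimes, Run() typically reports the first array as collected and the second as still alive. The GC treated both the same way; the only difference is that one of them is still referenced.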

Why Frameworks Are the First to Be Blamed

When you open a profiler and look at what’s alive, you often see:

- UI controls

- ORM sessions

- Binding infrastructure

- Framework services

So it’s natural to conclude:

“This thing is leaking.”

But a profiler’s list of live objects doesn’t answer why something is alive.

It only shows that it is alive. The why is in the retention path back to a GC root.

Framework objects are usually not the cause. They are just sitting at the end of a reference chain that starts in your code.

The Classic Culprit: Bad Event Wiring

The most common “mirage leak” is caused by events.

The pattern

- A long-lived publisher (static service, global event hub, application-wide manager)

- A short-lived subscriber (view, view model, controller)

- A subscription that is never removed

That’s it. That’s the leak.

Why it happens

Events are references: subscribing stores a delegate in the publisher, and that delegate points back at the subscriber.

If the publisher lives for the lifetime of the process, anything it references also lives for the lifetime of the process.

Your object doesn’t get garbage collected.

It becomes immortal.
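The reference an event creates can be inspected directly through the delegate's Target property. Here is a small sketch, assuming a hypothetical Publisher and Subscriber pair that stand in for the long-lived and short-lived objects described above:

```csharp
#nullable enable
using System;

// Hypothetical stand-in for a long-lived publisher.
public sealed class Publisher
{
    public event EventHandler? Changed;

    // Returns the objects that the event's delegates currently point at.
    public object?[] SubscriberTargets()
    {
        Delegate[] handlers = Changed?.GetInvocationList() ?? Array.Empty<Delegate>();
        return Array.ConvertAll(handlers, h => h.Target);
    }
}

// Hypothetical stand-in for a short-lived subscriber.
public sealed class Subscriber
{
    public void Attach(Publisher publisher) => publisher.Changed += OnChanged;

    public void Detach(Publisher publisher) => publisher.Changed -= OnChanged;

    private void OnChanged(object? sender, EventArgs e)
    {
        // Placeholder for real work.
    }
}
```

After Attach, SubscriberTargets() contains the subscriber instance itself, which is exactly the edge a profiler shows in a retention path. After Detach, the array is empty and the publisher no longer keeps the subscriber reachable.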

The Immortal Object: When Short-Lived Becomes Eternal

An immortal object is an object that should be short-lived but can never be garbage collected because it is still reachable from a GC root.

Not because of a GC bug.

Not because of a framework leak.

But because our code made it immortal.

Static fields, singletons, global event hubs, timers, and background services act as anchors. Once a short-lived object is attached to one of these, it stops aging.

    GC Root
    └── static / singleton / service
        └── Event, timer, or callback
            └── Delegate or closure
                └── Immortal object
                    └── Large object graph

From the GC’s perspective, everything is valid and reachable.

From your perspective, memory never comes back down.

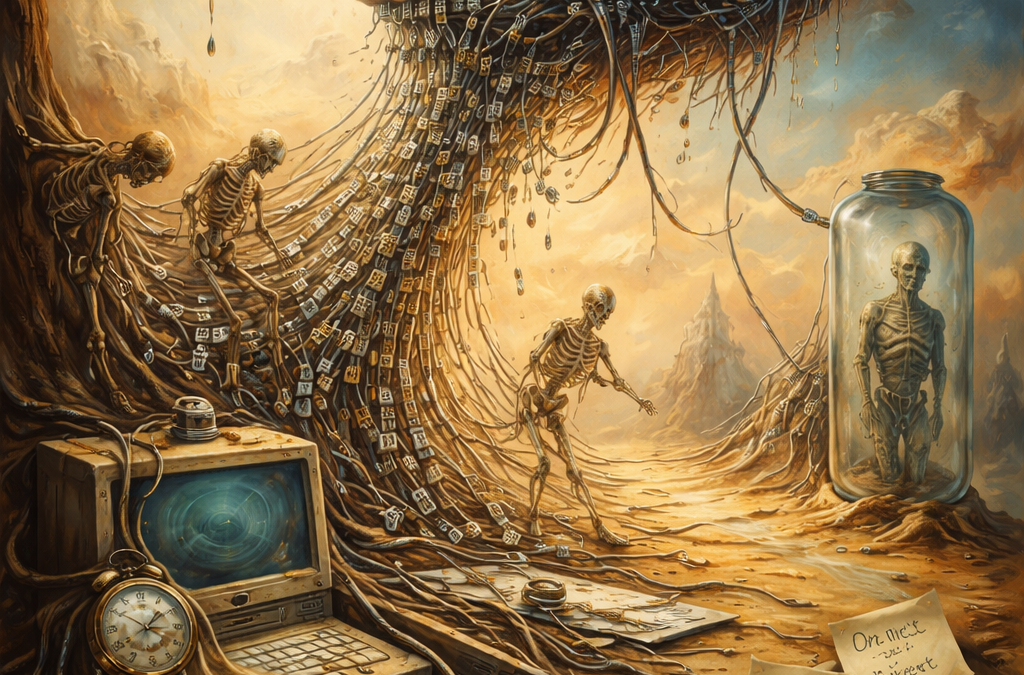

A Retention Dependency Tree That Cannot Be Collected

    GC Root
    └── static GlobalEventHub.Instance
        └── GlobalEventHub.DataUpdated (event)
            └── delegate → CustomerViewModel.OnDataUpdated
                └── CustomerViewModel
                    └── ObjectSpace / DbContext
                        └── IdentityMap / ChangeTracker
                            └── Customer, Order, Invoice, ...

What you see in the memory dump:

- thousands of entities

- ORM internals

- framework objects

What actually caused it:

- one forgotten event unsubscription

The Lambda Trap (Even Worse, Because It Looks Innocent)

The code

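    public CustomerViewModel(GlobalEventHub hub)
    {
        // Anonymous handler: no reference to the delegate is kept,
        // so it can never be passed to -= later.
        hub.DataUpdated += (_, e) =>
        {
            RefreshCustomer(e.CustomerId);
        };
    }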

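This lambda captures this implicitly, because RefreshCustomer is an instance method.

The delegate the compiler generates references the view model (directly, or through a hidden closure object when locals are captured as well), so the hub keeps the instance alive. And because the handler is anonymous, there is nothing to hand to -= afterwards.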
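One way out of the trap is to keep the delegate in a field, so the exact same instance can be removed later. The sketch below is only an illustration: the shapes of GlobalEventHub and DataUpdatedEventArgs are assumptions (the article never defines them), and Detach, SubscriberCount, Publish, and LastRefreshedId are hypothetical helpers added to make the behavior observable.

```csharp
#nullable enable
using System;

// Assumed shape of the event args; only CustomerId appears in the article.
public sealed class DataUpdatedEventArgs : EventArgs
{
    public DataUpdatedEventArgs(int customerId) => CustomerId = customerId;

    public int CustomerId { get; }
}

// Assumed shape of the hub; in the article it is a long-lived singleton.
public sealed class GlobalEventHub
{
    public event EventHandler<DataUpdatedEventArgs>? DataUpdated;

    public int SubscriberCount => DataUpdated?.GetInvocationList().Length ?? 0;

    public void Publish(int customerId) =>
        DataUpdated?.Invoke(this, new DataUpdatedEventArgs(customerId));
}

public sealed class CustomerViewModel
{
    private readonly GlobalEventHub _hub;
    private readonly EventHandler<DataUpdatedEventArgs> _onDataUpdated;

    public int LastRefreshedId { get; private set; }

    public CustomerViewModel(GlobalEventHub hub)
    {
        _hub = hub;
        // Store the delegate: -= only removes a handler equal to the one added.
        _onDataUpdated = (_, e) => RefreshCustomer(e.CustomerId);
        _hub.DataUpdated += _onDataUpdated;
    }

    // Writing `_hub.DataUpdated -= (_, e) => RefreshCustomer(e.CustomerId);`
    // here would remove nothing: a second lambda is a different delegate.
    public void Detach() => _hub.DataUpdated -= _onDataUpdated;

    private void RefreshCustomer(int customerId) => LastRefreshedId = customerId;
}
```

A named method (hub.DataUpdated += OnDataUpdated) works just as well, since a method group for the same instance and method compares equal when it is removed. Either way, the point is that the subscription has a matching removal.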

“But I Disposed the Object!”

Disposal does not save you here.

- Dispose does not remove event handlers unless your implementation does it

- Dispose does not break static references

- Dispose does not stop timers or background work automatically

IDisposable is a promise — not a magic spell.
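Keeping that promise means writing the cleanup yourself. The following is a minimal sketch of a Dispose method that actually cuts the references; AppEvents and DashboardViewModel are hypothetical names, and the one-minute timer interval is arbitrary.

```csharp
#nullable enable
using System;
using System.Threading;

// Hypothetical long-lived publisher, standing in for a static service or hub.
public sealed class AppEvents
{
    public event EventHandler? SettingsChanged;

    public int SubscriberCount => SettingsChanged?.GetInvocationList().Length ?? 0;
}

public sealed class DashboardViewModel : IDisposable
{
    private readonly AppEvents _events;
    private readonly Timer _refreshTimer;
    private bool _disposed;

    public DashboardViewModel(AppEvents events)
    {
        _events = events;
        _events.SettingsChanged += OnSettingsChanged;

        // The callback delegate references this instance for as long as the timer runs.
        _refreshTimer = new Timer(
            _ => Refresh(), null, TimeSpan.FromMinutes(1), TimeSpan.FromMinutes(1));
    }

    public void Dispose()
    {
        if (_disposed)
        {
            return; // safe to call more than once
        }
        _disposed = true;

        _events.SettingsChanged -= OnSettingsChanged; // cut the publisher-to-this edge
        _refreshTimer.Dispose();                      // cut the timer-to-this edge
    }

    private void OnSettingsChanged(object? sender, EventArgs e) => Refresh();

    private void Refresh()
    {
        // Placeholder for real work.
    }
}
```

Dispose only helps if something calls it, so the owner of the view model still has to invoke it when the view closes.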

Leak-Hunting Checklist

Reference Roots

- Are there static fields holding objects?

- Are singletons referencing short-lived instances?

- Is a background service keeping references alive?

Events

- Are subscriptions always paired with unsubscriptions?

- Are lambdas hiding captured references?

Timers & Async

- Are timers stopped and disposed?

- Are async loops cancellable?
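For the async item, a cancellable loop might look like the sketch below. Poller, Completion, and Iterations are hypothetical names; the pattern itself relies only on CancellationTokenSource and Task.Delay.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public sealed class Poller : IAsyncDisposable
{
    private readonly CancellationTokenSource _cts = new CancellationTokenSource();
    private readonly Task _loop;
    private int _iterations;

    public Poller(TimeSpan interval)
    {
        _loop = RunAsync(interval, _cts.Token);
    }

    public int Iterations => Volatile.Read(ref _iterations);

    // Exposed so callers and tests can observe that the loop really ended.
    public Task Completion => _loop;

    private async Task RunAsync(TimeSpan interval, CancellationToken token)
    {
        try
        {
            while (!token.IsCancellationRequested)
            {
                Interlocked.Increment(ref _iterations); // placeholder for real work
                await Task.Delay(interval, token);
            }
        }
        catch (OperationCanceledException)
        {
            // Expected when the token is cancelled during the delay.
        }
    }

    public async ValueTask DisposeAsync()
    {
        _cts.Cancel(); // ask the loop to stop
        await _loop;   // wait until it has actually stopped
        _cts.Dispose();
    }
}
```

Without the token, the loop would run until the process exits, and everything the loop body touches would stay reachable for just as long.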

Profiling

- Follow GC roots, not object counts

- Inspect retention paths

- Ask: who is holding the reference?

Final Thought

Frameworks rarely leak memory.

We do.

Follow the references.

Trust the GC.

Question your wiring.

That’s when the mirage finally disappears.