by Joche Ojeda | Feb 16, 2026 | A.I, Apps, CLI, Github Copilot, SDK

A strange week

This week I was going to the university every day to study Russian.

Learning a new language as an adult is a very humbling experience. One moment you are designing enterprise architectures, and the next moment you are struggling to say:

me siento bien

which in Russian is: я чувствую себя хорошо

So like any developer, I started cheating immediately.

I began using AI for everything:

- ChatGPT to review my exercises

- GitHub Copilot inside VS Code correcting my grammar

- Sometimes both at the same time

It worked surprisingly well. Almost too well.

At some point during the week, while going back and forth between my Russian homework and my development work, I noticed something interesting.

I was using several AI tools, but the one I kept returning to the most — without even thinking about it — was GitHub Copilot inside Visual Studio Code.

Not in the browser. Not in a separate chat window. Right there in my editor.

That’s when something clicked.

Two favorite tools

XAF is my favorite application framework. I’ve built countless systems with it — ERPs, internal tools, experiments, prototypes.

GitHub Copilot has become my favorite AI agent.

I use it constantly:

- writing code

- reviewing ideas

- fixing small mistakes

- even correcting my Russian exercises

And while using Copilot so much inside Visual Studio Code, I started thinking:

What would it feel like to have Copilot inside my own applications?

Not next to them. Inside them.

That idea stayed in my head for a few days until curiosity won.

The innocent experiment

I discovered the GitHub Copilot SDK.

At first glance it looked simple: a .NET library that allows you to embed Copilot into your own applications.

My first thought:

“Nice. This should take 30 minutes.”

Developers should always be suspicious of that sentence.

Because it never takes 30 minutes.

First success (false confidence)

The initial integration was surprisingly easy.

I managed to get a basic response from Copilot inside a test environment. Seeing AI respond from inside my own application felt a bit surreal.

For a moment I thought:

Done. Easy win.

Then I tried to make it actually useful.

That’s when the adventure began.

The rabbit hole

I didn’t want just a chatbot.

I wanted an agent that could actually interact with the application.

Ask questions. Query data. Help create things.

That meant enabling tool calling and proper session handling.

And suddenly everything started failing.

Timeouts. Half responses. Random behavior depending on the model. Sessions hanging for no clear reason.

At first I blamed myself.

Then my integration. Then threading. Then configuration.

Three or four hours later, after trying everything I could think of, I finally discovered the real issue:

It wasn’t my code.

It was the model.

Some models were timing out during tool calls. Others worked perfectly.

The moment I switched models and everything suddenly worked was one of those small but deeply satisfying developer victories.

You know the moment.

You sit back. Look at the screen. And just smile.

The moment it worked

Once everything was connected properly, something changed.

Copilot stopped feeling like a coding assistant and started feeling like an agent living inside the application.

Not in the IDE. Not in a browser tab. Inside the system itself.

That changes the perspective completely.

Instead of building forms and navigation flows, you start thinking:

What if the user could just ask?

Instead of:

- open this screen

- filter this grid

- generate this report

You imagine:

- “Show me what matters.”

- “Create what I need.”

- “Explain this data.”

The interface becomes conversational.

And once you see that working inside your own application, it’s very hard to unsee it.

Why this experiment mattered to me

This wasn’t about building a feature for a client. It wasn’t even about shipping production code.

Most of my work is research and development. Prototypes. Ideas. Experiments.

And this experiment changed the way I see enterprise applications.

For decades we optimized screens, menus, and workflows.

But AI introduces a completely different interaction model.

One where the application is no longer just something you navigate.

It’s something you talk to.

Also… Russian homework

Ironically, this whole experiment started because I was trying to survive my Russian classes.

Using Copilot to correct grammar. Using AI to review exercises. Switching constantly between tools.

Eventually that daily workflow made me curious:

What happens if Copilot is not next to my application, but inside it?

Sometimes innovation doesn’t start with a big strategy.

Sometimes it starts with curiosity and a small personal frustration.

What comes next

This is just the beginning.

Now that AI can live inside applications:

- conversations can become interfaces

- tools can be invoked by language

- workflows can become more flexible

We are moving from:

software you operate

to:

software you collaborate with

And honestly, that’s a very exciting direction.

Final thought

This entire journey started with a simple curiosity while studying Russian and writing code in the same week.

A few hours of experimentation later, Copilot was living inside my favorite framework.

And now I can’t imagine going back.

Note: The next article will go deep into the technical implementation — the architecture, the service layer, tool calling, and how I wired everything into XAF for both Blazor and WinForms.

by Joche Ojeda | Dec 23, 2025 | ADO, ADO.NET, XPO

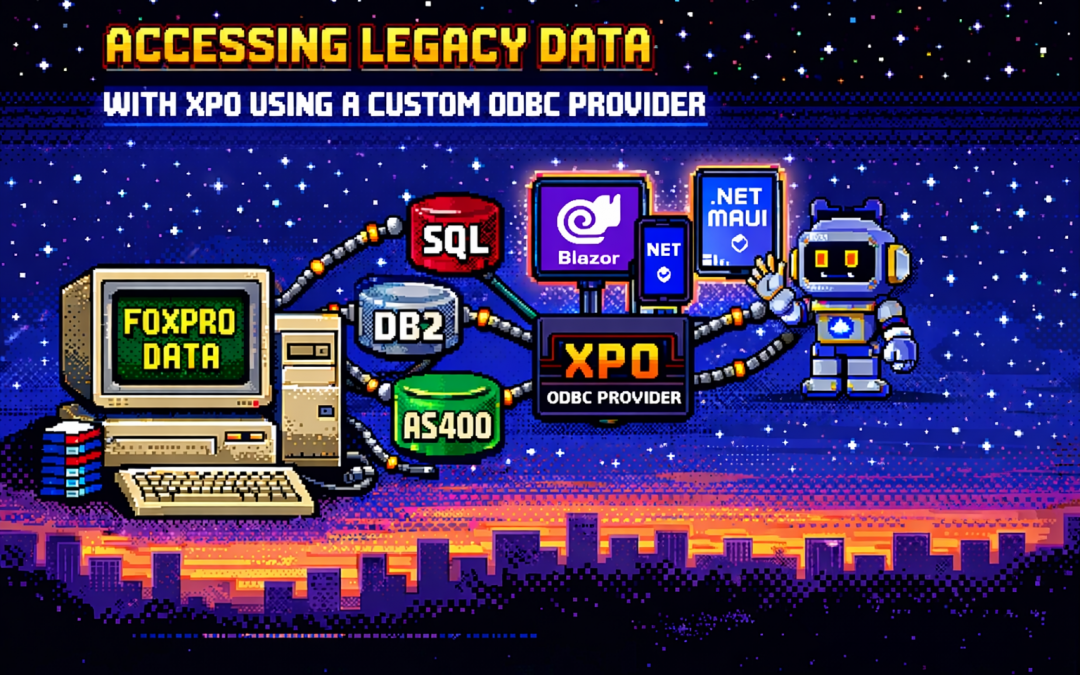

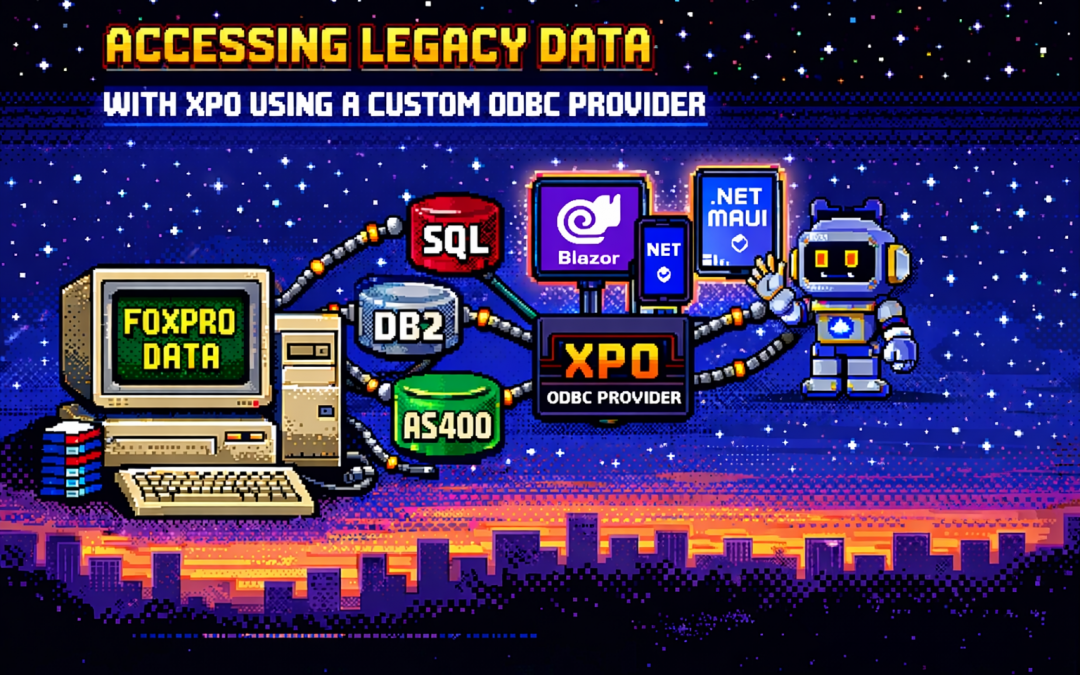

One of the recurring challenges in real-world systems is not building new software — it’s

integrating with software that already exists.

Legacy systems don’t disappear just because newer technologies are available. They survive because they work,

because they hold critical business data, and because replacing them is often risky, expensive, or simply not allowed.

This article explores a practical approach to accessing legacy data using XPO by leveraging ODBC,

not as a universal abstraction, but as a bridge when no modern provider exists.

The Reality of Legacy Systems

Many organizations still rely on systems built on technologies such as:

- FoxPro tables

- AS400 platforms

- DB2-based systems

- Proprietary or vendor-abandoned databases

In these scenarios, it’s common to find that:

- There is no modern .NET provider

- There is no ORM support

- There is an ODBC driver

That last point is crucial. ODBC often remains available long after official SDKs and providers have disappeared.

It becomes the last viable access path to critical data.

Why ORMs Struggle with Legacy Data

Modern ORMs assume a relatively friendly environment: a supported database engine, a known SQL dialect,

a compatible type system, and an actively maintained provider.

Legacy databases rarely meet those assumptions. As a result, teams are often forced to:

- Drop down to raw SQL

- Build ad-hoc data access layers

- Treat legacy data as a second-class citizen

This becomes especially painful in systems that already rely heavily on DevExpress XPO for persistence,

transactions, and domain modeling.

ODBC Is Not Magic — and That’s the Point

ODBC is often misunderstood.

Using ODBC does not mean:

- One provider works for every database

- SQL becomes standardized

- Type systems become compatible

Each ODBC-accessible database still has:

- Its own SQL dialect

- Its own limitations

- Its own data types

- Its own behavioral quirks

ODBC simply gives you a way in. It is a transport mechanism, not a universal language.

What an XPO ODBC Provider Really Is

When you implement an XPO provider on top of ODBC, you are not building a generic solution for all databases.

You are building a targeted adapter for a specific legacy system that happens to be reachable via ODBC.

This matters because ODBC is used here as a pragmatic trick:

- To connect to something you otherwise couldn’t

- To reuse an existing, stable access path

- To avoid rewriting or destabilizing legacy systems

The database still dictates the SQL dialect, supported features, and type system. Your provider must respect those constraints.

Why XPO Makes This Possible

XPO is not just an ORM — it is a provider-based persistence framework.

All SQL-capable XPO providers are built on top of a shared foundation, most notably:

ConnectionProviderSql

https://docs.devexpress.com/CoreLibraries/DevExpress.Xpo.DB.ConnectionProviderSql

This architecture allows you to reuse XPO’s core benefits:

- Object model

- Sessions and units of work

- Transaction handling

- Integration with domain logic

While customizing what legacy systems require:

- SQL generation

- Command execution

- Schema discovery

- Type mapping

Dialects and Type Systems Still Matter

Even when accessed through ODBC:

- FoxPro is not SQL Server

- DB2 is not PostgreSQL

- AS400 is not Oracle

Each system has its own:

- Date and time semantics

- Numeric precision rules

- String handling behavior

- Constraints and limits

An XPO ODBC provider must explicitly map database types, handle dialect-specific SQL,

and avoid assumptions about “standard SQL.” ODBC opens the door — it does not normalize what’s inside.

Real-World Experience: AS400 and DB2 in Production

This approach is not theoretical. Last year, we implemented a custom XPO provider using ODBC for

AS400 and DB2 systems in Mexico, where:

- No viable modern .NET provider existed

- The systems were deeply embedded in business operations

- ODBC was the only stable integration path

By introducing an XPO provider on top of ODBC, we were able to integrate legacy data into a modern .NET architecture,

preserve domain models and transactional behavior, and avoid rewriting or destabilizing existing systems.

The Hidden Advantage: Modern UI and AI Access

Once legacy data is exposed through XPO, something powerful happens: that data becomes immediately available to modern platforms.

- Blazor applications

- .NET MAUI mobile and desktop apps

- Background services

- Integration APIs

- AI agents and assistants

And you get this without rewriting the database, migrating the data, or changing the legacy system.

XPO becomes the adapter that allows decades-old data to participate in modern UI stacks, automated workflows,

and AI-driven experiences.

Why Not Just Use Raw ODBC?

Raw ODBC gives you rows, columns, and primitive values. XPO gives you domain objects, identity tracking,

relationships, transactions, and a consistent persistence model.

The goal is not to modernize the database. The goal is to modernize access to legacy data

so it can safely participate in modern architectures.

Closing Thought

An XPO ODBC provider is not a silver bullet. It will not magically unify SQL dialects, type systems, or database behavior.

But when used intentionally, it becomes a powerful bridge between systems that cannot be changed

and architectures that still need to evolve.

ODBC is the trick that lets you connect.

XPO is what makes that connection usable — everywhere, from Blazor UIs to AI agents.

by Joche Ojeda | Dec 23, 2025 | ADO, ADO.NET, C#

When I started working with computers, one of the tools that shaped my way of thinking as a developer was FoxPro.

At the time, FoxPro felt like a complete universe: database engine, forms, reports, and business logic all integrated into a single environment.

Looking back, FoxPro was effectively an application framework from the past—long before that term became common.

Accessing FoxPro data usually meant choosing between two paths:

- Direct FoxPro access – fast, tightly integrated, and fully aware of FoxPro’s features

- ODBC – a standardized way to access the data from outside the FoxPro ecosystem

This article focuses on that second option.

What Is ODBC?

ODBC (Open Database Connectivity) is a standardized API for accessing databases.

Instead of applications talking directly to a specific database engine, they talk to an ODBC driver,

which translates generic database calls into database-specific commands.

The promise was simple:

One API, many databases.

And for its time, this was revolutionary.

Supported Operating Systems and Use Cases

ODBC is still relevant today and supported across major platforms:

- Windows – native support, mature tooling

- Linux – via unixODBC and vendor drivers

- macOS – supported through driver managers

Typical use cases include:

- Legacy systems that must remain stable

- Reporting and BI tools

- Data migration and ETL pipelines

- Cross-vendor integrations

- Long-lived enterprise systems

ODBC excels where interoperability matters more than elegance.

The Lowest Common Denominator Problem

Although ODBC is a standard, it does not magically unify databases.

Each database has its own:

- SQL dialect

- Data types

- Functions

- Performance characteristics

ODBC standardizes access, not behavior.

You can absolutely open an ODBC connection and still:

- Call native database functions

- Use vendor-specific SQL

- Rely on engine-specific behavior

This makes ODBC flexible—but not truly database-agnostic.

ODBC vs True Abstraction Layers

This is where ODBC differs from ORMs or persistence frameworks that aim for full abstraction.

- ODBC: Gives you a common door and does not prevent database-specific usage

- ORM-style frameworks: Try to hide database differences and enforce a common conceptual model

ODBC does not protect you from database specificity—it permits it.

ODBC in .NET: Avoiding Native Database Dependencies

This is an often-overlooked advantage of ODBC, especially in .NET applications.

ADO.NET is interface-driven:

IDbConnectionIDbCommandIDataReader

However, each database requires its own concrete provider:

- SQL Server

- Oracle

- DB2

- Pervasive

- PostgreSQL

- MySQL

Each provider introduces:

- Native binaries

- Vendor SDKs

- Version compatibility issues

- Deployment complexity

Your code may be abstract — your deployment is not.

ODBC as a Binary Abstraction Layer

When using ODBC in .NET, your application depends on one provider only:

System.Data.Odbc

Database-specific dependencies are moved:

- Out of your application

- Into the operating system

- Into driver configuration

This turns ODBC into a dependency firewall.

Minimal .NET Example: ODBC vs Native Provider

Native ADO.NET Provider (Example: SQL Server)

using System.Data.SqlClient;

using var connection =

new SqlConnection("Server=.;Database=AppDb;Trusted_Connection=True;");

connection.Open();

Implications:

- Requires SQL Server client libraries

- Ties the binary to SQL Server

- Changing database = new provider + rebuild

ODBC Provider (Database-Agnostic Binary)

using System.Data.Odbc;

using var connection =

new OdbcConnection("DSN=AppDatabase");

connection.Open();

Implications:

- Same binary works for SQL Server, Oracle, DB2, etc.

- No vendor-specific DLLs in the app

- Database choice is externalized

The SQL inside the connection may still be database-specific — but your application binary is not.

Trade-Offs (And Why They’re Acceptable)

Using ODBC means:

- Fewer vendor-specific optimizations

- Possible performance differences

- Reliance on driver quality

But in exchange, you gain:

- Simpler deployments

- Easier migrations

- Longer application lifespan

- Reduced vendor lock-in

For many enterprise systems, this is a strategic win.

What’s Next – Phase 2: Customer Polish

Phase 1 is about making it work.

Phase 2 is about making it survivable for customers.

In Phase 2, ODBC shines by enabling:

- Zero-code database switching

- Cleaner installers

- Fewer runtime surprises

- Support for customer-controlled environments

- Reduced friction in on-prem deployments

This is where architecture meets reality.

Customers don’t care how elegant your abstractions are — they care that your software runs on their infrastructure without drama.

Project References

Minimal and explicit:

System.Data

System.Data.Odbc

Optional (native providers, when required):

System.Data.SqlClient

Oracle.ManagedDataAccess

IBM.Data.DB2

ODBC allows these to become optional, not mandatory.

Closing Thought

ODBC never promised purity.

It promised compatibility.

Just like FoxPro once gave us everything in one place, ODBC gave us a way out — without burning everything down.

Decades later, that trade-off still matters.

by Joche Ojeda | May 12, 2025 | C#, SivarErp

Welcome back to our ERP development series! In previous days, we’ve covered the foundational architecture, database design, and core entity structures for our accounting system. Today, we’re tackling an essential but often overlooked aspect of any enterprise software: data import and export capabilities.

Why is this important? Because no enterprise system exists in isolation. Companies need to move data between systems, migrate from legacy software, or simply handle batch data operations. In this article, we’ll build robust import/export services for the Chart of Accounts, demonstrating principles you can apply to any part of your ERP system.

The Importance of Data Exchange

Before diving into the code, let’s understand why dedicated import/export functionality matters:

- Data Migration – When companies adopt your ERP, they need to transfer existing data

- System Integration – ERPs need to exchange data with other business systems

- Batch Processing – Accountants often prepare data in spreadsheets before importing

- Backup & Transfer – Provides a simple way to backup or transfer configurations

- User Familiarity – Many users are comfortable working with CSV files

CSV (Comma-Separated Values) is our format of choice because it’s universally supported and easily edited in spreadsheet applications like Excel, which most business users are familiar with.

Our Implementation Approach

For our Chart of Accounts module, we’ll create:

- A service interface defining import/export operations

- A concrete implementation handling CSV parsing/generation

- Unit tests verifying all functionality

Our goal is to maintain clean separation of concerns, robust error handling, and clear validation rules.

Defining the Interface

First, we define a clear contract for our import/export service:

/// <summary>

/// Interface for chart of accounts import/export operations

/// </summary>

public interface IAccountImportExportService

{

/// <summary>

/// Imports accounts from a CSV file

/// </summary>

/// <param name="csvContent">Content of the CSV file as a string</param>

/// <param name="userName">User performing the operation</param>

/// <returns>Collection of imported accounts and any validation errors</returns>

Task<(IEnumerable<IAccount> ImportedAccounts, IEnumerable<string> Errors)> ImportFromCsvAsync(string csvContent, string userName);

/// <summary>

/// Exports accounts to a CSV format

/// </summary>

/// <param name="accounts">Accounts to export</param>

/// <returns>CSV content as a string</returns>

Task<string> ExportToCsvAsync(IEnumerable<IAccount> accounts);

}

Notice how we use C# tuples to return both the imported accounts and any validation errors from the import operation. This gives callers full insight into the operation’s results.

Implementing CSV Import

The import method is the more complex of the two, requiring:

- Parsing and validating the CSV structure

- Converting CSV data to domain objects

- Validating the created objects

- Reporting any errors along the way

Here’s our implementation approach:

public async Task<(IEnumerable<IAccount> ImportedAccounts, IEnumerable<string> Errors)> ImportFromCsvAsync(string csvContent, string userName)

{

List<AccountDto> importedAccounts = new List<AccountDto>();

List<string> errors = new List<string>();

if (string.IsNullOrEmpty(csvContent))

{

errors.Add("CSV content is empty");

return (importedAccounts, errors);

}

try

{

// Split the CSV into lines

string[] lines = csvContent.Split(new[] { "\r\n", "\r", "\n" }, StringSplitOptions.RemoveEmptyEntries);

if (lines.Length <= 1)

{

errors.Add("CSV file contains no data rows");

return (importedAccounts, errors);

}

// Assume first line is header

string[] headers = ParseCsvLine(lines[0]);

// Validate headers

if (!ValidateHeaders(headers, errors))

{

return (importedAccounts, errors);

}

// Process data rows

for (int i = 1; i < lines.Length; i++)

{

string[] fields = ParseCsvLine(lines[i]);

if (fields.Length != headers.Length)

{

errors.Add($"Line {i + 1}: Column count mismatch. Expected {headers.Length}, got {fields.Length}");

continue;

}

var account = CreateAccountFromCsvFields(headers, fields);

// Validate account

if (!_accountValidator.ValidateAccount(account))

{

errors.Add($"Line {i + 1}: Account validation failed for account {account.AccountName}");

continue;

}

// Set audit information

_auditService.SetCreationAudit(account, userName);

importedAccounts.Add(account);

}

return (importedAccounts, errors);

}

catch (Exception ex)

{

errors.Add($"Error importing CSV: {ex.Message}");

return (importedAccounts, errors);

}

}

Key aspects of this implementation:

- Early validation – We quickly detect and report basic issues like empty input

- Row-by-row processing – Each line is processed independently, allowing partial success

- Detailed error reporting – We collect specific errors with line numbers

- Domain validation – We apply business rules from

AccountValidator

- Audit trail – We set audit fields for each imported account

The ParseCsvLine method handles the complexities of CSV parsing, including quoted fields that may contain commas:

private string[] ParseCsvLine(string line)

{

List<string> fields = new List<string>();

bool inQuotes = false;

int startIndex = 0;

for (int i = 0; i < line.Length; i++)

{

if (line[i] == '"')

{

inQuotes = !inQuotes;

}

else if (line[i] == ',' && !inQuotes)

{

fields.Add(line.Substring(startIndex, i - startIndex).Trim().TrimStart('"').TrimEnd('"'));

startIndex = i + 1;

}

}

// Add the last field

fields.Add(line.Substring(startIndex).Trim().TrimStart('"').TrimEnd('"'));

return fields.ToArray();

}

Implementing CSV Export

The export method is simpler, converting domain objects to CSV format:

public Task<string> ExportToCsvAsync(IEnumerable<IAccount> accounts)

{

if (accounts == null || !accounts.Any())

{

return Task.FromResult(GetCsvHeader());

}

StringBuilder csvBuilder = new StringBuilder();

// Add header

csvBuilder.AppendLine(GetCsvHeader());

// Add data rows

foreach (var account in accounts)

{

csvBuilder.AppendLine(GetCsvRow(account));

}

return Task.FromResult(csvBuilder.ToString());

}

We take special care to handle edge cases like null or empty collections, making the API robust against improper usage.

Testing the Implementation

Our test suite verifies both the happy paths and various error conditions:

- Import validation – Tests for empty content, missing headers, etc.

- Export formatting – Tests for proper CSV generation, handling of special characters

- Round-trip integrity – Tests exporting and re-importing preserves data integrity

For example, here’s a round-trip test to verify data integrity:

[Test]

public async Task RoundTrip_ExportThenImport_PreservesAccounts()

{

// Arrange

var originalAccounts = new List<IAccount>

{

new AccountDto

{

Id = Guid.NewGuid(),

AccountName = "Cash",

OfficialCode = "11000",

AccountType = AccountType.Asset,

// other properties...

},

new AccountDto

{

Id = Guid.NewGuid(),

AccountName = "Accounts Receivable",

OfficialCode = "12000",

AccountType = AccountType.Asset,

// other properties...

}

};

// Act

string csv = await _importExportService.ExportToCsvAsync(originalAccounts);

var (importedAccounts, errors) = await _importExportService.ImportFromCsvAsync(csv, "Test User");

// Assert

Assert.That(errors, Is.Empty);

Assert.That(importedAccounts.Count(), Is.EqualTo(originalAccounts.Count));

// Check first account

var firstOriginal = originalAccounts[0];

var firstImported = importedAccounts.First();

Assert.That(firstImported.AccountName, Is.EqualTo(firstOriginal.AccountName));

Assert.That(firstImported.OfficialCode, Is.EqualTo(firstOriginal.OfficialCode));

Assert.That(firstImported.AccountType, Is.EqualTo(firstOriginal.AccountType));

// Check second account similarly...

}

Integration with the Broader System

This service isn’t meant to be used in isolation. In a complete ERP system, you’d typically:

- Add a controller to expose these operations via API endpoints

- Create UI components for file upload/download

- Implement progress reporting for larger imports

- Add transaction support to make imports atomic

- Include validation rules specific to your business domain

Design Patterns and Best Practices

Our implementation exemplifies several important patterns:

- Interface Segregation – The service has a focused, cohesive purpose

- Dependency Injection – We inject the

IAuditService rather than creating it

- Early Validation – We validate input before processing

- Detailed Error Reporting – We collect and return specific errors

- Defensive Programming – We handle edge cases and exceptions gracefully

Future Extensions

This pattern can be extended to other parts of your ERP system:

- Customer/Vendor Data – Import/export contact information

- Inventory Items – Handle product catalog updates

- Journal Entries – Process batch financial transactions

- Reports – Export financial data for external analysis

Conclusion

Data import/export capabilities are a critical component of any enterprise system. They bridge the gap between systems, facilitate migration, and support batch operations. By implementing these services with careful error handling and validation, we’ve added significant value to our ERP system.

In the next article, we’ll explore building financial reporting services to generate balance sheets, income statements, and other critical financial reports from our accounting data.

Stay tuned, and happy coding!

About Us

YouTube

https://www.youtube.com/c/JocheOjedaXAFXAMARINC

Our sites

Let’s discuss your XAF

This call/zoom will give you the opportunity to define the roadblocks in your current XAF solution. We can talk about performance, deployment or custom implementations. Together we will review you pain points and leave you with recommendations to get your app back in track

https://calendly.com/bitframeworks/bitframeworks-free-xaf-support-hour

Our free A.I courses on Udemy

by Joche Ojeda | May 5, 2025 | Boring systems, ERP

After returning home from an extended journey through the United States, Greece, and Turkey, I found myself contemplating a common challenge over my morning coffee. There are numerous recurring problems in system design and ORM (Object-Relational Mapping) implementation that developers face repeatedly.

To address these challenges, I’ve decided to tackle a system that most professionals are familiar with—an ERP (Enterprise Resource Planning) system—and develop a design that achieves three critical goals:

- Performance Speed: The system must be fast and responsive

- Technology Agnosticism: The architecture should be platform-independent

- Consistent Performance: The system should maintain its performance over time

Design Decisions

To achieve these goals, I’m implementing the following key design decisions:

- Utilizing the SOLID design principles to ensure maintainability and extensibility

- Building with C# and net9 to leverage its modern language features

- Creating an agnostic architecture that can be reimplemented in various technologies like DevExpress XAF or Entity Framework

Day 1: Foundational Structure

In this first article, I’ll propose an initial folder structure that may evolve as the system develops. I’ll also describe a set of base classes and interfaces that will form the foundation of our system.

You can find all the source code for this solution in the designated repository.

The Core Layer

Today we’re starting with the core layer—a set of interfaces that most entities will implement. The system design follows SOLID principles to ensure it can be easily reimplemented using different technologies.

Base Interfaces

Here’s the foundation of our interface hierarchy:

- IEntity: Core entity interface defining the Id property

- IAuditable: Interface for entities with audit information

- IArchivable: Interface for entities supporting soft delete

- IVersionable: Interface for entities with effective dating

- ITimeTrackable: Interface for entities requiring time tracking

Service Interfaces

To complement our entity interfaces, we’re also defining service interfaces:

- IAuditService: Interface for audit-related operations

- IArchiveService: Interface for archiving operations

Repo

egarim/SivarErp: Open Source ERP

Next Steps

In upcoming articles, I’ll expand on this foundation by implementing concrete classes, developing the domain layer, and demonstrating how this architecture can be applied to specific ERP modules.

The goal is to create a reference architecture that addresses the recurring challenges in system design while remaining adaptable to different technological implementations.

Stay tuned for the next installment where we’ll dive deeper into the implementation details of our core interfaces.

About Us

YouTube

https://www.youtube.com/c/JocheOjedaXAFXAMARINC

Our sites

Let’s discuss your XAF

This call/zoom will give you the opportunity to define the roadblocks in your current XAF solution. We can talk about performance, deployment or custom implementations. Together we will review you pain points and leave you with recommendations to get your app back in track

https://calendly.com/bitframeworks/bitframeworks-free-xaf-support-hour

Our free A.I courses on Udemy