by Joche Ojeda | Jan 8, 2026 | netframework

If you’ve ever worked on a traditional .NET Framework application — the kind that predates .NET Core and .NET 5+ — this story may feel painfully familiar.

I’m talking about classic .NET Framework 4.x applications (4.0, 4.5, 4.5.1, 4.5.2, 4.6, 4.6.1, 4.6.2, 4.7, 4.7.1, 4.7.2, 4.8, and the final release 4.8.1). These systems often live long, productive lives… and accumulate interesting technical debt along the way.

This particular system is written in C# and relies heavily on COM components to render video, audio, and PDF content. Under the hood, many of these components are based on technologies like DirectShow filters, ActiveX controls, or other native COM DLLs.

And that’s where the story begins.

The Setup: COM, DirectShow, and Registration

Unlike managed .NET assemblies, COM components don’t just live quietly next to your executable. They need to be registered in the system registry so Windows knows:

- What CLSID they expose

- Which DLL implements that CLSID

- Whether it’s 32-bit or 64-bit

- How it should be activated

For DirectShow-based components (very common for video/audio playback in legacy apps), registration is usually done manually during development using regsvr32.

Example:

regsvr32 MyVideoFilter.dll

To unregister:

regsvr32 /u MyVideoFilter.dll

Important detail that bites a lot of people on 64-bit Windows:

- 32-bit DLLs must be registered using:

C:\Windows\SysWOW64\regsvr32.exe My32BitFilter.dll

- 64-bit DLLs must be registered using:

C:\Windows\System32\regsvr32.exe My64BitFilter.dll

Yes — the folder names are historically confusing.
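One way to take the guesswork out of this is to read the architecture from the DLL itself before picking a regsvr32. The sketch below is a minimal illustration, not part of the original system: it parses the Machine field of a PE header and maps it to the matching regsvr32 path on 64-bit (x64) Windows. The function names `pe_machine` and `regsvr32_for` are hypothetical helpers introduced for this example.

```python
import struct

# IMAGE_FILE_MACHINE_* values defined by the PE/COFF format.
MACHINE_NAMES = {0x014C: "x86", 0x8664: "x64", 0xAA64: "ARM64"}

# Which regsvr32.exe matches each DLL architecture on 64-bit (x64) Windows.
REGSVR32_FOR = {
    "x86": r"C:\Windows\SysWOW64\regsvr32.exe",
    "x64": r"C:\Windows\System32\regsvr32.exe",
}


def pe_machine(data: bytes) -> str:
    """Return the architecture name stored in a PE image's COFF header."""
    if data[:2] != b"MZ":
        raise ValueError("not a PE image: missing MZ signature")
    # e_lfanew (offset of the PE header) is stored at byte 0x3C of the DOS header.
    (pe_offset,) = struct.unpack_from("<I", data, 0x3C)
    if data[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("not a PE image: missing PE signature")
    # The 16-bit Machine field immediately follows the 4-byte PE signature.
    (machine,) = struct.unpack_from("<H", data, pe_offset + 4)
    return MACHINE_NAMES.get(machine, f"unknown (0x{machine:04X})")


def regsvr32_for(data: bytes) -> str:
    """Pick the regsvr32.exe whose bitness matches the DLL (hypothetical helper)."""
    arch = pe_machine(data)
    if arch not in REGSVR32_FOR:
        raise ValueError(f"no regsvr32 mapping for architecture {arch}")
    return REGSVR32_FOR[arch]
```

To use it on a real file, read the DLL in binary mode (for example `open("MyVideoFilter.dll", "rb").read()`) and pass the bytes to `regsvr32_for`.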

Development Works… Until It Doesn’t

So here’s the usual development flow:

- You register all required COM DLLs on your development machine

- Visual Studio runs the app

- Video plays, audio works, PDFs render

- Everyone is happy

Then comes the next step.

“Let’s build an installer.”

The Installer Paradox

This is where the real battle story begins.

Your application installer (MSI, InstallShield, WiX, Inno Setup — pick your poison) now needs to:

- Copy the COM DLLs

- Register them during installation

- Unregister them during uninstall

This seems reasonable… until you test it.

The Loop From Hell

Here’s what happens in practice:

- You install your app for testing

- The installer registers its own copies of the COM DLLs

- Your development environment was using different copies (maybe newer, maybe local builds)

- Suddenly:

  - Your source build stops working

  - Visual Studio debugging breaks

  - Another app on your machine mysteriously fails

Then you:

- Uninstall the app

- The installer unregisters the DLLs

- Now nothing works anymore

So you re-register the DLLs manually for development…

…and the cycle repeats.

The Battle Story: It Only Worked… Until It Didn’t

For a long time, this system appeared to work just fine.

Video played. Audio rendered. PDFs opened. No obvious errors.

What we didn’t realize at first was a dangerous hidden assumption:

The system only worked on machines where a previous version had already been installed.

Those older installations had left COM DLLs registered in the system — quietly, globally, and invisibly.

So when we deployed a new version without removing the old one:

- Everything looked fine

- No one suspected missing registrations

- The system passed casual testing

The illusion broke the moment we tried a clean installation.

On a fresh machine — no previous version, no leftover registry entries — the application suddenly failed:

- Components didn’t initialize

- Media rendering silently broke

- COM activation errors appeared only in Event Viewer

The installer claimed it was registering the DLLs.

In reality, it wasn’t doing it correctly — or at least not in the way the application actually needed.

That’s when we realized we were standing on years of accidental state.

Why This Happens

The core problem is simple but brutal:

COM registration is global and mutable.

There is:

- One registry

- One CLSID mapping

- One “active” DLL per COM component

Your development environment, your installed application, and your installer are all fighting over the same global state.
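The install/uninstall loop can be reproduced with a toy model. The sketch below is only a simulation, assuming a single registry view represented as a Python dictionary; the CLSID and the file paths are made up for illustration.

```python
# A toy model of one registry view: CLSID -> path of the DLL that serves it.
registry = {}

CLSID = "{00000000-0000-0000-0000-000000000001}"  # hypothetical CLSID


def register(clsid, dll_path):
    """Like regsvr32: the last writer wins and silently replaces earlier entries."""
    registry[clsid] = dll_path


def unregister(clsid):
    """Like regsvr32 /u: removes the entry regardless of who created it."""
    registry.pop(clsid, None)


def resolve(clsid):
    """What COM activation would find for this CLSID (None means activation fails)."""
    return registry.get(clsid)


def run_loop():
    """Replay the dev -> install -> uninstall cycle and record what resolves."""
    history = []
    register(CLSID, r"C:\dev\build\MyVideoFilter.dll")            # developer registers a local build
    history.append(resolve(CLSID))
    register(CLSID, r"C:\Program Files\MyApp\MyVideoFilter.dll")  # installer overwrites it
    history.append(resolve(CLSID))
    unregister(CLSID)                                             # uninstaller removes it
    history.append(resolve(CLSID))
    return history
```

Calling `run_loop()` returns the development path, then the installed path, then `None`: after the uninstall, neither the installed app nor the development build can activate the component.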

.NET Framework itself isn’t the villain here — it’s just sitting on top of an old Windows integration model that predates modern isolation concepts.

A New Player Enters: ARM64

Just when we thought the problem space was limited to x86 vs x64, another variable entered the scene.

One of the development machines was ARM64.

Modern Windows on ARM adds a new layer of complexity:

- ARM64 native processes

- x64 emulation

- x86 emulation on top of ARM64

From the outside, everything looks like it’s running on x64.

Under the hood, it’s not that simple.

Why This Makes COM Registration Worse

COM registration is architecture-specific:

- x86 DLLs register under one view of the registry

- x64 DLLs register under another

- ARM64 introduces yet another execution context

On Windows ARM:

- System32 contains ARM64 binaries

- SysWOW64 contains x86 binaries

- x64 binaries often run through emulation layers

So now the questions multiply:

- Which regsvr32 did the installer call?

- Was it ARM64, x64, or x86?

- Did the app run natively, or under emulation?

- Did the COM DLL match the process architecture?
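These questions can be turned into a small checklist. The sketch below is a simplified model with two assumptions stated up front: an in-process COM DLL must have the same architecture as the process loading it (hybrid formats such as ARM64EC are ignored), and x64 and ARM64 processes on Windows on ARM look at the same 64-bit registry view while x86 processes are redirected to WOW6432Node. The helper names are hypothetical.

```python
# Where in-process COM server registrations are looked up, per process architecture.
# Assumption: x64 (emulated) and ARM64 (native) processes share the 64-bit view.
CLSID_VIEW = {
    "x86": r"HKEY_CLASSES_ROOT\WOW6432Node\CLSID",
    "x64": r"HKEY_CLASSES_ROOT\CLSID",
    "ARM64": r"HKEY_CLASSES_ROOT\CLSID",
}


def inproc_server_key(process_arch, clsid):
    """Registry key a process of this architecture consults for an in-proc server."""
    return "\\".join((CLSID_VIEW[process_arch], clsid, "InprocServer32"))


def can_load_inproc(process_arch, dll_arch):
    """Simplified rule: the DLL architecture must equal the process architecture."""
    return process_arch == dll_arch


def diagnose(process_arch, dll_arch, clsid):
    """Summarize where to look and whether the DLL could load at all."""
    return {
        "registry_key": inproc_server_key(process_arch, clsid),
        "loadable": can_load_inproc(process_arch, dll_arch),
    }
```

For example, `diagnose("x64", "x86", clsid)` reports `loadable: False`, which corresponds to the case where the installer registered a 32-bit filter but the application runs as a 64-bit process.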

The result is a system where:

- Some things work on Intel machines

- Some things work on ARM machines

- Some things only work if another version was installed first

At this point, debugging stops being logical and starts being archaeological.

Why This Is So Common in .NET Framework 4.x Apps

Many enterprise and media-heavy applications built on:

- .NET Framework 4.0–4.8.1

- WinForms or WPF

- DirectShow or ActiveX components

were designed in an era where:

- Global COM registration was normal

- Side-by-side isolation was rare

- “Just register the DLL” was accepted practice

These systems work, but they’re fragile — especially on developer machines.

Where the Article Is Going Next

In the rest of this article series, we’ll look at:

- Why install-time registration is often a mistake

- How to isolate development vs runtime environments

- Techniques like:

  - Dedicated dev VMs

  - Registration-free COM (where possible)

  - App-local COM deployment

  - Clear ownership rules for installers

- How to survive (and maintain) legacy .NET Framework systems without losing your sanity

If you’ve ever broken your own development environment just by testing your installer — you’re not alone.

This is the cost of living at the intersection of managed code and unmanaged history.

by Joche Ojeda | Apr 20, 2025 | WSL

My Docker Adventure in Athens

Hello fellow tech enthusiasts!

I’m currently in Athens, Greece, enjoying a lovely Easter Sunday, and I decided to tackle a little tech project: getting Docker running on my Microsoft Surface with an ARM64 CPU. If you’ve ever tried to do this, you might know it’s not as straightforward as it sounds!

After some research, I discovered something important: there’s a difference between Docker Enterprise and Docker Community Edition (CE). While the enterprise version doesn’t support ARM64 yet, Docker CE does have versions for both ARM64 and x64 architectures. Perfect!

The WSL2 Solution

I initially tried to install Docker directly on Windows, but quickly ran into roadblocks. That’s when I decided to try the Windows Subsystem for Linux (WSL2) route instead. Spoiler alert: it worked like a charm!

While you won’t get the nice Docker Desktop UI that Windows users might be accustomed to, the command line interface through WSL2 works perfectly fine. After all, Docker was born on Linux, so running it in a Linux environment makes sense!

Step-by-Step Guide to Installing Docker CE on WSL2

Here’s how I got Docker CE up and running on my Surface using WSL2:

Step 1: Update Your Packages

First, make sure your WSL2 system is up to date:

sudo apt update && sudo apt upgrade -y

Step 2: Install Required Packages

Install the necessary packages to use HTTPS repositories:

sudo apt install -y apt-transport-https ca-certificates curl software-properties-common gnupg lsb-release

Step 3: Add Docker’s Official GPG Key

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /usr/share/keyrings/docker-archive-keyring.gpg

Step 4: Set Up the Stable Docker Repository

echo "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null
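To make the two shell substitutions in that command easier to read, here is a small sketch that builds the same repository line. The architecture and codename passed in the usage example are assumptions for illustration; on your system they come from `dpkg --print-architecture` and `lsb_release -cs`.

```python
KEYRING = "/usr/share/keyrings/docker-archive-keyring.gpg"
REPO_URL = "https://download.docker.com/linux/ubuntu"


def docker_repo_line(arch, codename):
    """Build the APT source line that the echo command writes to docker.list."""
    return f"deb [arch={arch} signed-by={KEYRING}] {REPO_URL} {codename} stable"
```

On an ARM64 device running an Ubuntu release with the codename `jammy` (an assumed example), `docker_repo_line("arm64", "jammy")` yields `deb [arch=arm64 signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu jammy stable`. The `arch=arm64` part is what makes APT pull the ARM64 build of Docker CE.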

Step 5: Update APT with the New Repository

sudo apt update

Step 6: Install Docker CE

sudo apt install -y docker-ce docker-ce-cli containerd.io

Step 7: Start the Docker Service

sudo service docker start

Step 8: Add Your User to the Docker Group

This allows you to run Docker without sudo:

sudo usermod -aG docker $USER

Step 9: Apply the Group Changes

Either log out and back in, or run:

newgrp docker

Step 10: Verify Your Installation

docker --version

docker run hello-world

Pro Tip!

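If you want Docker to start automatically when you launch WSL2, add the service start command to your .bashrc or .zshrc file. Keep in mind that the command then runs for every new shell and asks for your sudo password unless passwordless sudo is configured for it: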

echo "sudo service docker start" >> ~/.bashrc

Final Thoughts

What started as a potentially frustrating experience turned into a surprisingly smooth process. WSL2 continues to impress me with how well it bridges the Windows and Linux worlds. If you have a Surface or any other ARM64-based Windows device and need to run Docker, I highly recommend the WSL2 approach.

Have you tried running Docker on an ARM device? What was your experience like? Let me know in the comments below!

Happy containerizing! 🐳

by Joche Ojeda | May 24, 2024 | CPU

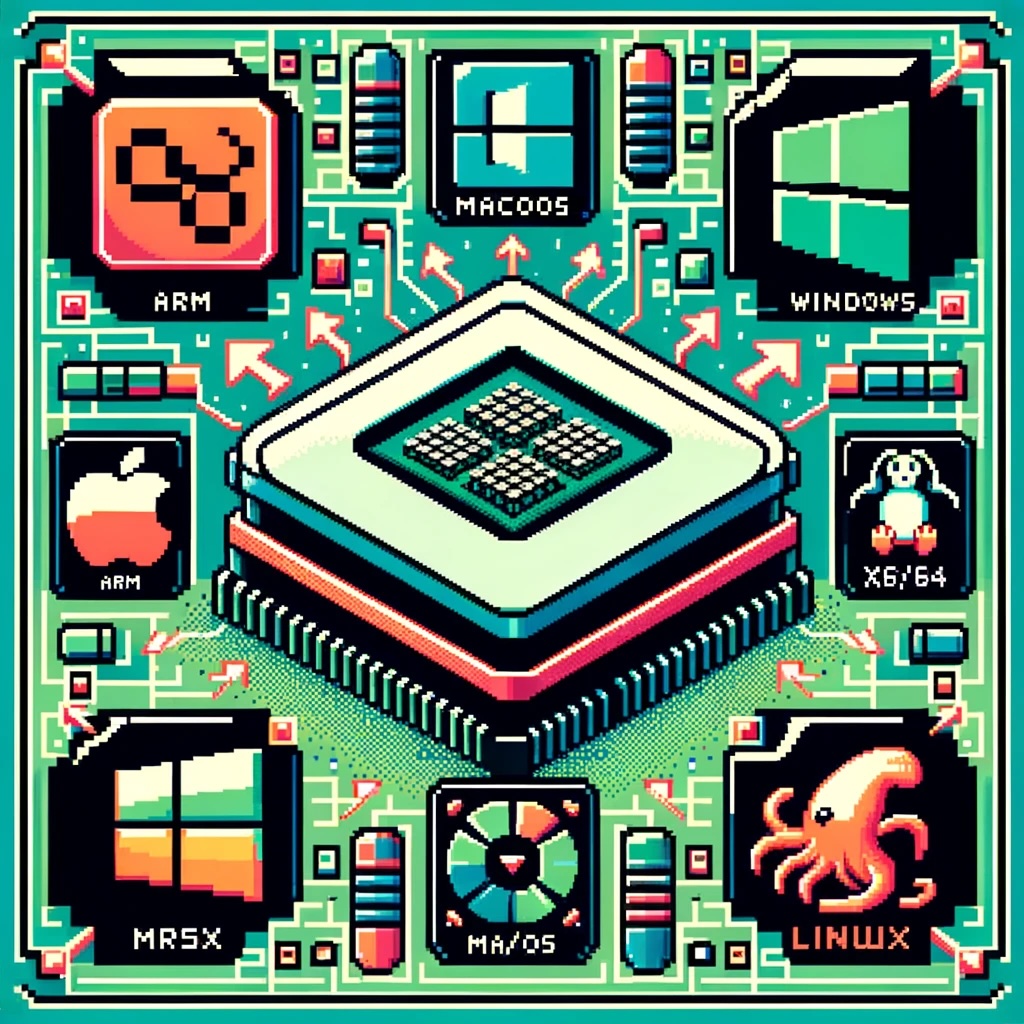

As technology continues to evolve, the need for seamless interoperability between different hardware architectures becomes increasingly crucial. One significant aspect of this interoperability is the ability to run software compiled for one CPU architecture on another. This blog post explores how CPU translation layers bridge ARM and x86/x64 on Windows, macOS, and Linux: x86/x64 applications on ARM devices in the case of Windows and macOS, and ARM binaries on x86/x64 hosts in the case of QEMU on Linux.

Windows OS: Bridging ARM and x86/x64

Microsoft’s approach to running x86/x64 applications on ARM hardware is embodied in Windows on ARM. This system allows ARM-based devices to run Windows efficiently, incorporating several key technologies:

- WOW64 (Windows 32-bit on Windows 64-bit): This subsystem provides compatibility for 32-bit x86 applications on ARM devices through a mix of emulation and native execution.

- x86/x64 Emulation: Windows 10 on ARM emulates 32-bit x86 applications, and Windows 11 on ARM adds emulation of x64 applications. The emulation layer dynamically translates x86/x64 instructions to ARM instructions at runtime, using Just-In-Time (JIT) compilation techniques to convert code as it is needed.

- Native ARM64 Support: To avoid the performance overhead associated with emulation, Microsoft encourages developers to compile their applications directly for ARM64.

macOS: The Power of Rosetta 2

Apple’s transition from Intel (x86/x64) to Apple Silicon (ARM) has been facilitated by Rosetta 2, a sophisticated translation layer designed to make this process as smooth as possible:

- Dynamic Binary Translation: Rosetta 2 converts x86_64 instructions to ARM instructions on-the-fly, enabling users to run x86_64 applications transparently on ARM-based Macs.

- Ahead-of-Time (AOT) Compilation: For some applications, Rosetta 2 can pre-translate x86_64 binaries to ARM before execution, boosting performance.

- Universal Binaries: Apple encourages developers to use Universal Binaries, which include both x86_64 and ARM64 executables, allowing the operating system to select the appropriate version based on the hardware.
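The selection logic behind Universal Binaries can be sketched in a few lines. This is a simplified model, not Apple's loader: it assumes the only translation available is x86_64 code on an arm64 host, and the function name `pick_slice` is hypothetical.

```python
def pick_slice(available, host_arch, translatable=("x86_64",)):
    """Choose which architecture slice to run and whether translation is needed.

    available: set of architectures contained in the binary
    host_arch: architecture of the machine, e.g. "arm64" or "x86_64"
    """
    # Prefer a native slice whenever the binary ships one.
    if host_arch in available:
        return host_arch, "native"
    # Otherwise fall back to a slice the translation layer can handle.
    if host_arch == "arm64":
        for arch in translatable:
            if arch in available:
                return arch, "translated"
    raise RuntimeError(f"no runnable slice for {host_arch} in {sorted(available)}")
```

A universal binary gives `pick_slice({"x86_64", "arm64"}, "arm64")` the result `("arm64", "native")`, while an Intel-only binary on the same machine gives `("x86_64", "translated")`.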

Linux: Flexibility with QEMU

Linux’s open-source nature provides a versatile approach to CPU translation through QEMU, a widely used emulator that supports various architectures, including ARM guests on x86/x64 hosts:

- User-mode Emulation: QEMU can run individual Linux executables compiled for ARM on an x86/x64 host by translating system calls and CPU instructions.

- Full-system Emulation: It can also emulate a complete ARM system, enabling an x86/x64 machine to run an ARM operating system and its applications.

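- Performance Enhancements: When the guest and host share the same architecture, QEMU can use KVM (Kernel-based Virtual Machine) to run guest instructions at near-native speed. KVM does not help when translating ARM code on an x86/x64 host; in that case QEMU relies on its software translator, TCG (Tiny Code Generator).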

How Translation Layers Work

The translation process involves several steps to ensure smooth execution of applications across different architectures:

- Instruction Fetch: The emulator fetches instructions from the source (ARM) binary.

- Instruction Decode: The fetched instructions are decoded into a format understandable by the translation layer.

- Instruction Translation:

  - JIT Compilation: Converts source instructions into target (x86/x64) instructions in real-time.

  - Caching: Frequently used translations are cached to avoid repeated translation.

- Execution: The translated instructions are executed on the target CPU.

- System Calls and Libraries:

  - System Call Translation: System calls from the source architecture are translated to their equivalents on the host architecture.

  - Library Mapping: Shared libraries from the source architecture are mapped to their counterparts on the host system.
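The fetch, decode, translate, cache, and execute steps above can be illustrated with a toy translator. The sketch below is deliberately simplified and rests on several assumptions: the "source ISA" is an invented four-instruction language rather than ARM, the "host code" is generated Python compiled with `exec` rather than x86/x64 machine code, and system calls are left out entirely.

```python
# Toy source program: address -> (opcode, operands). This is not a real ISA.
PROGRAM = {
    0: ("MOVI", "r0", 0),   # r0 = 0 (accumulator)
    1: ("MOVI", "r1", 3),   # r1 = 3 (loop counter)
    2: ("ADDI", "r0", 5),   # r0 += 5, loop body starts here
    3: ("ADDI", "r1", -1),  # r1 -= 1
    4: ("JNZ", "r1", 2),    # if r1 != 0, jump back to address 2
    5: ("HALT",),
}

translation_cache = {}  # block start address -> translated host function
stats = {"translated": 0, "cache_hits": 0}


def translate_block(pc):
    """Fetch and decode source instructions from pc up to the next branch,
    then emit and compile equivalent host (Python) code for the whole block."""
    lines = ["def block(regs):"]
    while True:
        op, *args = PROGRAM[pc]  # fetch + decode
        if op == "MOVI":
            lines.append(f"    regs[{args[0]!r}] = {args[1]}")
        elif op == "ADDI":
            lines.append(f"    regs[{args[0]!r}] += {args[1]}")
        elif op == "JNZ":
            # A branch ends the block; the host code returns the next address.
            lines.append(f"    return {args[1]} if regs[{args[0]!r}] != 0 else {pc + 1}")
            break
        elif op == "HALT":
            lines.append("    return None")
            break
        else:
            raise ValueError(f"unknown opcode {op}")
        pc += 1
    namespace = {}
    exec("\n".join(lines), namespace)  # stand-in for JIT code generation
    stats["translated"] += 1
    return namespace["block"]


def run(entry=0):
    """Execute the program block by block, translating each block at most once."""
    regs = {}
    pc = entry
    while pc is not None:
        block = translation_cache.get(pc)
        if block is None:
            block = translation_cache[pc] = translate_block(pc)
        else:
            stats["cache_hits"] += 1
        pc = block(regs)  # execute translated code; it returns the next address
    return regs
```

Running `run()` once returns `{"r0": 15, "r1": 0}`. The loop body is translated a single time and then served from the cache on the following iteration, which is the effect the caching step is meant to achieve.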

Performance Considerations

- Overhead: Emulation introduces overhead, which can impact performance, particularly for compute-intensive applications.

- Optimization Strategies: Techniques like ahead-of-time compilation, caching, and promoting native support help mitigate performance penalties.

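- Hardware Support: Some ARM processors include hardware features that make binary translation cheaper. Apple silicon, for example, can run translated code under an x86-style total store ordering (TSO) memory model, which spares Rosetta 2 from inserting extra memory barriers.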

Developer Considerations

For developers, ensuring compatibility and performance across different architectures involves several best practices:

- Cross-Compilation: Developers should compile their applications for multiple architectures to provide native performance on each platform.

- Extensive Testing: Applications must be tested thoroughly in both native and emulated environments to ensure compatibility and performance.

Conclusion

CPU translation layers are pivotal for maintaining software compatibility across different hardware architectures. By leveraging sophisticated techniques such as dynamic binary translation, JIT compilation, and system call translation, these layers bridge the gap between ARM and x86/x64 architectures on Windows, macOS, and Linux. As technology continues to advance, these translation layers will play an increasingly important role in enabling seamless interoperability across diverse computing environments.