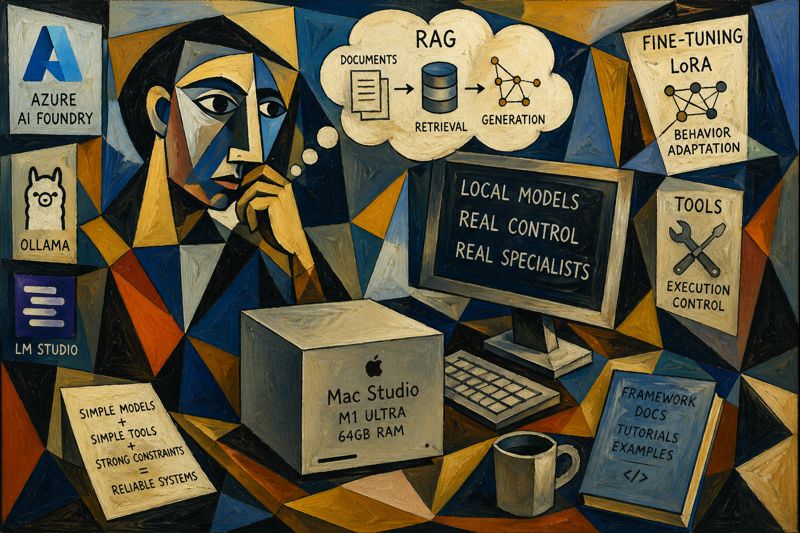

I was recently in Arizona and got my hands on a Mac Studio (M1 Ultra, 64GB RAM).

Naturally, I didn’t use it for video editing or music production. I used it for what actually matters:

Running local AI models and trying to bend them to my will.

The Setup

I went all-in and installed three main tools:

The goal was simple:

Try every possible way to make open-source models behave like something useful.

Not just chatting. Not just “vibe coding.” Actual controlled behavior.

The Reality Check

After working with frontier models like those from OpenAI and Anthropic, one thing becomes obvious:

They’re just better.

Not because of magic.

Because of:

- more training data

- better curated data

- more compute

- more iterations

- better eval loops

It’s not one thing. It’s everything combined.

You feel it immediately:

- better reasoning

- better language coverage

- better consistency

- fewer weird edge cases

So What’s the Point of Local Models?

This is where things get interesting.

Instead of trying to compete with frontier models, I flipped the approach:

Don’t build a general intelligence. Build a specialist.

The Core Idea: Single-Task Specialists

Instead of asking:

“How do I make this model as good as GPT or Claude?”

Ask:

“How do I make this model extremely good at ONE thing?”

That changes everything.

The Stack of Techniques

1. Base Model Selection

Small to mid-size models:

- models in the 7B–8B parameter range work best locally

2. RAG (Retrieval-Augmented Generation)

Inject knowledge at runtime:

- docs

- codebases

- tutorials

- architecture notes
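
To make "inject knowledge at runtime" concrete, here is a minimal sketch of the retrieval step. It is not what any particular RAG library does: it assumes your docs are already split into text chunks, and it swaps real embeddings for a plain bag-of-words cosine similarity so it runs with nothing but the standard library. The names `retrieve` and `build_prompt` and the prompt wording are my own placeholders.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query.

    Assumption: bag-of-words similarity stands in for an embedding model.
    """
    q = Counter(tokenize(query))
    ranked = sorted(
        chunks,
        key=lambda c: cosine(q, Counter(tokenize(c))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, chunks, k=2):
    """Put the retrieved chunks in front of the question (hypothetical template)."""
    context = "\n---\n".join(retrieve(query, chunks, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    docs = [
        "Controllers add actions to views.",
        "Business objects map to database tables.",
        "Validation rules run before saving objects.",
    ]
    print(build_prompt("How do I add validation rules?", docs, k=1))
```

The string that `build_prompt` returns is what you would send to the local model. Swapping the similarity function for real embeddings changes `retrieve` only; the rest of the flow stays the same.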

3. Behavior Tuning (LoRA / fine-tuning)

Instead of teaching knowledge, teach patterns:

- how to respond

- how to structure outputs

- how to write code

- how to fix bugs
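
Teaching patterns mostly comes down to preparing input/output pairs. The sketch below shows one way to write such pairs as JSONL. The `messages` layout with `system`/`user`/`assistant` roles is a common chat format, but whether your fine-tuning tool expects exactly this schema is an assumption to check. The example texts are made up for illustration.

```python
import json


def make_example(system, user, assistant):
    """Build one training record in a chat-style layout (assumed schema)."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    }


def to_jsonl(examples):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(e, ensure_ascii=False) for e in examples)


# Hypothetical system prompt; the point is that every record shares it,
# so the model learns one consistent way of responding.
SYSTEM = "You fix bugs. Reply with the corrected code only."

examples = [
    make_example(
        SYSTEM,
        "for i in range(len(xs) + 1): print(xs[i])",
        "for x in xs: print(x)",
    ),
    make_example(
        SYSTEM,
        "if x = 1: print('one')",
        "if x == 1: print('one')",
    ),
]

if __name__ == "__main__":
    print(to_jsonl(examples))
```

Notice that the records carry almost no knowledge. What they repeat is the shape of a good answer, which is the part the fine-tune is meant to learn.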

4. Tooling / Skills

Give the model bounded capabilities:

- limited toolset

- deterministic flows

- controlled execution
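
A bounded toolset can be as simple as an allowlist. In this sketch the model is assumed to emit a JSON object like `{"tool": "...", "args": {...}}`. That wire format, the tool names, and `run_tool_call` are all hypothetical. Anything not on the list is rejected instead of executed.

```python
import json


def word_count(text: str) -> int:
    """Toy tool: count whitespace-separated words."""
    return len(text.split())


def to_upper(text: str) -> str:
    """Toy tool: uppercase the text."""
    return text.upper()


# The allowlist: the only functions the model is ever allowed to trigger.
TOOLS = {"word_count": word_count, "to_upper": to_upper}


def run_tool_call(raw: str) -> dict:
    """Parse a model-emitted tool call and run it only if it is allowlisted."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return {"ok": False, "error": "invalid JSON"}
    if not isinstance(call, dict):
        return {"ok": False, "error": "tool call must be a JSON object"}

    name = call.get("tool")
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name!r}"}

    args = call.get("args", {})
    try:
        return {"ok": True, "result": TOOLS[name](**args)}
    except TypeError as exc:
        # Wrong or missing arguments, or args that are not a JSON object.
        return {"ok": False, "error": f"bad arguments: {exc}"}


if __name__ == "__main__":
    print(run_tool_call('{"tool": "word_count", "args": {"text": "a b c"}}'))
    print(run_tool_call('{"tool": "delete_everything", "args": {}}'))
```

Every path returns a plain dict, so the caller always gets a deterministic result it can feed back to the model or log.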

The Big Insight

The breakthrough for me was this:

Knowledge ≠ Behavior

You don’t train the model to know everything. You train it to behave correctly within a narrow domain.

Example: Framework Specialist

Let’s say you take a framework like DevExpress XAF.

You can build a local specialist by combining:

Knowledge Layer (RAG)

- official docs

- tutorials

- your internal patterns

- real-world examples

Behavior Layer (fine-tuning)

- “user asks → correct implementation”

- “buggy code → fixed code”

- “requirement → test → solution”

Tool Layer

- code generation

- validation scripts

- test runners

Now instead of a generic model, you get:

An XAF expert agent that runs locally.

Why This Works

Because you’re not fighting the limitations anymore.

You’re embracing them.

Small models:

- are faster

- are cheaper

- are controllable

- are predictable

And when you narrow the scope:

They become surprisingly good.

The Direction I’m Exploring

What really got my attention is this idea:

Simple models + simple tools + strong constraints = reliable systems

Not:

- giant models

- huge toolchains

- uncontrolled agents

But:

- small models

- few tools

- explicit rules

- tight loops
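
Here is a rough sketch of what "explicit rules + tight loops" can look like in code: generate, validate against a fixed rule, feed the error back, and stop after a few attempts. The `generate` callable stands in for whatever local model call you use. The required keys, the retry limit, and the feedback wording are illustrative assumptions.

```python
import json
from typing import Callable, Optional, Tuple

REQUIRED_KEYS = {"file", "patch"}  # hypothetical output contract
MAX_ATTEMPTS = 3                   # hard bound so the loop always ends


def validate(raw: str) -> Tuple[Optional[dict], Optional[str]]:
    """Return (data, None) if the output obeys the rules, else (None, reason)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None, "output is not valid JSON"
    if not isinstance(data, dict):
        return None, "output must be a JSON object"
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        return None, f"missing keys: {sorted(missing)}"
    return data, None


def constrained_generate(
    generate: Callable[[str], str], task: str
) -> Optional[dict]:
    """Call the model until its output passes validation or attempts run out."""
    prompt = task
    for _ in range(MAX_ATTEMPTS):
        data, error = validate(generate(prompt))
        if data is not None:
            return data
        # Tight loop: tell the model exactly which rule it broke.
        prompt = (
            f"{task}\n\nYour previous output was rejected: {error}. "
            "Reply with JSON only."
        )
    return None  # give up explicitly instead of passing bad output on


if __name__ == "__main__":
    # Fake model: fails once, then complies.
    replies = iter(["sure, here you go", '{"file": "a.py", "patch": "..."}'])
    print(constrained_generate(lambda prompt: next(replies), "fix the bug"))
```

The model never decides when it is done. The validator does, and the loop has a fixed ceiling.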

What’s Next

I’m going to keep experimenting and document everything:

- different models (Qwen, Llama, etc.)

- different training approaches (LoRA, no training, RAG-only)

- different tool strategies

- evaluation setups

- failure cases (this is the important part)
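
For the evaluation and failure-case part, a setup can start very small. This is a sketch under my own assumptions: `model` is any callable from prompt to output, each case pairs a prompt with a check function, and `run_eval` is a placeholder name. The important bit is that failures are kept, not just counted.

```python
from typing import Callable, List, Tuple

Case = Tuple[str, Callable[[str], bool]]


def run_eval(model: Callable[[str], str], cases: List[Case]) -> dict:
    """Run every case through the model and record the ones that fail."""
    failures = []
    for prompt, check in cases:
        output = model(prompt)
        if not check(output):
            # Keep the raw output: failure cases are the interesting data.
            failures.append({"prompt": prompt, "output": output})
    passed = len(cases) - len(failures)
    pass_rate = passed / len(cases) if cases else 0.0
    return {"pass_rate": pass_rate, "failures": failures}


if __name__ == "__main__":
    # Stand-in "model" that just uppercases its input.
    fake_model = lambda prompt: prompt.upper()
    cases = [
        ("abc", lambda out: out == "ABC"),
        ("x", lambda out: out == "y"),
    ]
    report = run_eval(fake_model, cases)
    print(report["pass_rate"])
    for failure in report["failures"]:
        print(failure)
```

Running the same cases against each model and training approach gives you a like-for-like comparison, plus a growing pile of failures to study.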

Final Thought

Frontier models are incredible.

But local models are hackable.

And that’s the real advantage.

You can shape them.

You can constrain them.

You can turn them into specialists.

And for real-world systems, that might actually be more valuable than raw intelligence.