by Joche Ojeda | Oct 16, 2025 | Oqtane

OK, I’ve been wanting to write this article for a few days now, but I’ve been vibing a lot — writing tons of prototypes and working on my Oqtane research. This morning I got blocked by GitHub Copilot because I hit the rate limit, so I can’t use it for a few hours. I figured that’s a sign to take a break and write some articles instead.

Actually, I’m not really “writing” — I’m using the Windows dictation feature (Windows key + H). So right now, I’m just having coffee and talking to my computer. I’m still in El Salvador with my family, and it’s like 5:00 AM here. My mom probably thinks I’ve gone crazy because I’ve been talking to my computer a lot lately. Even when I’m coding, I use dictation instead of typing, because sometimes it’s just easier to express yourself when you talk. When you type, you tend to shorten things, but when you talk, you can go on forever, right?

Anyway, this article is about Oqtane, specifically something that’s been super useful for me — how to set up a silent installation. Usually, when you download the Oqtane source or use the templates to create a new project or solution, and then run the server project, you’ll see the setup wizard first. That’s where you configure the database, email, host password, default theme, and all that.

Since I’ve been doing tons of prototypes, I’ve seen that setup screen thousands of times per day. So I downloaded the Oqtane source and started digging through it — using Copilot to generate guides whenever I got stuck. Honestly, the best way to learn is always by looking at the source code. I learned that the hard way years ago with XAF from DevExpress — there was no documentation back then, so I had to figure everything out manually and even assemble the projects myself because they weren’t in one solution. With Oqtane, it’s way simpler: everything’s in one place, just a few main projects.

Now, when I run into a problem, I just open the source code and tell Copilot, “OK, this is what I want to do. Help me figure it out.” Sometimes it goes completely wrong (as all AI tools do), but sometimes it nails it and produces a really good guide.

So the guide below was generated with Copilot, and it’s been super useful. I’ve been using it a lot lately, and I think it’ll save you a ton of time if you’re doing automated deployment with Oqtane.

I don’t want to take more of your time, so here it goes — I hope it helps you as much as it helped me.

Oqtane Installation Configuration Guide

This guide explains the configuration options available in the appsettings.json file under the Installation section for automated installation and default site settings.

Overview

The Installation section in appsettings.json controls the automated installation process and default settings for new sites in Oqtane. These settings are particularly useful for:

- Automated installations – Deploy Oqtane without manual configuration

- Development environments – Quickly spin up new instances

- Multi-tenant deployments – Standardize new site creation

- CI/CD pipelines – Automate deployment processes

Configuration Structure

```json
{
  "Installation": {
    "DefaultAlias": "",
    "HostPassword": "",
    "HostEmail": "",
    "SiteTemplate": "",
    "DefaultTheme": "",
    "DefaultContainer": ""
  }
}
```

| Key | Purpose | Required |
|---|---|---|
| DefaultAlias | Initial site URL(s) | ✅ |
| HostPassword | Super admin password | ✅ |
| HostEmail | Super admin email | ✅ |
| SiteTemplate | Initial site structure | Optional |
| DefaultTheme | Site appearance | Optional |
| DefaultContainer | Module wrapper style | Optional |
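To catch missing required values before the server starts (for example in a CI/CD step), a small pre-flight check can read appsettings.json and report which of the three required Installation keys in the table are missing or blank. This is a minimal C# sketch using only System.Text.Json; MissingRequiredInstallationKeys is a hypothetical helper, not part of Oqtane, and it only checks that values are present, not that Oqtane will accept them.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.Json;
using System.Text.Json.Nodes;

// Hypothetical pre-flight check: returns the required Installation keys
// that are missing or blank in an appsettings.json document.
static IReadOnlyList<string> MissingRequiredInstallationKeys(string appSettingsJson)
{
    string[] required = { "DefaultAlias", "HostPassword", "HostEmail" };

    // appsettings.json may contain comments and trailing commas, so allow them when parsing
    var documentOptions = new JsonDocumentOptions
    {
        CommentHandling = JsonCommentHandling.Skip,
        AllowTrailingCommas = true
    };
    var root = JsonNode.Parse(appSettingsJson, documentOptions: documentOptions) as JsonObject;
    var installation = root?["Installation"] as JsonObject;

    // A key counts as missing when the section, the key, or its string value is absent or blank
    return required
        .Where(key => string.IsNullOrWhiteSpace(installation?[key]?.GetValue<string>()))
        .ToList();
}

var sample = "{ \"Installation\": { \"DefaultAlias\": \"localhost\", \"HostPassword\": \"\" } }";
Console.WriteLine(string.Join(", ", MissingRequiredInstallationKeys(sample))); // HostPassword, HostEmail
```

In a pipeline you would pass File.ReadAllText("appsettings.json") instead of the inline sample and fail the build when the list is not empty.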

SiteTemplate

A Site Template defines the initial structure and content of a new site, including pages, modules, folders, and navigation.

"SiteTemplate": "Oqtane.Infrastructure.SiteTemplates.DefaultSiteTemplate, Oqtane.Server"

Default options:

- DefaultSiteTemplate – Home, Privacy, example content

- EmptySiteTemplate – Minimal, clean slate

- AdminSiteTemplate – Internal use

If empty, Oqtane uses the default template automatically.

DefaultTheme

A Theme controls the visual appearance and layout of your site (page structure, navigation, header/footer, and styling).

"DefaultTheme": "Oqtane.Themes.OqtaneTheme.Default, Oqtane.Client"

Built-in themes:

- Oqtane Theme (default) – clean and responsive

- Blazor Theme – Blazor-branded styling

- Bootswatch variants – Cerulean, Cosmo, Darkly, Flatly, Lux, etc.

- Corporate Theme – business layout

If left blank, it defaults to the Oqtane Theme.

DefaultContainer

A Container is the wrapper around each module, controlling how titles, buttons, and borders look.

"DefaultContainer": "Oqtane.Themes.OqtaneTheme.Container, Oqtane.Client"

Common containers:

- OqtaneTheme.Container – standard and responsive

- AdminContainer – management modules

- Theme-specific containers – match the chosen theme

If left empty, Oqtane uses its default container.

Example Configurations

Minimal Configuration

```json
{
  "Installation": {
    "DefaultAlias": "localhost",
    "HostPassword": "YourSecurePassword123!",
    "HostEmail": "admin@example.com"
  }
}
```

Custom Theme and Container

```json
{
  "Installation": {
    "DefaultAlias": "localhost",
    "HostPassword": "YourSecurePassword123!",
    "HostEmail": "admin@example.com",
    "SiteTemplate": "Oqtane.Infrastructure.SiteTemplates.DefaultSiteTemplate, Oqtane.Server",
    "DefaultTheme": "Oqtane.Theme.Bootswatch.Flatly.Default, Oqtane.Theme.Bootswatch.Oqtane",
    "DefaultContainer": "Oqtane.Theme.Bootswatch.Flatly.Container, Oqtane.Theme.Bootswatch.Oqtane"
  }
}
```

Troubleshooting

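- Settings ignored during installation: Ensure all required fields are filled in (DefaultAlias, HostPassword, HostEmail) and that the database settings (ConnectionStrings:DefaultConnection and DefaultDBType) are also set. Without a connection string, Oqtane shows the setup wizard instead.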

- Theme not found: Check assembly reference and type name.

- Container displays incorrectly: Use a container matching your theme.

- Site template creates no pages: Ensure your template returns valid page definitions.

Logs can be found in Logs/oqtane-log-YYYYMMDD.txt.
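Because SiteTemplate, DefaultTheme and DefaultContainer are assembly-qualified type names ("Namespace.Type, AssemblyName"), a typo in either part is a common cause of the "Theme not found" case. The sketch below uses .NET's Type.GetType to check whether each configured name resolves, assuming you run it from code that already references the Oqtane assemblies (for example inside the Server project). ResolveConfiguredType is a hypothetical helper, and Oqtane's own loader may resolve types differently, so treat a successful lookup as a sanity check rather than a guarantee.

```csharp
using System;

// Hypothetical diagnostic: does an assembly-qualified name from the Installation section
// resolve to a type in the current process? Empty means "use Oqtane's default", so skip it.
static Type? ResolveConfiguredType(string assemblyQualifiedName)
{
    if (string.IsNullOrWhiteSpace(assemblyQualifiedName))
        return null;

    // throwOnError: false returns null instead of throwing when the type or assembly is missing
    return Type.GetType(assemblyQualifiedName.Trim(), throwOnError: false);
}

string[] configured =
{
    "Oqtane.Themes.OqtaneTheme.Default, Oqtane.Client",
    "Oqtane.Themes.OqtaneTheme.Container, Oqtane.Client",
};

foreach (var name in configured)
{
    var type = ResolveConfiguredType(name);
    Console.WriteLine(type is null ? $"NOT FOUND: {name}" : $"OK: {type.FullName}");
}
```

In a bare console app without references to Oqtane.Client, both lines report NOT FOUND, which is expected.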

Best Practices

- Match your theme and container.

- Leave defaults empty unless customization is needed.

- Test in development first.

- Document any custom templates or themes.

- Use environment-specific appsettings files (e.g. appsettings.Development.json).
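For CI/CD pipelines it can be safer to keep HostPassword out of appsettings files altogether. Assuming the Oqtane host loads environment variables into its configuration (the default ASP.NET Core host builders do), a key such as Installation:HostPassword can be supplied as the environment variable Installation__HostPassword, because the environment-variable provider treats a double underscore as the section separator. This sketch only prints the names and values; ToEnvironmentVariableName is a hypothetical helper and the values are placeholders.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper: maps a configuration key ("Section:Key") to the environment-variable
// name that ASP.NET Core's environment-variable provider maps back to it ("Section__Key").
static string ToEnvironmentVariableName(string configurationKey) =>
    configurationKey.Replace(":", "__");

// Placeholder values; in a pipeline the password would come from a secret store
var installSettings = new Dictionary<string, string>
{
    ["Installation:DefaultAlias"] = "localhost",
    ["Installation:HostEmail"] = "admin@example.com",
    ["Installation:HostPassword"] = "<from your secret store>",
};

foreach (var (key, value) in installSettings)
    Console.WriteLine($"{ToEnvironmentVariableName(key)}={value}");
```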

Summary

The Installation configuration in appsettings.json lets you fully automate your Oqtane setup.

- SiteTemplate: defines structure

- DefaultTheme: defines appearance

- DefaultContainer: defines module layout

Empty values use defaults, and you can override them for automation, branding, or custom scenarios.

by Joche Ojeda | Oct 5, 2025 | Oqtane, ORM

In this article, I’ll show you what to do after you’ve obtained and opened an Oqtane solution. Specifically, we’ll go through two different ways to set up your database for the first time.

- Using the setup wizard — this option appears automatically the first time you run the application.

- Configuring it manually, by directly editing the appsettings.json file so the wizard is skipped.

Both methods achieve the same result. The only difference is that, if you configure the database manually, you won’t see the setup wizard during startup.

Step 1: Running the Application for the First Time

Once your solution is open in Visual Studio, set the Server project as the startup project. Then run it just as you would with any ASP.NET Core application.

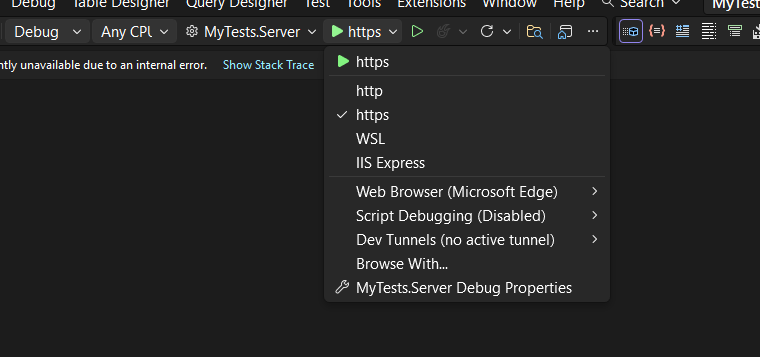

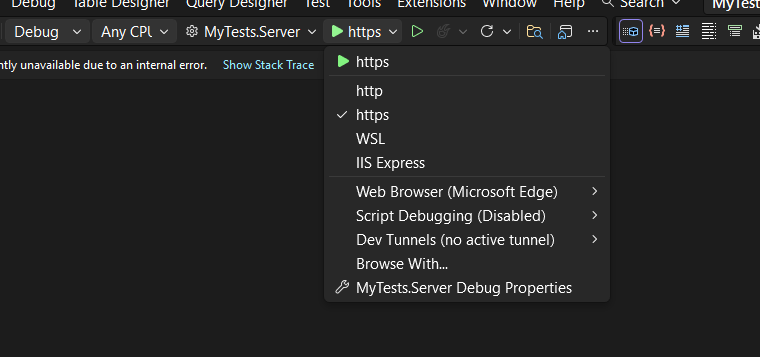

You’ll notice several run options — I recommend using the HTTPS version instead of IIS Express (I stopped using IIS Express because it doesn’t work well on ARM-based computers).

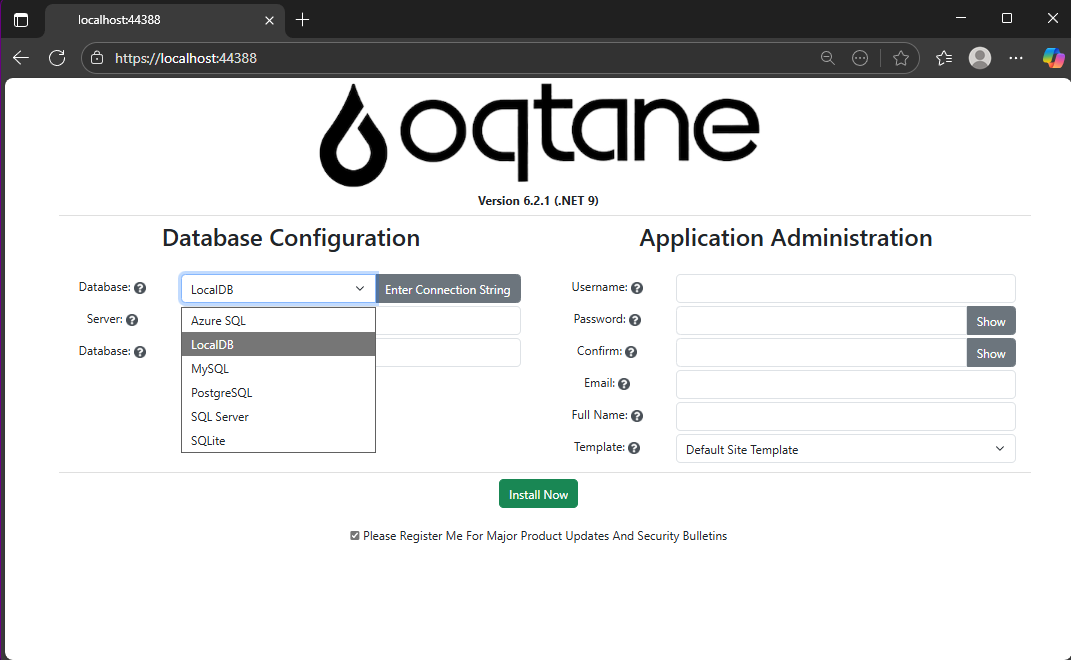

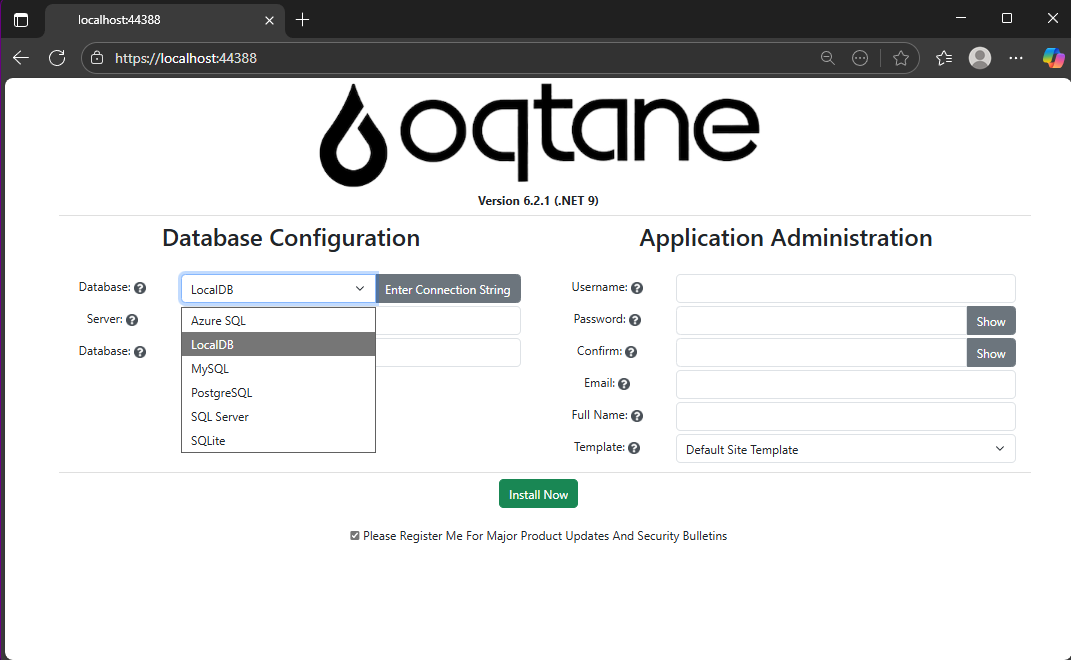

When you run the application for the first time and appsettings.json does not yet contain database settings, you’ll see the Database Setup Wizard. As shown in the image, the wizard lets you select a database provider and configure it through a form.

There’s also an option to paste your connection string directly. Make sure it’s a valid Entity Framework Core connection string.

After that, fill in the admin user’s details — username, email, and password — and you’re done. Once this process completes, you’ll have a working Oqtane installation.

Step 2: Setting Up the Database Manually

If you prefer to skip the wizard, you can configure the database manually. To do this, open the appsettings.json file and add the following parameters:

```json
{
  "DefaultDBType": "Oqtane.Database.Sqlite.SqliteDatabase, Oqtane.Server",
  "ConnectionStrings": {
    "DefaultConnection": "Data Source=Oqtane-202510052045.db;"
  },
  "Installation": {
    "DefaultAlias": "https://localhost:44388",
    "HostPassword": "MyPasswor25!",
    "HostEmail": "joche@myemail.com",
    "SiteTemplate": "",
    "DefaultTheme": "",
    "DefaultContainer": ""
  }
}
```

Here you need to specify:

- The database provider type (for example SQLite, SQL Server, or PostgreSQL)

- The connection string

- The default alias: the URL the first site responds to

- The email and password for the first user, known as the host user (essentially the root or super admin).
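If you reset prototypes often, the manual configuration can be scripted. This minimal C# sketch, using only System.Text.Json, merges the settings shown above into an existing appsettings.json document instead of overwriting it, so other sections are preserved. The helper names, the OQTANE_HOST_PASSWORD environment variable, and the timestamped database file name (which only mimics the example above) are assumptions for illustration, not Oqtane APIs.

```csharp
using System;
using System.Globalization;
using System.Text.Json;
using System.Text.Json.Nodes;

// Returns the child object called `name`, creating it if it is missing or not an object
static JsonObject GetOrAddObject(JsonObject parent, string name)
{
    if (parent[name] is JsonObject existing) return existing;
    var created = new JsonObject();
    parent[name] = created;
    return created;
}

// Hypothetical helper: applies SQLite + Installation settings to existing appsettings JSON
static string ApplySilentInstallSettings(string existingJson, string alias,
    string hostEmail, string hostPassword, DateTime timestamp)
{
    var documentOptions = new JsonDocumentOptions
    {
        CommentHandling = JsonCommentHandling.Skip,
        AllowTrailingCommas = true
    };
    var root = JsonNode.Parse(existingJson, documentOptions: documentOptions) as JsonObject
               ?? new JsonObject();

    root["DefaultDBType"] = "Oqtane.Database.Sqlite.SqliteDatabase, Oqtane.Server";

    // Invariant culture so the year is always Gregorian digits, e.g. 202510052045
    var stamp = timestamp.ToString("yyyyMMddHHmm", CultureInfo.InvariantCulture);
    GetOrAddObject(root, "ConnectionStrings")["DefaultConnection"] = $"Data Source=Oqtane-{stamp}.db;";

    // SiteTemplate, DefaultTheme and DefaultContainer are left untouched (empty means default)
    var installation = GetOrAddObject(root, "Installation");
    installation["DefaultAlias"] = alias;
    installation["HostEmail"] = hostEmail;
    installation["HostPassword"] = hostPassword;

    return root.ToJsonString(new JsonSerializerOptions { WriteIndented = true });
}

// In a real script: read appsettings.json, apply the settings, and write the result back
var updated = ApplySilentInstallSettings(
    "{ \"Logging\": { \"LogLevel\": { \"Default\": \"Information\" } } }",
    "https://localhost:44388", "admin@example.com",
    Environment.GetEnvironmentVariable("OQTANE_HOST_PASSWORD") ?? "ChangeMe123!",
    DateTime.Now);
Console.WriteLine(updated);
```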

This is the method I usually use now since I’ve set up Oqtane so many times recently that I’ve grown tired of the wizard. However, if you’re new to Oqtane, the wizard is a great way to get started.

Wrapping Up

That’s it for this setup guide! By now, you should have a running Oqtane installation configured either through the setup wizard or manually via the configuration file. Both methods give you a solid foundation to start exploring what Oqtane can do.

In the next article, we’ll dive into the Oqtane backend, exploring how the framework handles modules, data, and the underlying architecture that makes it flexible and powerful. Stay tuned — things are about to get interesting!

by Joche Ojeda | Aug 5, 2025 | Auth, Linux, Ubuntu, WSL

In modern application development, managing user authentication and authorization across multiple systems has become a significant challenge. Keycloak emerges as a compelling solution to address these identity management complexities, offering particular value for .NET developers seeking flexible authentication options.

What is Keycloak?

Keycloak is an open-source Identity and Access Management (IAM) solution developed by Red Hat. It functions as a centralized authentication and authorization server that manages user identities and controls access across multiple applications and services within an organization.

Rather than each application handling its own user authentication independently, Keycloak provides a unified identity provider that enables Single Sign-On (SSO) capabilities. Users authenticate once with Keycloak and gain seamless access to all authorized applications without repeated login prompts.

Core Functionality

Keycloak serves as a comprehensive identity management platform that handles several critical functions. It manages user authentication through various methods including traditional username/password combinations, multi-factor authentication, and social login integration with providers like Google, Facebook, and GitHub.

Beyond authentication, Keycloak provides robust authorization capabilities, controlling what authenticated users can access within applications through role-based access control and fine-grained permissions. The platform supports industry-standard protocols including OpenID Connect, OAuth 2.0, and SAML 2.0, ensuring compatibility with a wide range of applications and services.

User federation capabilities allow Keycloak to integrate with existing user directories such as LDAP and Active Directory, enabling organizations to leverage their current user stores rather than requiring complete migration to new systems.

The Problem Keycloak Addresses

Modern users often experience “authentication fatigue” – the exhaustion that comes from repeatedly logging into multiple systems throughout their workday. A typical enterprise user might need to authenticate with email systems, project management tools, CRM platforms, cloud storage, HR portals, and various internal applications, each potentially requiring different credentials and authentication flows.

This fragmentation leads to several problems: users struggle with password management across multiple systems, productivity decreases due to time spent on authentication processes, security risks increase as users resort to password reuse or weak passwords, and IT support costs rise due to frequent password reset requests.

Keycloak eliminates these friction points by providing seamless SSO while simultaneously improving security through centralized identity management and consistent security policies.

Keycloak and .NET Integration

For .NET developers, Keycloak offers excellent compatibility through its support of standard authentication protocols. The platform’s adherence to OpenID Connect and OAuth 2.0 standards means it integrates naturally with .NET applications using Microsoft’s built-in authentication middleware.

.NET Core and .NET 5+ applications can integrate with Keycloak using the Microsoft.AspNetCore.Authentication.OpenIdConnect package, while older .NET Framework applications can use OWIN middleware. Blazor applications, both Server and WebAssembly variants, support the same integration patterns, and Web APIs can be secured with JWT bearer tokens issued by Keycloak through the Microsoft.AspNetCore.Authentication.JwtBearer package.

The integration process typically involves configuring authentication middleware in the .NET application to communicate with Keycloak’s endpoints, establishing client credentials, and defining appropriate scopes and redirect URIs. This standards-based approach ensures that .NET developers can leverage their existing knowledge of authentication patterns while benefiting from Keycloak’s advanced identity management features.
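The endpoint URLs are the part that most often goes wrong when wiring Keycloak into .NET. As a sketch, assuming Keycloak 17 or later (where realms are served under /realms/{realm}; older WildFly-based releases used /auth/realms/{realm}), the authority and the standard OpenID Connect endpoints can be derived from the server URL and the realm name. The helper names and the example host are hypothetical.

```csharp
using System;

// Realm-level authority, assuming the Keycloak 17+ URL layout (no /auth prefix)
static string KeycloakAuthority(string baseUrl, string realm) =>
    $"{baseUrl.TrimEnd('/')}/realms/{Uri.EscapeDataString(realm)}";

// Standard OpenID Connect discovery document for that authority
static string DiscoveryDocument(string authority) =>
    $"{authority}/.well-known/openid-configuration";

// Keycloak's token endpoint (useful for client-credentials calls or debugging)
static string TokenEndpoint(string authority) =>
    $"{authority}/protocol/openid-connect/token";

var authority = KeycloakAuthority("https://keycloak.example.com/", "my-realm");
Console.WriteLine(authority);
Console.WriteLine(DiscoveryDocument(authority));
Console.WriteLine(TokenEndpoint(authority));
```

The authority value is what you would assign to OpenIdConnectOptions.Authority (or JwtBearerOptions.Authority for a Web API), together with the client ID and secret registered in Keycloak; the middleware then reads the discovery document to find the remaining endpoints.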

Benefits for .NET Development

Keycloak offers several advantages for .NET developers and organizations. As an open-source solution, it provides cost-effectiveness compared to proprietary alternatives while offering extensive customization capabilities that proprietary solutions often restrict.

The platform reduces development time by handling complex authentication scenarios out-of-the-box, allowing developers to focus on business logic rather than identity management infrastructure. Security benefits include centralized policy management, regular security updates, and implementation of industry best practices.

Keycloak’s vendor-neutral approach provides flexibility for organizations using multiple cloud providers or seeking to avoid vendor lock-in. The solution scales effectively through clustered deployments and supports high-availability configurations suitable for enterprise environments.

Comparison with Microsoft Solutions

When compared to Microsoft’s identity offerings like Entra ID (formerly Azure AD), Keycloak presents different trade-offs. Microsoft’s solutions provide seamless integration within the Microsoft ecosystem and offer managed services with minimal maintenance requirements, but come with subscription costs and potential vendor lock-in considerations.

Keycloak, conversely, offers complete control over deployment and data, extensive customization options, and freedom from licensing fees. However, it requires organizations to manage their own infrastructure and maintain the necessary technical expertise.

When Keycloak Makes Sense

Keycloak represents an ideal choice for .NET developers and organizations that prioritize flexibility, cost control, and customization capabilities. It’s particularly suitable for scenarios involving multiple cloud providers, integration with diverse systems, or requirements for extensive branding and workflow customization.

Organizations with the technical expertise to manage infrastructure and those seeking vendor independence will find Keycloak’s open-source model advantageous. The solution also appeals to teams building applications that need to work across different technology stacks and cloud environments.

Conclusion

Keycloak stands as a robust, flexible identity management solution that integrates seamlessly with .NET applications through standard authentication protocols. Its open-source nature, comprehensive feature set, and standards-based approach make it a compelling alternative to proprietary identity management solutions.

For .NET developers seeking powerful identity management capabilities without vendor lock-in, Keycloak provides the tools necessary to implement secure, scalable authentication solutions while maintaining the flexibility to adapt to changing requirements and diverse technology environments.

by Joche Ojeda | Apr 2, 2025 | Testing

Over the last few days, I have been working on a chat prototype that uses SignalR. I’ve been trying to follow test-driven development (TDD) because I like the approach. I always try to find a way to test my code and define use cases so that when I’m refactoring or writing code, as long as the tests pass, I know everything is fine.

When working on ORM-related problems, testing is relatively easy: you can set up an in-memory provider, start from a clean database, perform your operations, and then discard it. When testing APIs, however, there are several approaches.

Some approaches are closer to unit tests: you instantiate a controller and pass values to it directly, mocking its dependencies. I prefer tests that behave more like integration tests. For example, I want to send a message to a chat and verify that the message was actually delivered. I want to exercise the complete set of moving parts and make sure they work together.

In this article, I want to explore how to do this type of test with REST APIs by creating a test host server. This test host creates two important things: a handler and an HTTP client. If you use the HTTP client, each HTTP operation (POST, GET, etc.) will be sent to the controllers that are set up for the test host. For the test host, you do the same configuration as you would for any other host – you can use a startup class or add the services you need and configure them.

I wanted to do the same for SignalR chat applications. In this case, you don’t need the HTTP client; you need the handler. This means that each request you make using that handler will be directed to the hub hosted on the HTTP test host.

Here’s the code that shows how to create the test host:

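```csharp
using Microsoft.AspNetCore.Hosting;
using Microsoft.AspNetCore.TestHost;
using Microsoft.Extensions.Hosting;

// ARRANGE
// Build and start a host that uses the in-memory TestServer instead of Kestrel
var hostBuilder = new HostBuilder()
    .ConfigureWebHost(webHost =>
    {
        webHost.UseTestServer();
        webHost.UseStartup<Startup>();
    });

var host = await hostBuilder.StartAsync();

// Get the TestServer instance from the running host
var server = host.GetTestServer();
```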

And now the code for handling SignalR connections:

```csharp
using Microsoft.AspNetCore.SignalR.Client;

// Create SignalR connection
var connection = new HubConnectionBuilder()
    .WithUrl("http://localhost/chathub", options =>
    {
        // Set up the connection to use the test server
        options.HttpMessageHandlerFactory = _ => server.CreateHandler();
    })
    .Build();

string? receivedUser = null;
string? receivedMessage = null;

// Set up a handler for received messages
connection.On<string, string>("ReceiveMessage", (user, message) =>
{
    receivedUser = user;
    receivedMessage = message;
});
```

If we take a closer look, the key piece is the test handler created by server.CreateHandler(). Because HttpMessageHandlerFactory returns that handler, the client’s HTTP requests go to the hub hosted in the in-memory test server instead of over the network.

Now let’s open a SignalR connection and see if we can connect to our test server:


```csharp
// ACT
// Start the connection
await connection.StartAsync();

// Send a test message through the hub
await connection.InvokeAsync("SendMessage", "TestUser", "Hello SignalR");

// Wait a moment for the message to be processed
await Task.Delay(100);

// ASSERT
// Verify the message was received correctly (NUnit constraint syntax)
Assert.That(receivedUser, Is.EqualTo("TestUser"));
Assert.That(receivedMessage, Is.EqualTo("Hello SignalR"));

// Clean up the client connection and the test host
await connection.DisposeAsync();
await host.StopAsync();
```

You can find the complete source of this example here: https://github.com/egarim/TestingSignalR/blob/master/UnitTest1.cs
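The fixed Task.Delay(100) in the test can make it flaky on a slow build agent. One common alternative, sketched here assuming .NET 6 or later (for Task.WaitAsync), is to complete a TaskCompletionSource from the message callback and await it with a timeout. To keep the snippet self-contained, a Task.Run stands in for the SignalR "ReceiveMessage" callback; OnReceiveMessage is a hypothetical name.

```csharp
using System;
using System.Threading.Tasks;

// Completed by the message callback; RunContinuationsAsynchronously avoids running
// the awaiting test code inline on the callback's thread
var received = new TaskCompletionSource<(string User, string Message)>(
    TaskCreationOptions.RunContinuationsAsynchronously);

// In the real test this body goes inside connection.On<string, string>("ReceiveMessage", ...)
void OnReceiveMessage(string user, string message) => received.TrySetResult((user, message));

// Simulate the hub pushing a message a little later, on another thread
_ = Task.Run(async () =>
{
    await Task.Delay(50);
    OnReceiveMessage("TestUser", "Hello SignalR");
});

// Wait for the callback, but fail with a TimeoutException instead of hanging if it never fires
var (user, message) = await received.Task.WaitAsync(TimeSpan.FromSeconds(5));
Console.WriteLine($"{user}: {message}");
```

The test then asserts on user and message directly, and it finishes as soon as the message arrives rather than after a guessed delay.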

by Joche Ojeda | Mar 12, 2025 | dotnet, http, netcore, netframework, network, WebServers

Last week, I was diving into Uno Platform to understand its UI paradigms. What particularly caught my attention is Uno’s ability to render a webapp using WebAssembly (WASM). Having worked with WASM apps before, I’m all too familiar with the challenges of connecting to data sources and handling persistence within these applications.

My Previous WASM Struggles

About a year ago, I faced a significant challenge: connecting a desktop WebAssembly app to an old WCF webservice. Despite having the CORS settings correctly configured (or so I thought), I simply couldn’t establish a connection from the WASM app to the server. I spent days troubleshooting both the WCF service and another ASMX service, but both attempts failed. Eventually, I had to resort to webserver proxies to achieve my goal.

This experience left me somewhat traumatized by the mere mention of “connecting WASM with an API.” However, the time came to face this challenge again during my weekend experiments.

A Pleasant Surprise with Uno Platform

This weekend, I wanted to connect a XAF REST API to an Uno Platform client. To my surprise, it turned out to be incredibly straightforward. I successfully performed this procedure twice: once with a XAF REST API and once with the API included in the Uno app template. The ease of this integration was a refreshing change from my previous struggles.

Understanding CORS and Why It Matters for WASM Apps

To understand why my previous attempts failed and my recent ones succeeded, it’s important to grasp what CORS is and why it’s crucial for WebAssembly applications.

What is CORS?

CORS (Cross-Origin Resource Sharing) is an HTTP-header-based mechanism that lets a server relax the browser’s same-origin policy. Through its response headers, the server indicates which origins (domains, schemes, or ports) other than its own are permitted to load its resources. Without those headers, the browser blocks a web page from reading responses from a domain other than the one that served the page.

The Same-Origin Policy

Browsers enforce a security restriction called the “same-origin policy”, which prevents scripts loaded from one origin from reading responses from another origin. An origin consists of:

- Protocol (HTTP, HTTPS)

- Domain name

- Port number

For example, if your website is hosted at https://myapp.com, it cannot make AJAX requests to https://myapi.com without the server explicitly allowing it through CORS.
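The origin comparison described above can be illustrated with System.Uri: two URLs share an origin only when scheme, host, and port all match, and the path does not matter. This is an illustrative sketch of the rule, not how browsers implement it; IsSameOrigin is a hypothetical helper.

```csharp
using System;

// Same origin = same scheme + same host + same port (path and query are ignored)
static bool IsSameOrigin(string a, string b)
{
    var x = new Uri(a);
    var y = new Uri(b);
    return string.Equals(x.Scheme, y.Scheme, StringComparison.OrdinalIgnoreCase)
        && string.Equals(x.Host, y.Host, StringComparison.OrdinalIgnoreCase)
        && x.Port == y.Port; // Uri.Port falls back to the scheme default (80/443) when omitted
}

Console.WriteLine(IsSameOrigin("https://myapp.com/page", "https://myapp.com/api/data")); // True
Console.WriteLine(IsSameOrigin("https://myapp.com", "https://myapi.com"));               // False: different host
Console.WriteLine(IsSameOrigin("https://localhost:5001", "https://localhost:5000"));     // False: different port
```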

Why CORS is Required for Blazor WebAssembly

Blazor WebAssembly (which uses similar principles to Uno Platform’s WASM implementation) is fundamentally different from Blazor Server in how it operates:

- Separate Deployment: Blazor WebAssembly apps are fully downloaded to the client’s browser and run entirely in the browser using WebAssembly. They’re typically hosted on a different server or domain than your API.

- Client-Side Execution: Since all code runs in the browser, when your Blazor WebAssembly app makes HTTP requests to your API, they’re treated as cross-origin requests if the API is hosted on a different domain, port, or protocol.

- Browser Security: Modern browsers block these cross-origin requests by default unless the server (your API) explicitly permits them via CORS headers.

Implementing CORS in Startup.cs

The solution to these CORS issues lies in properly configuring your server. In your Startup.cs file, you can configure CORS as follows:

```csharp
public void ConfigureServices(IServiceCollection services)
{
    services.AddCors(options =>
    {
        options.AddPolicy("AllowBlazorApp", builder =>
        {
            builder.WithOrigins("https://localhost:5000") // Replace with your Blazor app's URL (no trailing slash)
                   .AllowAnyHeader()
                   .AllowAnyMethod();
        });
    });

    // Other service configurations...
}

public void Configure(IApplicationBuilder app, IWebHostEnvironment env)
{
    // Other middleware configurations...

    // UseCors must run after UseRouting (if used) and before UseAuthorization/UseEndpoints
    app.UseCors("AllowBlazorApp");

    // Other middleware configurations...
}
```

Conclusion

My journey with connecting WebAssembly applications to APIs has had its ups and downs. What once seemed like an insurmountable challenge has now become much more manageable, especially with platforms like Uno that simplify the process. Understanding CORS and implementing it correctly is crucial for successful WASM-to-API communication.

If you’re working with WebAssembly applications and facing similar challenges, I hope my experience helps you avoid some of the pitfalls I encountered along the way.
